Japan Bolsters Legacy Chip Supply Chain: Impact on On-Premise AI

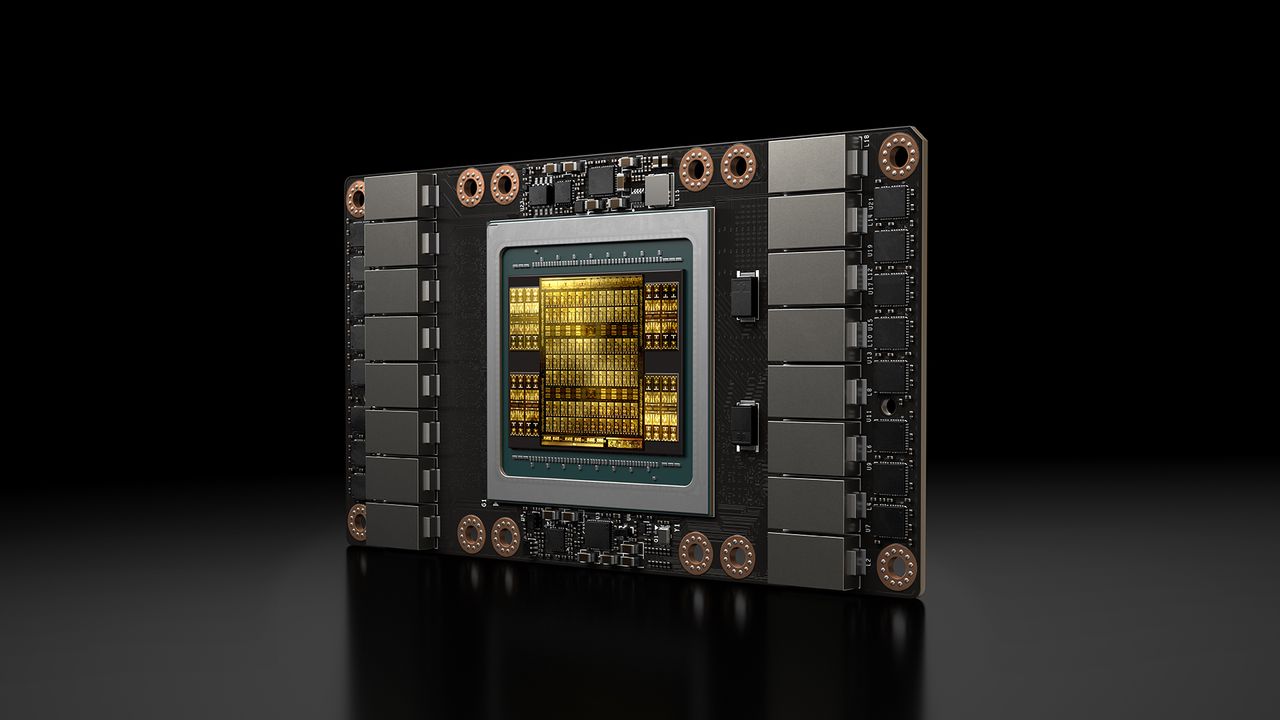

Japan is intensifying efforts to secure its supply chain for legacy (mature-node) chips. The move matters not only for traditional industries but also for the stability and predictability of on-premise AI deployments, where the availability of reliab...