Qwen3.6-27B vs Coder-Next: A Field Comparison for Large Language Models

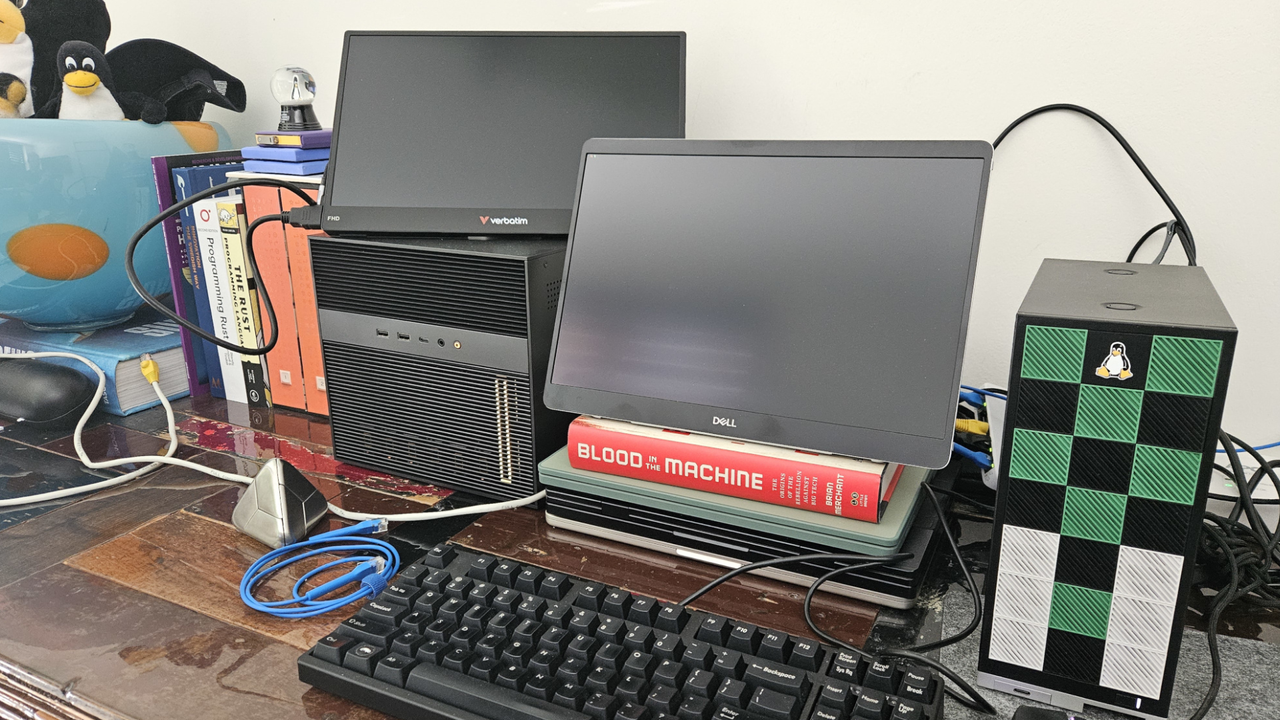

An in-depth analysis compared the large language models Qwen3.6-27B and Coder-Next on RTX PRO 6000 Blackwell hardware. The tests, conducted with an unconventional methodology, showed that the best model choice depends heavily on the specific wor...