Mercedes-Benz Taiwan adopts Nvidia's Alpamayo platform for new CLA

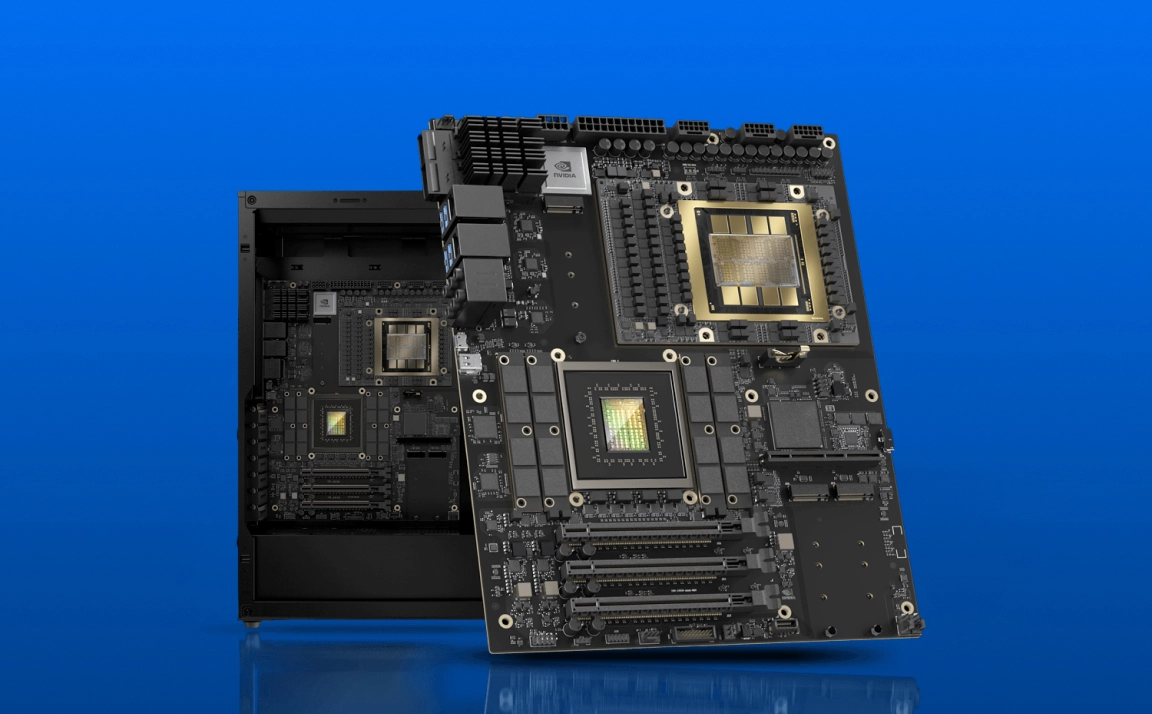

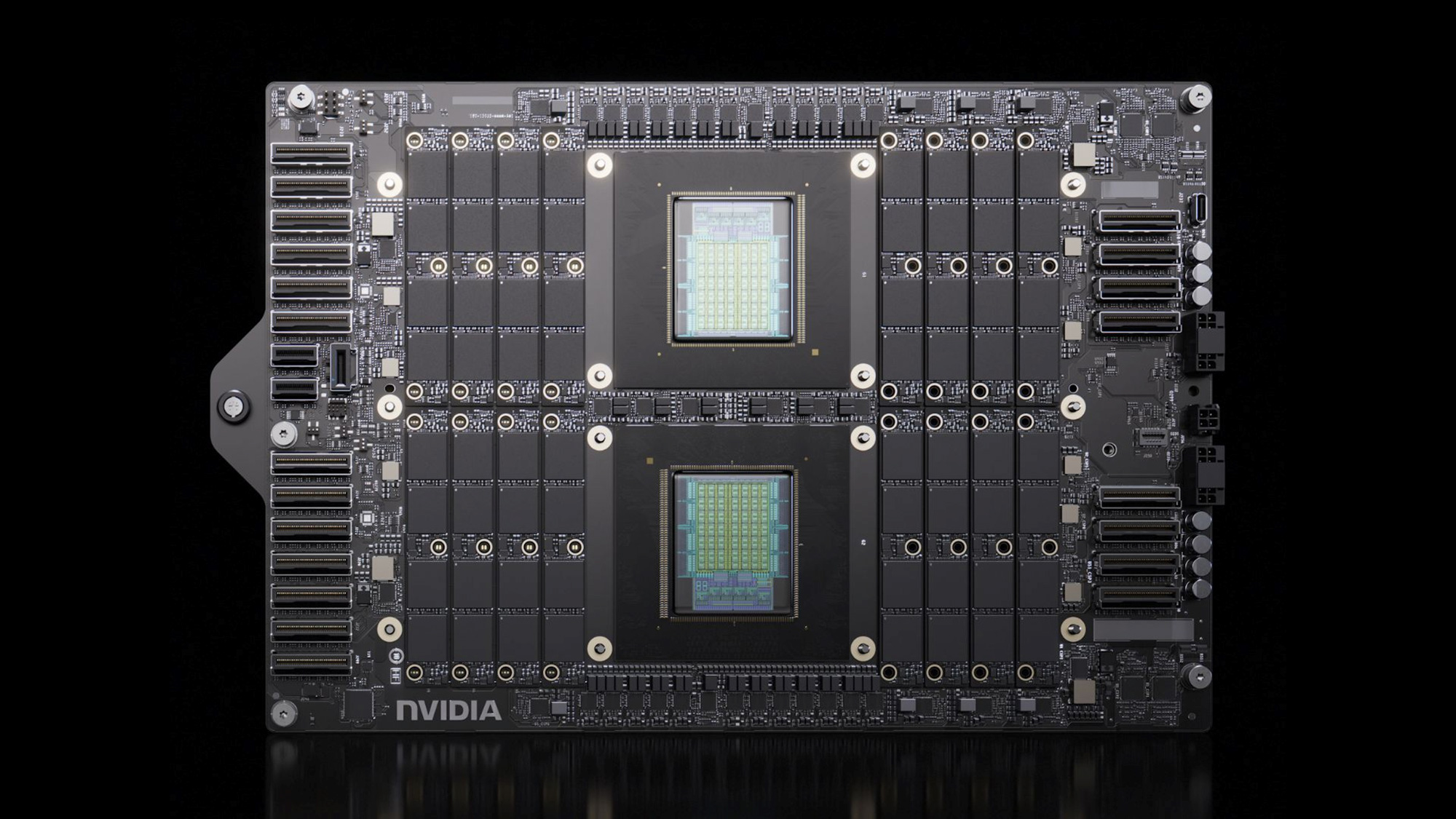

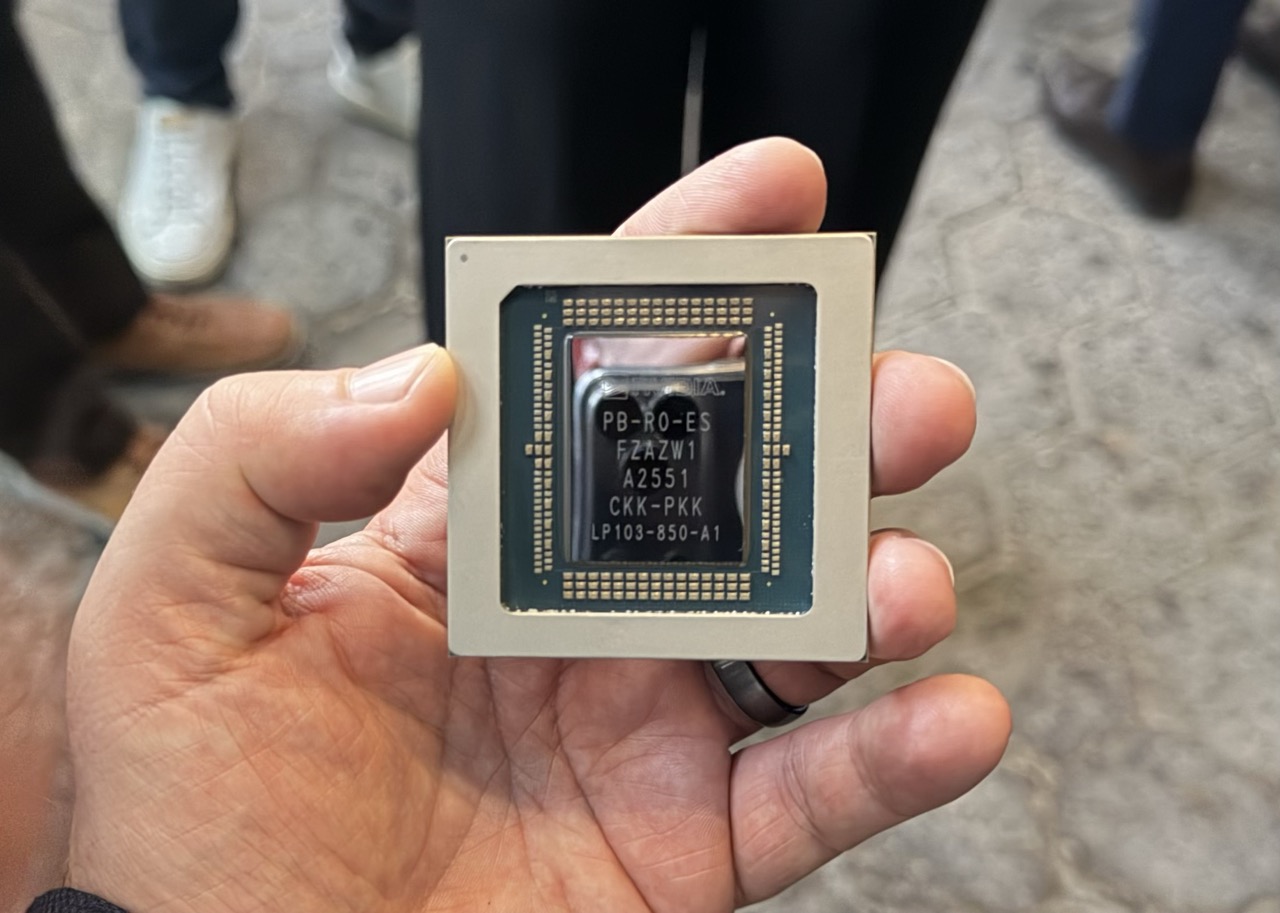

Mercedes-Benz Taiwan has announced that it is integrating Nvidia's Alpamayo platform into its new CLA. The move underscores the company's commitment to technological innovation in the automotive sector and paves the way for future expansion in...