A New Frontier for Panel Manufacturers

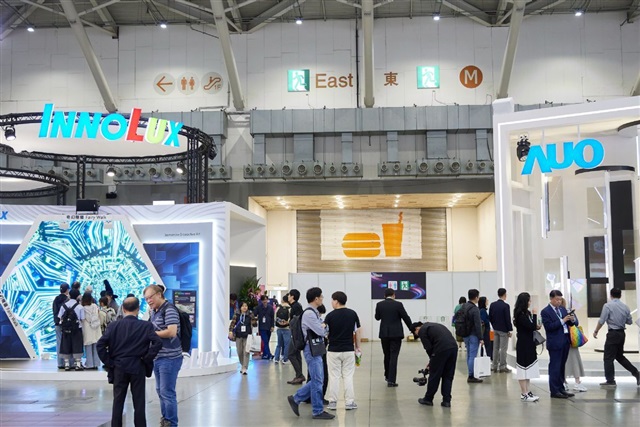

Companies across the technology sector are continually looking for new markets in which to apply their existing expertise. In this context, two leading Taiwanese panel manufacturers have announced a strategic entry into the semiconductor packaging sector. The diversification is not coincidental: it reflects an evaluation of market trends and of the growing demands of the chip industry, particularly for high-performance applications such as Large Language Models (LLMs).

The move by these display manufacturing giants underscores a convergence between seemingly distinct sectors. Their expertise in large-area processing and precision manufacturing, typical of panel fabrication, proves to be a valuable asset for advanced semiconductor packaging techniques, which require high accuracy and large-scale production capabilities.

CPO and FOPLP: Keys to Packaging Innovation

At the core of this transition are two emerging technologies: Co-Packaged Optics (CPO) and Fan-Out Panel Level Packaging (FOPLP). CPO places optical transceivers in the same package as the processing chip, whether that chip is a CPU or a GPU. Because signals travel a much shorter electrical path before being converted to light, the approach aims to overcome the limitations of traditional electrical interconnects by reducing power consumption, increasing bandwidth, and lowering latency. LLM workloads move enormous amounts of data between compute units and memory, so CPO is seen as an important enabler of higher performance and scalability for them.

In parallel, FOPLP is a packaging technique that moves the packaging process from round wafers to larger rectangular panels, similar to those used for displays. A rectangular panel offers more usable area per processing pass and wastes less of it at the edges, which promises better manufacturing efficiency and lower per-chip costs, especially for large packages or packages that integrate multiple dies (chiplets). FOPLP can also support higher integration density and better thermal management, both critical for the increasingly powerful and complex AI accelerators used in large-scale LLM inference and training.
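The area argument can be made concrete with a rough count of how many square packages fit on a round wafer versus a rectangular panel. The sketch below is illustrative only: the 300 mm wafer diameter is standard, but the 510 mm x 515 mm panel and the 50 mm package size are assumed figures chosen for the example, and the function names are hypothetical. It ignores edge exclusion zones, spacing between packages, and yield, and it uses a simple centered grid on the wafer rather than an optimized layout.

```python
import math


def packages_on_panel(panel_w_mm: float, panel_h_mm: float, pkg_mm: float) -> int:
    """Count square packages of side pkg_mm that tile a rectangular panel."""
    return int(panel_w_mm // pkg_mm) * int(panel_h_mm // pkg_mm)


def packages_on_wafer(wafer_diameter_mm: float, pkg_mm: float) -> int:
    """Count square packages that lie fully inside a circular wafer.

    Uses a grid centered on the wafer (simple, not an optimal placement).
    """
    radius = wafer_diameter_mm / 2
    n = int(wafer_diameter_mm // pkg_mm)  # grid cells along each axis
    offset = n * pkg_mm / 2               # centers the grid on the origin
    count = 0
    for i in range(n):
        for j in range(n):
            x0 = -offset + i * pkg_mm
            y0 = -offset + j * pkg_mm
            corners = [
                (x0, y0),
                (x0 + pkg_mm, y0),
                (x0, y0 + pkg_mm),
                (x0 + pkg_mm, y0 + pkg_mm),
            ]
            # A package counts only if all four corners are on the wafer.
            if all(math.hypot(x, y) <= radius for x, y in corners):
                count += 1
    return count


if __name__ == "__main__":
    # Assumed, illustrative dimensions (not taken from the article).
    pkg = 50.0
    print("wafer:", packages_on_wafer(300.0, pkg))
    print("panel:", packages_on_panel(510.0, 515.0, pkg))
```

Under these assumptions the panel holds several times as many large packages per pass as the wafer, and the gap widens as packages get larger, because a circle wastes proportionally more area around big squares.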

Impact on On-Premise Infrastructure and TCO

These innovations in semiconductor packaging have direct and significant implications for organizations evaluating or managing on-premise deployments of AI infrastructure. The adoption of CPO and FOPLP translates into higher-performing and more energy-efficient hardware. GPUs and AI accelerators benefiting from these technologies can offer greater throughput and lower latency, crucial aspects for running LLMs in self-hosted environments.

For CTOs, DevOps leads, and infrastructure architects, this means access to hardware that can lower the Total Cost of Ownership (TCO). Better energy efficiency reduces long-term operating costs, while higher performance per compute unit allows more complex workloads to run in a potentially smaller physical footprint. The ability to integrate more functions into a single package, thanks to FOPLP, can also simplify system design and maintenance, which contributes to robustness and reliability in air-gapped environments or those with stringent data sovereignty requirements.
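The link between energy efficiency and TCO can be sketched as a simple calculation: purchase cost plus the energy bill over the hardware's service life. Every number and name below is a hypothetical placeholder rather than a figure from the article, and the model is deliberately minimal, assuming a constant power draw, a fixed electricity price, and a single facility overhead factor (PUE).

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(it_power_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for hardware drawing it_power_kw continuously.

    pue scales IT power up to total facility power (cooling, distribution).
    """
    return it_power_kw * pue * HOURS_PER_YEAR * price_per_kwh


def total_cost_of_ownership(
    capex: float,
    it_power_kw: float,
    pue: float,
    price_per_kwh: float,
    years: int,
    annual_other_opex: float = 0.0,
) -> float:
    """Capex plus energy and other operating costs over the given years."""
    yearly = annual_energy_cost(it_power_kw, pue, price_per_kwh) + annual_other_opex
    return capex + years * yearly


if __name__ == "__main__":
    # Hypothetical comparison: same purchase price, 20% lower power draw.
    baseline = total_cost_of_ownership(100_000, 10.0, 1.5, 0.10, years=3)
    efficient = total_cost_of_ownership(100_000, 8.0, 1.5, 0.10, years=3)
    print(f"baseline:  {baseline:,.0f}")
    print(f"efficient: {efficient:,.0f}")
    print(f"saving:    {baseline - efficient:,.0f}")
```

A real evaluation would also account for utilization, depreciation, staffing, and the throughput delivered per watt, so the sketch shows only the direction of the effect, not its size.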

Future Outlook and Deployment Strategies

The entry of panel manufacturers into the semiconductor packaging sector is not just market news but an indicator of the direction the entire hardware industry is moving. The pursuit of more efficient, powerful, and scalable solutions for data processing is relentless, driven by the exponential demand generated by LLMs and other artificial intelligence applications.

For companies facing the choice between cloud and on-premise deployments, the evolution of these packaging technologies strengthens the argument for self-hosted solutions. More advanced and optimized hardware makes it possible to build local infrastructure that can compete on performance and TCO while offering greater control over data sovereignty and compliance. AI-RADAR, for instance, provides analytical frameworks on /llm-onpremise to evaluate the specific trade-offs associated with these strategic decisions, highlighting how silicon-level innovation is a fundamental pillar for the future of enterprise AI.
