ASE Boosts Investments for the Future of AI

ASE (Advanced Semiconductor Engineering), a leading provider of semiconductor assembly and test services, has announced that it will raise its capital expenditure (CapEx) for 2026 to a record $8.5 billion. The move responds directly to strong and growing demand for advanced packaging, a technology segment that is becoming increasingly important for artificial intelligence and Large Language Model (LLM) workloads.

The investment signals that ASE expects continued growth in the AI sector, where the processing power and energy efficiency of chips depend heavily on interconnection and integration technologies. For companies evaluating on-premise LLM deployments, the announcement is a reminder to monitor upstream investments in the semiconductor supply chain, because they directly influence the availability and cost of high-performance hardware.

The Strategic Role of Advanced Packaging in the AI Era

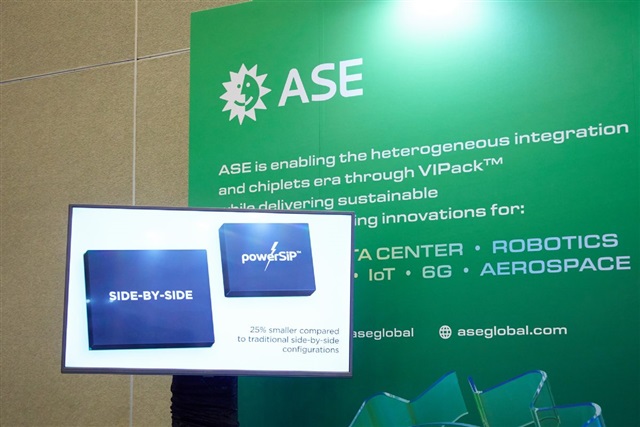

Advanced packaging is no longer just a final step in chip manufacturing; it has become a critical enabler for modern hardware architectures, especially those designed for AI. Technologies like 2.5D and 3D packaging integrate multiple dies (chips) into a single package, which lets designers keep scaling performance even as transistor scaling in the sense of Moore's Law slows and a single die can only grow so large. This is fundamental for GPUs and AI accelerators, where the proximity between computing logic and high-bandwidth memory (HBM) is essential to minimize latency and maximize throughput.

Efficient packaging also improves heat dissipation, an important consideration for AI chips that operate at high power. In addition, it enables high-speed interconnections between components such as computing units and VRAM modules, which substantially improves overall performance. ASE's investment suggests that the company expects continued innovation and a growing need for these solutions to support increasingly complex and data-intensive AI models.
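To make the link between memory bandwidth and throughput concrete, here is a minimal sketch of a commonly used back-of-the-envelope estimate: during single-stream text generation, each new token requires reading roughly all model weights from memory once, so memory bandwidth caps the token rate. The function name and every number in the example are illustrative assumptions, not figures from ASE or any specific product, and the estimate ignores batching, caching, and compute limits.

```python
def decode_tokens_per_second(params_billion: float,
                             bytes_per_param: float,
                             bandwidth_gb_s: float) -> float:
    """Rough upper bound on single-stream decode rate.

    Assumes every generated token reads all weights once from memory,
    so the rate is limited by bandwidth divided by model size.
    """
    model_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_gb_s * 1e9 / model_bytes


if __name__ == "__main__":
    # Hypothetical 70B-parameter model stored in 16-bit weights (2 bytes each),
    # compared across two hypothetical memory bandwidths.
    for bandwidth in (1000, 3000):  # GB/s, illustrative values only
        rate = decode_tokens_per_second(70, 2, bandwidth)
        print(f"{bandwidth} GB/s -> at most {rate:.1f} tokens/s")
```

Under these assumptions, tripling the memory bandwidth triples the ceiling on generation speed, which is why packaging that places HBM close to the compute die matters so much for AI accelerators.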

Implications for On-Premise Infrastructure and TCO

For organizations that deploy LLMs and other AI applications on self-hosted or air-gapped infrastructure, investments in advanced packaging have direct implications. More production capacity and continued innovation in this field could translate into better availability of higher-performing GPUs and AI accelerators and, in the long term, a lower Total Cost of Ownership (TCO) for the infrastructure. The ability to acquire cutting-edge hardware is a key element in staying competitive while keeping data under the organization's own control.
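As a rough illustration of how hardware pricing feeds into TCO, the sketch below adds up purchase cost, energy, and operations over the life of a deployment. The cost model, the function name, and all input values are simplifying assumptions for illustration only; a real TCO analysis would also account for items such as depreciation, cooling overhead, networking, and staffing.

```python
def on_prem_tco(hardware_cost: float,
                power_kw: float,
                price_per_kwh: float,
                years: float,
                annual_ops_cost: float,
                utilization: float = 1.0) -> float:
    """Simplified TCO: purchase price + energy + operations.

    utilization is the assumed fraction of time the hardware draws power_kw.
    """
    hours = years * 365 * 24
    energy_cost = power_kw * utilization * hours * price_per_kwh
    return hardware_cost + energy_cost + annual_ops_cost * years


if __name__ == "__main__":
    # Hypothetical server: two purchase prices, everything else held constant.
    for price in (100_000, 80_000):
        total = on_prem_tco(price, power_kw=5, price_per_kwh=0.10,
                            years=3, annual_ops_cost=10_000)
        print(f"hardware {price:>7,} -> 3-year TCO {total:,.0f}")
```

In this simplified model the purchase price enters the total one-for-one, so any easing of hardware prices driven by added packaging capacity would show up directly in the result.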

Reliance on specialized components makes the supply chain a critical factor. Investment decisions by players like ASE can influence companies' ability to build and scale their local stacks for LLM inference and training. Those evaluating on-premise deployments should therefore consider not only immediate hardware specifications but also the stability and innovation capacity of the supply chain behind these components. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these complex trade-offs.

Future Prospects and Strategic Control

ASE's CapEx increase highlights an underlying trend in the semiconductor industry: AI is not only driving demand for chips but also redefining investment priorities across the entire value chain. The ability to efficiently produce and integrate high-performance components will be a key differentiator for hardware providers and, consequently, for companies that rely on such hardware for their AI strategies.

This scenario reinforces how important it is for businesses to have a clear view of their infrastructure and deployment needs. The choice between cloud and on-premise solutions for LLM workloads is increasingly shaped not only by cost and performance but also by the need for control, compliance, and data sovereignty. Investments in advanced packaging are a fundamental piece of this evolving ecosystem, helping to ensure that the hardware needed for next-generation AI is available to support these strategic decisions.
