ASPEED's Growth Driven by AI Servers

ASPEED, a key player in the semiconductor industry, is experiencing sustained growth, a trend directly linked to the surge in demand for dedicated artificial intelligence servers. This scenario not only consolidates the company's position but also strengthens the market outlook for Baseboard Management Controllers (BMCs), fundamental components for managing modern IT infrastructure. The expansion of the AI server market signals a clear direction towards the widespread adoption of complex workloads, which require increasingly robust and reliable hardware and management solutions.

The growing enterprise adoption of large language models (LLMs) and other artificial intelligence applications is driving the need for infrastructure capable of supporting large-scale training and inference. This translates into increased demand for high-performance servers, often equipped with specialized GPUs and advanced management systems. In this context, the ability to efficiently monitor, control, and diagnose hardware becomes critical to maintaining uptime and optimizing resource use.

The Crucial Role of BMCs in the AI Ecosystem

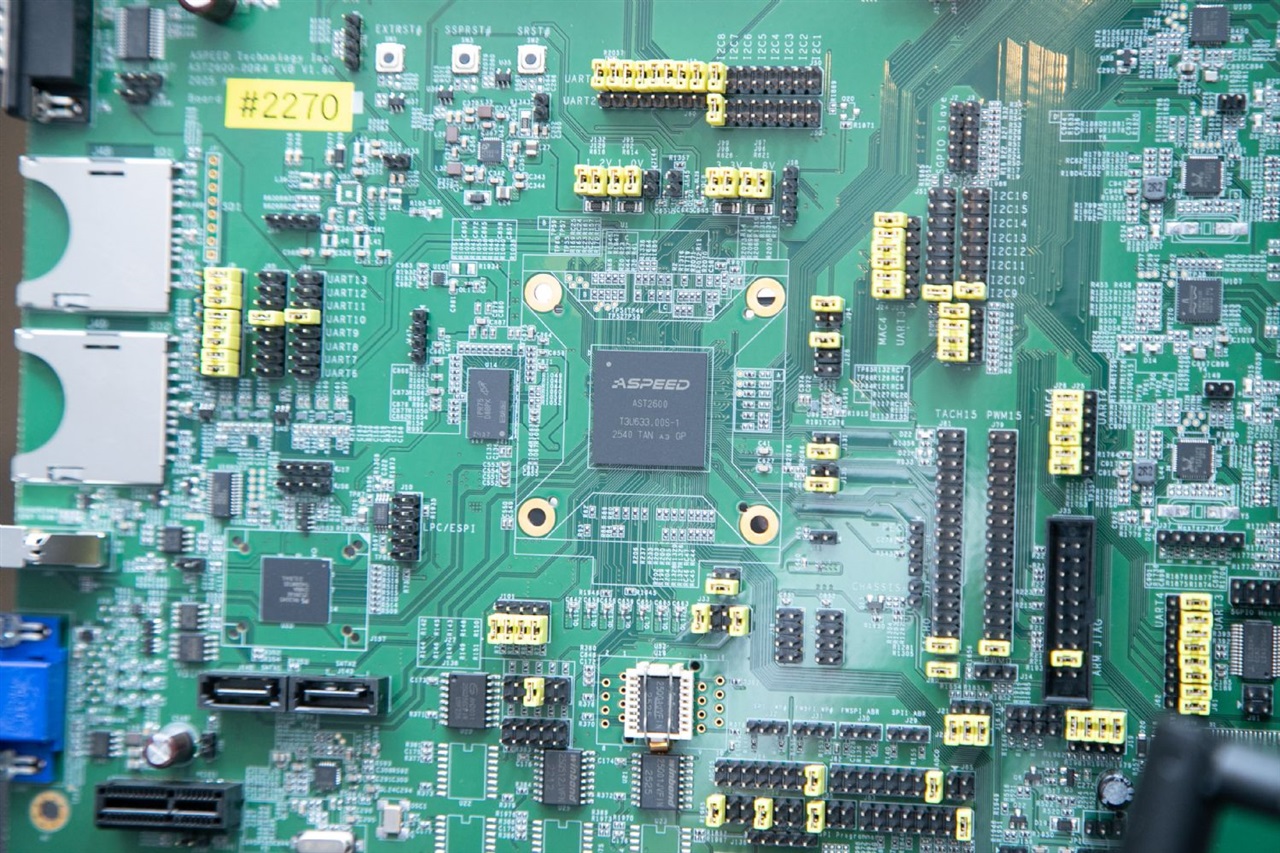

Baseboard Management Controllers (BMCs) are dedicated microcontrollers integrated into server motherboards, responsible for remote management and hardware monitoring. Their functions include power control, temperature monitoring, fan management, error diagnostics, and remote console access. Because a BMC runs independently of the host system, these functions remain available even when the main server is powered off or unresponsive. In an AI infrastructure, where servers often operate under intense and continuous loads, the reliability and remote management capabilities offered by BMCs are indispensable.

For data centers and high-performance computing environments, BMCs allow system administrators to maintain operations with minimal on-site intervention. This is particularly advantageous for large-scale deployments or installations in remote locations. Their importance is amplified by the complexity of AI servers, which integrate a large number of high-power components and generate significant heat. Constant monitoring and thermal management are therefore vital to preventing failures and extending hardware lifespan.
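As a rough illustration of the kind of thermal monitoring described above, the sketch below scans temperature readings and flags sensors that are close to their critical threshold. It assumes the BMC exposes readings in the shape of a DMTF Redfish `Thermal` resource (a `Temperatures` list with `Name`, `ReadingCelsius`, and `UpperThresholdCritical` fields); the article does not say which interface any given BMC uses, and the sample payload, the `find_hot_sensors` helper, and the 5 °C margin are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Optional

# Hypothetical sample shaped like a Redfish "Thermal" resource.
# Sensor names and values are invented purely for illustration.
SAMPLE_THERMAL: dict[str, Any] = {
    "Temperatures": [
        {"Name": "GPU0 Temp", "ReadingCelsius": 71.0, "UpperThresholdCritical": 90.0},
        {"Name": "GPU1 Temp", "ReadingCelsius": 86.5, "UpperThresholdCritical": 90.0},
        {"Name": "Inlet Temp", "ReadingCelsius": 24.0, "UpperThresholdCritical": None},
    ]
}


@dataclass
class ThermalAlert:
    sensor: str
    reading_c: float
    critical_c: float
    headroom_c: float  # degrees remaining before the critical threshold


def find_hot_sensors(thermal: dict[str, Any], margin_c: float = 5.0) -> list[ThermalAlert]:
    """Return sensors whose reading is within margin_c of the critical threshold."""
    alerts: list[ThermalAlert] = []
    for entry in thermal.get("Temperatures", []):
        reading: Optional[float] = entry.get("ReadingCelsius")
        critical: Optional[float] = entry.get("UpperThresholdCritical")
        # Skip sensors that report no reading or define no critical threshold.
        if reading is None or critical is None:
            continue
        headroom = critical - reading
        if headroom <= margin_c:
            alerts.append(
                ThermalAlert(entry.get("Name", "unknown"), reading, critical, headroom)
            )
    return alerts


if __name__ == "__main__":
    for alert in find_hot_sensors(SAMPLE_THERMAL):
        print(f"{alert.sensor}: {alert.reading_c} C, {alert.headroom_c} C below critical")
```

In a real deployment the payload would come from the BMC over the network rather than from a constant, and the alerting margin would be tuned per sensor; the parsing logic is kept separate here so it can be checked without hardware.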

Implications for On-Premise Deployments

The drive towards AI servers and the importance of BMCs have significant repercussions for organizations choosing on-premise or self-hosted deployment strategies. Opting for local infrastructure offers advantages in terms of data sovereignty, security, and direct control over the operating environment, all of which are crucial for regulated industries or companies with specific compliance requirements. However, managing these infrastructures requires appropriate tools and expertise, and BMCs represent a cornerstone of this management capability.

For those evaluating on-premise deployments, there are significant trade-offs between initial costs (CapEx), scalability, and operational control. A well-managed self-hosted infrastructure, supported by robust BMCs, can offer a competitive Total Cost of Ownership (TCO) in the long term, reducing reliance on external cloud services and allowing for air-gapped environments where necessary. AI-RADAR offers analytical frameworks on /llm-onpremise to delve deeper into these evaluations and help companies navigate the complexities of deployment decisions.
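The CapEx versus long-term TCO trade-off can be made concrete with a simple linear model. The sketch below is a deliberately simplified illustration, not a method taken from the article or from AI-RADAR: it assumes on-premise cost is an upfront CapEx plus a flat annual operating cost, that the cloud alternative is a flat annual cost, and it ignores depreciation, hardware refresh, and utilization. The function names are hypothetical, and the figures in the usage lines are placeholders rather than real prices.

```python
from typing import Optional


def on_prem_tco(capex: float, annual_opex: float, years: float) -> float:
    """Total on-premise cost: upfront CapEx plus flat yearly operating cost."""
    return capex + annual_opex * years


def cloud_tco(annual_cost: float, years: float) -> float:
    """Total cloud cost under a flat yearly spend assumption."""
    return annual_cost * years


def break_even_years(
    capex: float, annual_opex: float, cloud_annual_cost: float
) -> Optional[float]:
    """Years after which on-premise becomes cheaper than cloud.

    Returns None when the cloud option is never more expensive per year,
    in which case the upfront CapEx is never recovered under this model.
    """
    yearly_savings = cloud_annual_cost - annual_opex
    if yearly_savings <= 0:
        return None
    return capex / yearly_savings


if __name__ == "__main__":
    # Placeholder figures chosen only to exercise the formulas.
    capex, opex, cloud = 400_000.0, 100_000.0, 300_000.0
    print("break-even (years):", break_even_years(capex, opex, cloud))
    print("5-year on-prem TCO:", on_prem_tco(capex, opex, 5))
    print("5-year cloud TCO:", cloud_tco(cloud, 5))
```

The point of the model is the shape of the comparison: on-premise only wins if the yearly savings over cloud are positive and the planning horizon exceeds the break-even point.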

Future Outlook and Technological Challenges

The AI server market is poised for further expansion, driven by continuous innovation in artificial intelligence models and their integration into a growing number of sectors. This growth will lead to persistent demand for specialized hardware and advanced management solutions. Companies like ASPEED, which provide critical components such as BMCs, are strategically positioned to benefit from this trend.

Future challenges include managing increasing compute density, optimizing energy consumption, and ensuring maximum reliability in increasingly complex environments. The evolution of BMCs will need to keep pace with these demands, offering more sophisticated functionalities for predictive monitoring, security, and automation. The ability to provide resilient and manageable AI infrastructure will be a decisive factor for the success of enterprise artificial intelligence strategies.
