Auras and Nvidia Vera Rubin Project Changes: A Sign of Resilience

Auras, a Taiwanese supplier of thermal-management components, recently said that the gold-plating changes made to Nvidia's Vera Rubin project will not affect its operations. The statement comes during a period of strong growth for the company, which has reported significant increases in both revenue and profit. The news, reported by DIGITIMES, offers insight into how supply chains in the artificial intelligence hardware sector work.

The context is one of a rapidly evolving industry where the availability and stability of components are critical factors. For companies planning Large Language Model (LLM) deployments and other AI workloads on-premise, certainty in hardware supply is fundamental to ensuring operational continuity and cost predictability.

The Significance of the Changes and Technical Implications

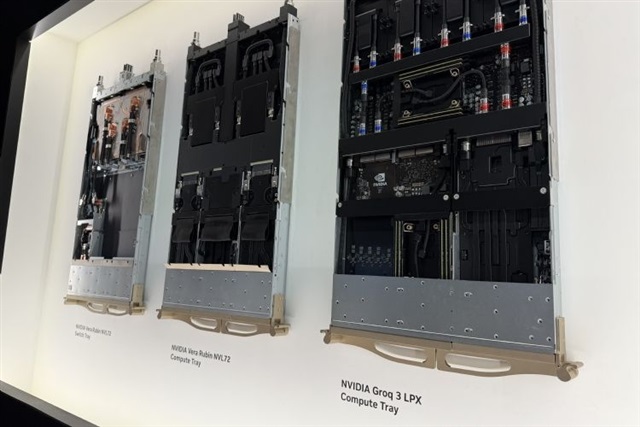

Specific details of the "gold-plating" changes to the Nvidia Vera Rubin project have not been disclosed, but their potential impact can be put in context. In high-performance electronics, "gold-plating" means depositing a thin layer of gold on metal surfaces, such as electrical contacts or printed circuit boards, to improve conductivity, corrosion resistance, and durability. A change to this process could reflect several strategies: cost optimization, a response to material availability, or a design change driven by specific performance requirements.

Nvidia Vera Rubin is the platform Nvidia has announced as the successor to its Blackwell generation, pairing the Vera CPU with Rubin GPUs. Platforms of this class underpin both LLM training and inference and require components built to the highest standards of quality and precision. If Auras can absorb such changes without interrupting operations, as it says it can, that points to robust supply chain management and manufacturing flexibility, both essential in so dynamic a market.

Operational Resilience and TCO Impact for On-Premise Deployments

Auras's expressed confidence regarding the continuity of its operations, despite Nvidia's changes, is a reassuring signal for the entire ecosystem. For organizations investing in self-hosted AI infrastructure, the stability of the hardware supply chain is directly related to the Total Cost of Ownership (TCO). Interruptions or delays in the supply of critical components can generate significant additional costs, both in terms of CapEx and OpEx, due to downtime or the need to source more expensive alternatives.
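To make the TCO argument concrete, here is a minimal Python sketch of how a supply disruption can be added to a simple, undiscounted TCO estimate. The model, the `DeploymentCosts` class, the function names, and every figure in the example are hypothetical illustrations, not data from Auras, Nvidia, or DIGITIMES. A real TCO analysis would also account for discounting, depreciation, and utilization.

```python
from dataclasses import dataclass


@dataclass
class DeploymentCosts:
    """Hypothetical cost inputs for an on-premise AI cluster (illustrative only)."""
    capex: float        # upfront hardware purchase
    annual_opex: float  # yearly power, cooling, staffing, and maintenance
    years: int          # planning horizon in years


def tco(costs: DeploymentCosts) -> float:
    """Simplified, undiscounted TCO: CapEx plus OpEx over the planning horizon."""
    return costs.capex + costs.annual_opex * costs.years


def tco_with_supply_disruption(
    costs: DeploymentCosts,
    delay_days: int,
    daily_delay_cost: float,
    sourcing_premium: float,
) -> float:
    """Hypothetical model of a component shortage layered on top of the base TCO.

    delay_days: days the planned hardware is unavailable
    daily_delay_cost: OpEx-side cost per day of delay (e.g. idle staff, interim cloud spend)
    sourcing_premium: extra CapEx fraction paid for alternative parts (0.10 means +10%)
    """
    extra_capex = costs.capex * sourcing_premium
    extra_opex = delay_days * daily_delay_cost
    return tco(costs) + extra_capex + extra_opex


if __name__ == "__main__":
    # All figures below are invented for illustration.
    base = DeploymentCosts(capex=1_000_000, annual_opex=200_000, years=3)
    print("baseline TCO:", tco(base))
    print("with disruption:", tco_with_supply_disruption(base, 30, 5_000, 0.10))
```

With these made-up inputs, a 30-day delay at 5,000 per day plus a 10% sourcing premium raises the three-year estimate from 1,600,000 to 1,850,000. The split into a CapEx term and an OpEx term mirrors the two cost channels the paragraph describes.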

Auras's jump in revenue and profit reflects the strong demand for components and services related to AI infrastructure. This trend is driven by the increasing adoption of AI solutions across various sectors, prompting companies to carefully evaluate deployment options, including on-premise solutions, for reasons of data sovereignty, compliance, and direct control over computational resources.

Outlook for the AI Ecosystem and Deployment Decisions

The episode involving Auras and Nvidia Vera Rubin highlights the importance of reliable partners and resilient supply chains for anyone planning or expanding their AI infrastructure. A component supplier's ability to adapt to technical changes without impacting production is a key indicator of its long-term reliability.

For CTOs, DevOps leads, and infrastructure architects evaluating self-hosted alternatives versus cloud solutions for AI/LLM workloads, hardware supply chain stability is a decisive factor. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate the trade-offs between different deployment strategies, considering aspects such as TCO, data sovereignty, and concrete hardware specifications. The continuous evolution of the AI hardware landscape requires careful planning and a deep understanding of market and supply dynamics.
