The Challenge of Long Contexts in LLMs

Large Language Model (LLM) training is increasingly moving toward tasks that require extremely long contexts, where sequences can exceed 100,000 tokens. At these scales, Out-Of-Memory (OOM) errors arise frequently, even when more devices are added under conventional training techniques such as ZeRO or FSDP. Sequence Parallelism (SP), which partitions input tokens across devices, is an increasingly adopted parallel training technique that addresses this problem and enables long-context training on a growing number of GPUs.
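As a rough illustration of what partitioning input tokens across devices means, the sketch below splits one sequence into contiguous per-device chunks. It is a toy in plain Python, not AutoSP code: the helper name `shard_sequence` and the requirement that the length divide evenly are assumptions made for the example.

```python
def shard_sequence(token_ids, sp_size):
    """Split one sequence into sp_size contiguous, equal-length chunks.

    Hypothetical helper for illustration: chunk r is what device (rank) r
    would hold under sequence parallelism.
    """
    if len(token_ids) % sp_size != 0:
        # Assumption for this sketch: callers pad the sequence beforehand.
        raise ValueError("sequence length must be divisible by sp_size")
    chunk = len(token_ids) // sp_size
    return [token_ids[r * chunk:(r + 1) * chunk] for r in range(sp_size)]


tokens = list(range(16))                 # stand-in for a 16-token input
shards = shard_sequence(tokens, sp_size=4)
for rank, shard in enumerate(shards):
    print(f"rank {rank} holds tokens {shard}")
```

Each device then stores activations for only its own chunk, which is why the trainable context length can grow with the number of devices.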

However, implementing Sequence Parallelism is notoriously difficult. It requires invasive code changes to existing libraries such as DeepSpeed or HuggingFace. These changes typically involve partitioning input token contexts and intermediate activations, inserting communication collectives, and overlapping communication with computation, and all of this must be done for both the forward and backward passes. As a result, researchers who want to experiment with long-context capabilities spend significant effort engineering the systems stack, and then repeat that effort for each hardware vendor.

AutoSP: A Compiler-Based Solution

To avoid this complexity, AutoSP has been introduced: a fully automated compiler-based solution. AutoSP automatically converts easy-to-write standard training code into multi-GPU sequence parallel code that efficiently uses GPUs to train on longer input contexts while composing with existing parallel strategies such as ZeRO. This avoids the cumbersome need for developers to repeatedly modify training pipelines for long-context training.

Users can now import AutoSP and compile arbitrary models with the AutoSP backend, which puts long-context training within reach of anyone. Moreover, because the technology is embedded in the compiler, the approach is performance-portable: highly performant SP can be realized on diverse hardware. A key design philosophy of AutoSP is simplicity: it hides most of the complexity of programming multiple GPUs from users. To that end, AutoSP is implemented within DeepCompile, a compiler ecosystem within DeepSpeed.

Technical Details and Performance Evaluation

AutoSP transforms user code to enable longer-context training. The specific SP strategy it targets is DeepSpeed-Ulysses, chosen because its communication overhead remains constant as GPU counts increase on NVLink or fat-tree network topologies. Note, however, that DeepSpeed-Ulysses can only scale the SP size up to the number of heads in a model (e.g., 32 in 7-8B models).
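The head-count limit follows from how DeepSpeed-Ulysses re-shards data around attention: an all-to-all exchange turns a layout that is split along the sequence into one that is split along the heads, so each device needs at least one head. The plain-Python simulation below sketches that exchange on labelled placeholders. The function name `ulysses_all_to_all` is hypothetical, and the check that the SP size evenly divides the head count is an assumption of this sketch rather than something stated above.

```python
def ulysses_all_to_all(per_rank, num_heads):
    """Simulate the re-sharding step on nested lists.

    Input:  per_rank[r][t][h], where rank r holds its sequence chunk for ALL heads.
    Output: out[r][t][h],      where rank r holds the FULL sequence for its own heads.
    """
    sp_size = len(per_rank)
    if num_heads % sp_size != 0:
        # Assumption of this sketch: heads are split evenly across ranks,
        # which also means sp_size can never exceed num_heads.
        raise ValueError("sp_size must divide the number of heads")
    heads_per_rank = num_heads // sp_size
    out = []
    for dst in range(sp_size):
        lo, hi = dst * heads_per_rank, (dst + 1) * heads_per_rank
        full_seq = []
        for src in range(sp_size):           # visit source ranks in sequence order
            for token in per_rank[src]:
                full_seq.append(token[lo:hi])  # keep only dst's head slice
        out.append(full_seq)
    return out


sp_size, num_heads, seq_len = 2, 4, 6
chunk = seq_len // sp_size
# Label every activation with its (token index, head index).
per_rank = [
    [[(t, h) for h in range(num_heads)] for t in range(r * chunk, (r + 1) * chunk)]
    for r in range(sp_size)
]
out = ulysses_all_to_all(per_rank, num_heads)
print(out[0][0])   # heads held by rank 0 for token 0
print(len(out[1])) # rank 1 now sees every token in the sequence
```

After the exchange, every device can run ordinary attention over the whole sequence for its own subset of heads; a second all-to-all restores the sequence-sharded layout afterwards.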

AutoSP additionally applies a custom Activation Checkpointing (AC) strategy tailored to long-context modeling, named Sequence-aware AC (SAC). This technique releases the intermediate activations of cheap-to-compute operators and recomputes them in the backward pass when the relevant gradients need them. While PyTorch 2.0 introduced an automated AC formulation based on max-flow min-cut, it was found to be overly conservative for long-context modeling. SAC instead exploits the FLOP dynamics unique to long contexts. When enabled (the default setting in AutoSP), this strategy marginally reduces training throughput, but without it, training on longer contexts would be infeasible. Users can choose to enable this pass only for configurations that would otherwise run out of memory.
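The general idea of releasing a cheap activation and recomputing it in the backward pass can be sketched in a few lines of plain Python. This is a generic activation-checkpointing toy, not the SAC pass itself: the one-layer model (a ReLU followed by a weighted sum) and all function names are assumptions chosen for illustration.

```python
def relu(xs):
    """A cheap-to-compute operator whose output we may choose not to keep."""
    return [max(0.0, x) for x in xs]


def forward_store(x, w):
    """Baseline: keep the intermediate activation h alive for the backward pass."""
    h = relu(x)
    y = sum(wi * hi for wi, hi in zip(w, h))
    return y, {"h": h}


def forward_recompute(x, w):
    """Checkpointed: release h and save only the input x, which the
    surrounding layer is assumed to keep anyway."""
    h = relu(x)
    y = sum(wi * hi for wi, hi in zip(w, h))
    return y, {"x": x}


def backward(saved, grad_y):
    """Gradient of y with respect to w; recompute h if it was released."""
    h = saved["h"] if "h" in saved else relu(saved["x"])
    return [grad_y * hi for hi in h]


x = [-1.0, 2.0, 3.0]
w = [0.5, 0.5, 0.5]
y_a, saved_a = forward_store(x, w)
y_b, saved_b = forward_recompute(x, w)
grad_a = backward(saved_a, grad_y=1.0)
grad_b = backward(saved_b, grad_y=1.0)
print(grad_a == grad_b)  # same gradients, one fewer stored activation
```

The trade is the one described above: the recomputing variate runs `relu` twice, costing some throughput, in exchange for not holding `h` in memory between the forward and backward passes.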

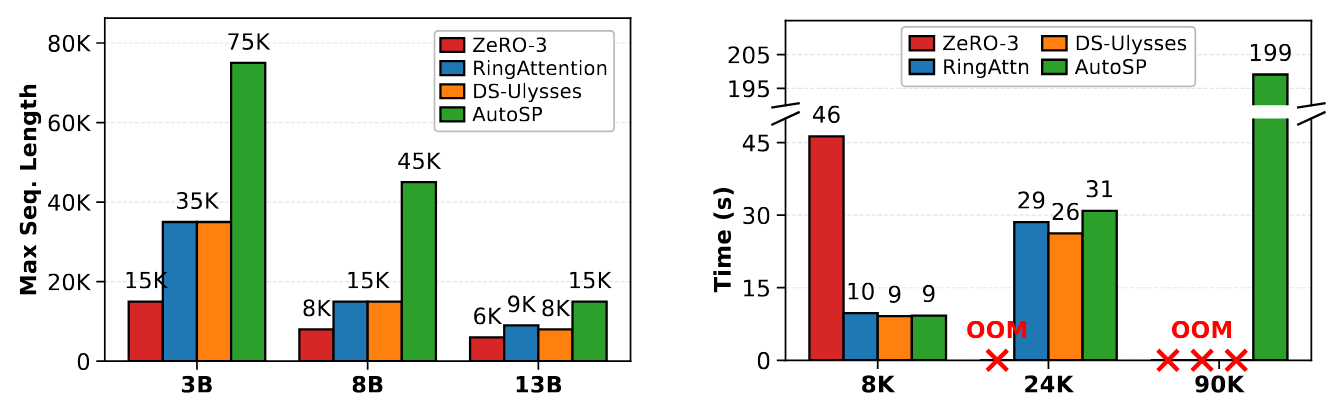

To demonstrate AutoSP's viability, its performance was evaluated on models of varying sizes using NVIDIA GPUs. Benchmarks were conducted on Llama 3.1 models on an 8x NVIDIA A100-80GB SXM node, using PyTorch 2.7 with CUDA 12.8. Results were compared against torch-compiled hand-written baselines of RingFlashAttention, DeepSpeed-Ulysses, and ZeRO-3. The tests showed that AutoSP not only increases the maximum trainable sequence length given the same resources but also achieves these benefits with little to no cost to runtime performance.

Implications and Limitations for On-Premise Deployments

For CTOs, DevOps leads, and infrastructure architects evaluating self-hosted alternatives for AI/LLM workloads, AutoSP represents a significant innovation. Simplifying long-context LLM training on existing multi-GPU infrastructure helps optimize resource utilization and contain the Total Cost of Ownership (TCO). In on-premise environments, where hardware is a fixed investment, maximizing the efficiency of each GPU, such as the NVIDIA A100-80GB SXM, becomes a decisive factor for the sustainability and scalability of AI projects. AI-RADAR provides analytical frameworks on /llm-onpremise to evaluate these trade-offs.

Despite its advantages, AutoSP has some limitations. First, it requires the user to compile the transformer as a single compilable artifact. PyTorch users who compile many functions individually and stitch them together into one model therefore cannot use AutoSP, because the compiler needs to see the entire model to shard input sequences correctly and propagate this information through the whole graph. Second, AutoSP disallows any graph breaks in compilable artifacts, since graph breaks complicate analysis and information propagation. Extending AutoSP to be resilient to graph breaks is left to future research. Despite these limitations, its integration with DeepSpeed broadens access to long-context LLM training, making it more manageable for organizations that aim to maintain control and data sovereignty.
