Cerebras Prepares for IPO with AI Chip Breakthrough

Cerebras, a company known for its specialized AI hardware, has formally begun the process for its Initial Public Offering (IPO). The move comes at a time of intense activity in the AI sector, driven by growing demand for specialized computing to develop and deploy Large Language Models (LLMs) and other artificial intelligence applications.

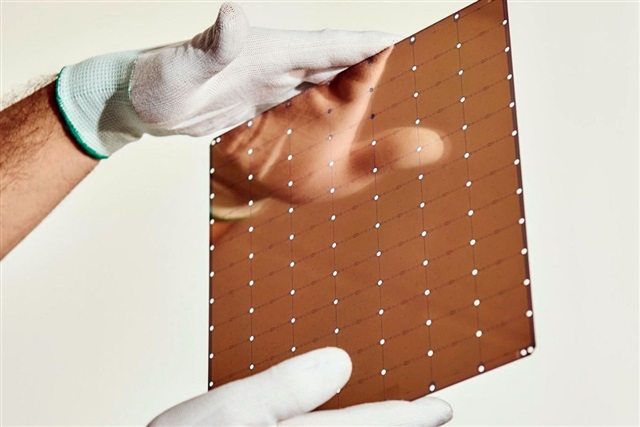

The IPO announcement is coupled with news of a significant innovation in AI chip technology, a critical element for companies seeking to optimize their infrastructure. For organizations evaluating on-premise deployment, access to cutting-edge hardware is essential for performance, energy efficiency, and control over their data, which are the aspects Cerebras aims to address with its solutions.

The Role of Specialized Architectures in On-Premise Deployment

Innovation in AI chips, such as the one announced by Cerebras, is particularly relevant for on-premise deployment strategies. Traditional GPU-based architectures, while powerful, can face limitations in interconnect bandwidth and memory management for large-scale AI workloads. Specialized chips are designed to overcome these hurdles, potentially offering higher throughput and lower latency, both critical for training and inference of complex LLMs.

For CTOs, DevOps leads, and infrastructure architects, selecting the right hardware is a strategic decision that directly impacts the Total Cost of Ownership (TCO) and future scalability. Solutions like those proposed by Cerebras can offer advantages in self-hosted or air-gapped environments, where data sovereignty and regulatory compliance are absolute priorities. The ability to manage large models with less hardware or greater efficiency can translate into significant operational savings and increased agility.
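To make the TCO comparison concrete, the sketch below adds up upfront hardware cost, energy cost, and other operating costs over a planning horizon. It is a deliberately simplified model under stated assumptions: the `HardwareOption` class, the `simple_tco` function, and every number in the usage example are hypothetical placeholders, not Cerebras or vendor figures, and real TCO analyses also account for depreciation, financing, cooling efficiency, and workload-specific throughput.

```python
from dataclasses import dataclass


@dataclass
class HardwareOption:
    """Hypothetical inputs for one hardware configuration (all values illustrative)."""
    name: str
    capex_usd: float        # upfront purchase and installation cost
    power_kw: float         # average power draw, including cooling overhead if known
    annual_opex_usd: float  # yearly support, staffing, and facility costs, excluding energy


def simple_tco(option: HardwareOption, years: int, price_per_kwh: float,
               utilization: float = 1.0) -> float:
    """Return a rough total cost of ownership over `years`.

    TCO = capex + energy cost + other operating costs.
    `utilization` is the fraction of hours the system draws `power_kw`.
    """
    hours = years * 365 * 24 * utilization
    energy_cost = option.power_kw * hours * price_per_kwh
    return option.capex_usd + energy_cost + option.annual_opex_usd * years


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not vendor figures.
    options = [
        HardwareOption("option_a", capex_usd=1_000_000, power_kw=20.0, annual_opex_usd=50_000),
        HardwareOption("option_b", capex_usd=1_500_000, power_kw=12.0, annual_opex_usd=40_000),
    ]
    for opt in options:
        tco = simple_tco(opt, years=3, price_per_kwh=0.15, utilization=0.7)
        print(f"{opt.name}: {tco:,.0f} USD over 3 years")
```

Dividing each result by the expected volume of work (for example, tokens served over the same period) turns this into a cost-per-unit figure, which is often a fairer basis for comparing architectures with very different throughput.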

The G42 Partnership and Its Impact on the Supply Chain

Another key element in Cerebras' announcement is its strategic partnership with G42, a global technology company. This collaboration is expected to reshape the supply chain for AI hardware, an increasingly important issue in a market characterized by high demand and, at times, limited availability of critical components such as advanced silicon.

Strategic partnerships play a fundamental role in ensuring the availability and distribution of innovative technologies. For companies planning significant investments in AI infrastructure, supply chain stability is a decisive factor. A collaboration like the one between Cerebras and G42 can help mitigate risks in the supply of specialized hardware, helping enterprises secure the resources they need for their artificial intelligence projects, whether in cloud or on-premise environments.

Future Outlook for the AI Hardware Market and Deployment

Cerebras' IPO and its AI chip innovation, supported by the G42 partnership, reflect the rapid evolution of the artificial intelligence hardware market. Companies are increasingly seeking solutions that offer a balance between performance, cost, and control, especially for the most demanding AI workloads. This scenario encourages the emergence of new architectures and business models.

For those evaluating on-premise deployment, Cerebras' offering fits into a broader context of hardware options that require careful trade-off analysis. Factors such as available VRAM, throughput, latency, and power requirements are essential for making informed decisions. AI-RADAR provides analytical frameworks on /llm-onpremise to evaluate these trade-offs, helping decision-makers navigate the complexities of self-hosted and cloud solutions for Large Language Models.
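As an example of the arithmetic behind the VRAM factor, the sketch below gives a common back-of-the-envelope estimate for serving a transformer LLM on GPU-style accelerators: memory for the weights plus memory for the key-value (KV) cache used during decoding. It is a rough rule of thumb under stated assumptions: it ignores activations, framework overhead, and memory fragmentation, the function names are hypothetical, the model shape in the usage example is illustrative rather than taken from any specific model, and it does not attempt to model Cerebras' own memory architecture.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the model weights, in GiB."""
    return num_params * bytes_per_param / 2**30


def kv_cache_gib(num_layers: int, num_kv_heads: int, head_dim: int,
                 seq_len: int, batch_size: int, bytes_per_elem: float) -> float:
    """Rough KV-cache size for transformer decoding, in GiB.

    The leading factor of 2 accounts for storing both keys and values
    for every layer, KV head, position, and sequence in the batch.
    """
    elems = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return elems * bytes_per_elem / 2**30


if __name__ == "__main__":
    # Hypothetical 70B-parameter model served in FP16 (2 bytes per value).
    weights = weight_memory_gib(70e9, 2)
    # Hypothetical architecture shape; check the real model config before relying on it.
    cache = kv_cache_gib(num_layers=80, num_kv_heads=8, head_dim=128,
                         seq_len=8192, batch_size=4, bytes_per_elem=2)
    print(f"weights ~ {weights:.1f} GiB, KV cache ~ {cache:.1f} GiB")
```

The KV-cache term grows linearly with context length and batch size, which is why the same model can fit comfortably for short interactive requests yet exceed available memory under long-context or high-concurrency workloads.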
