Taiwan's Expanding AI Server Market

Taiwan's artificial intelligence server sector is growing substantially, and the gains now extend beyond TSMC's traditional dominance in semiconductor manufacturing. This trend suggests that the economic and technological benefits of rising demand for AI infrastructure are spreading to a broader range of players within the Taiwanese ecosystem. For organizations planning to adopt AI solutions, the expansion is a key indicator of the availability and diversification of hardware options.

Demand for the computational capacity needed to train Large Language Models (LLMs) and run inference on them continues to drive investment in specialized hardware. Taiwan's ability to meet this need, not only through its chip foundries but also through its server and component manufacturers, is a critical factor for the global supply chain. This dynamic is particularly relevant for companies evaluating on-premise deployment strategies, where hardware selection and supply chain robustness are fundamental.

Diversification of the AI Supply Chain

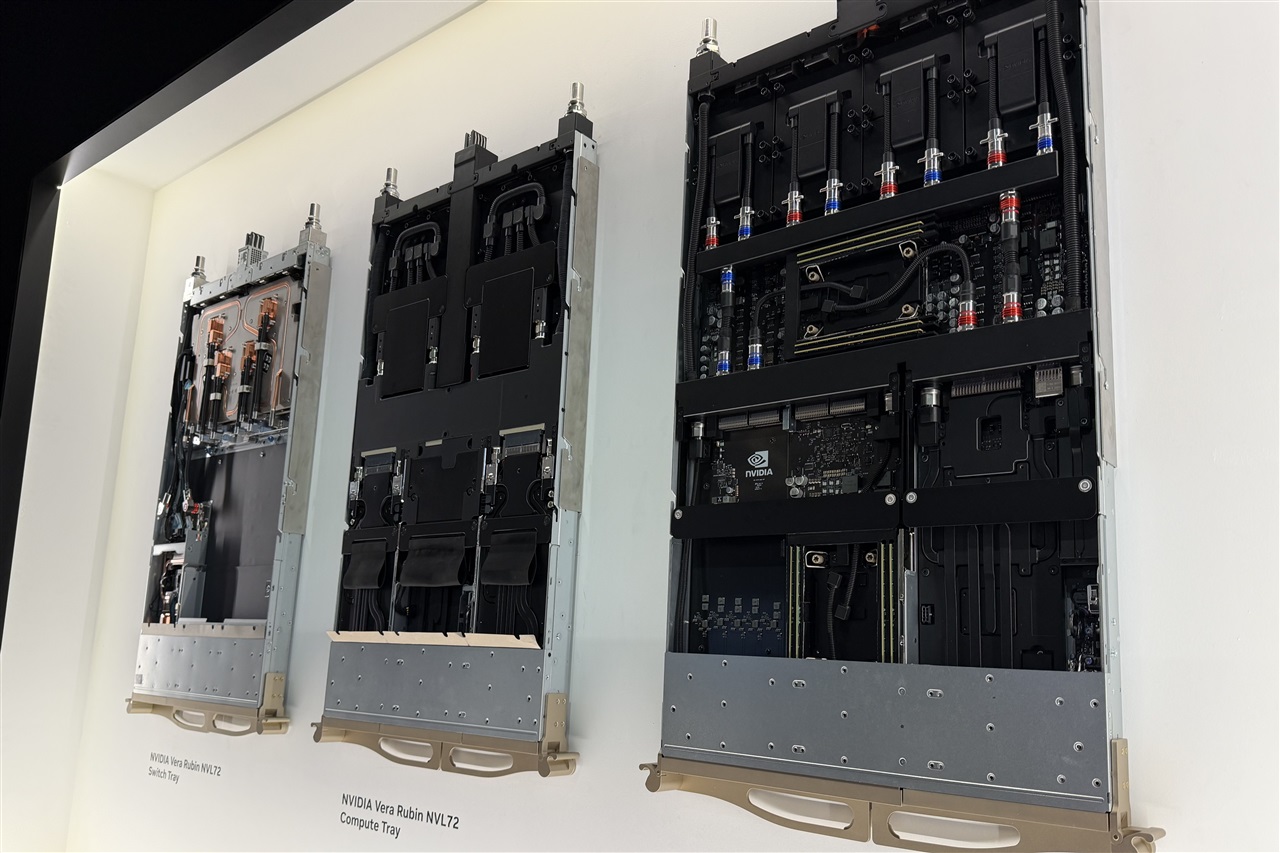

Traditionally, TSMC has been an indispensable pillar in the production of advanced chips, including those essential for AI. However, the news that gains in the AI server sector are extending beyond this single entity indicates a maturation and diversification of the supply chain. This could signify increased participation from Original Design Manufacturers (ODMs), motherboard manufacturers, cooling system providers, and other hardware specialists who contribute to the assembly and customization of AI servers.

For CTOs and infrastructure architects, a broader and more resilient supply chain translates into greater flexibility and potentially better conditions for hardware procurement. The ability to access a wider range of suppliers can mitigate risks associated with reliance on a single player and foster innovation through competition. This scenario is crucial for those planning long-term investments in AI infrastructure, where availability and scalability are absolute priorities.

Implications for On-Premise Deployments and TCO

The expansion of Taiwan's AI server market has direct implications for on-premise deployment strategies. Companies opting for self-hosted solutions for their LLM workloads require specific hardware, often characterized by GPUs with high VRAM and high-speed interconnects. The increased availability of diversified AI servers can make it easier to acquire systems optimized for inference or fine-tuning, allowing for greater customization based on specific performance requirements such as throughput and latency.
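To make the VRAM requirement concrete, here is a rough sizing sketch for inference. It uses the common back-of-the-envelope approximation that memory is dominated by the model weights plus the key/value cache. The function names, the model shape, and the precision choices are illustrative assumptions, not figures from any vendor or from this article; real deployments also need headroom for activations and framework overhead.

```python
# Rough inference memory estimate. All names and example shapes are hypothetical.

def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory for model weights in GB (1e9 bytes).

    1e9 parameters * bytes per parameter / 1e9 bytes per GB
    simplifies to params_billion * bytes_per_param.
    """
    return params_billion * bytes_per_param


def kv_cache_gb(layers: int, kv_heads: int, head_dim: int,
                seq_len: int, batch: int, bytes_per_value: int = 2) -> float:
    """Memory for the key/value cache in GB.

    The leading factor of 2 accounts for storing both keys and values
    for every layer, head, and token position.
    """
    total_bytes = (2 * layers * kv_heads * head_dim
                   * seq_len * batch * bytes_per_value)
    return total_bytes / 1e9


def total_gb(params_billion: float, bytes_per_param: float, **kv_shape) -> float:
    """Weights plus KV cache; excludes activations and runtime overhead."""
    return weights_gb(params_billion, bytes_per_param) + kv_cache_gb(**kv_shape)


if __name__ == "__main__":
    # Assumed example: a 7B-parameter model in 16-bit precision,
    # 32 layers, 32 KV heads of dimension 128, one 4096-token sequence.
    need = total_gb(7, 2, layers=32, kv_heads=32, head_dim=128,
                    seq_len=4096, batch=1)
    print(f"Estimated minimum VRAM: {need:.1f} GB")
```

Because the KV cache grows linearly with batch size and sequence length, throughput targets can drive the VRAM requirement as much as model size does.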

Evaluating the Total Cost of Ownership (TCO) becomes a decisive factor in this context. While the initial investment (CapEx) for on-premise hardware can be significant, long-term operational costs, including energy and maintenance, must be carefully balanced against cloud-based OpEx models. Data sovereignty and compliance requirements, especially in regulated sectors, often make air-gapped or self-hosted deployments a mandatory choice, and the availability of a robust hardware market is crucial to support such decisions. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs.
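The CapEx versus OpEx trade-off can be sketched as a simple break-even calculation. This is a minimal model under stated assumptions: costs are flat per month, the hardware has no resale value, and financing, depreciation, and staffing are ignored. The function names and all figures are hypothetical placeholders, not market data.

```python
# Simplified on-premise vs cloud break-even. All figures are hypothetical.
from typing import Optional


def cumulative_onprem(capex: float, onprem_monthly: float, months: float) -> float:
    """Up-front hardware cost plus recurring energy and maintenance."""
    return capex + onprem_monthly * months


def cumulative_cloud(cloud_monthly: float, months: float) -> float:
    """Pure OpEx model: recurring cloud spend only."""
    return cloud_monthly * months


def break_even_months(capex: float, onprem_monthly: float,
                      cloud_monthly: float) -> Optional[float]:
    """Months until on-premise becomes cheaper than cloud.

    Returns None when the cloud's monthly cost does not exceed the
    on-premise monthly cost, because the up-front investment is then
    never recovered under this model.
    """
    monthly_saving = cloud_monthly - onprem_monthly
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


if __name__ == "__main__":
    # Assumed inputs: 240,000 up front, 4,000 per month to operate,
    # versus 14,000 per month for equivalent cloud capacity.
    months = break_even_months(240_000, 4_000, 14_000)
    print(f"Break-even after {months} months")  # prints 24.0
```

A break-even point that falls well inside the expected hardware lifetime favors on-premise on cost alone; data sovereignty or compliance requirements may settle the decision regardless of this number.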

Future Outlook and Challenges for AI Infrastructure

The continued development of Taiwan's AI server market reflects a global trend towards intensified AI adoption across all sectors. However, this growth also brings significant challenges. The enormous energy consumption of AI data centers, the need for advanced cooling systems, and the complexity of managing heterogeneous hardware and software stacks are just some of the considerations companies must address.

Looking ahead, the Taiwanese ecosystem's ability to continue innovating and diversifying its offerings will be crucial. For technology decision-makers, understanding these market dynamics is essential for formulating infrastructure strategies that not only meet current needs but are also scalable and resilient in the face of rapidly evolving AI technologies. The choice between cloud and on-premise solutions will remain a central debate, with the availability of specialized hardware playing an increasingly important role.
