Legal Tensions Between Musk and OpenAI

The artificial intelligence landscape is often a stage for complex dynamics that play out not only in technological innovation but also on legal and strategic grounds. A recent development has shed new light on the tensions between Elon Musk and OpenAI, the organization he co-founded. Two days before the trial began, Musk attempted to open a dialogue about a possible settlement.

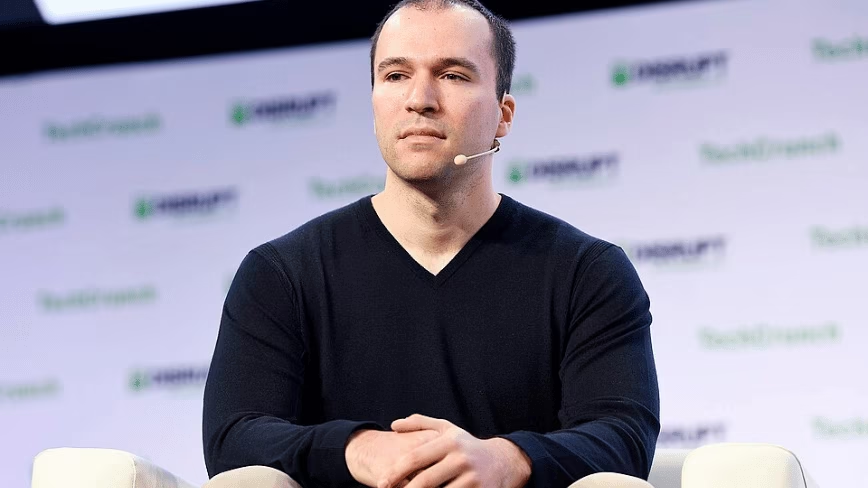

The exchange, which occurred via text messages with Greg Brockman, OpenAI co-founder, aimed to explore avenues for an out-of-court resolution. However, Brockman's proposal to drop all claims against the individuals involved in the dispute marked a turning point in the negotiations, leading to an unexpected reaction from Musk.

The Text Exchange and Its Implications

Elon Musk's response to Brockman's counter-proposal was direct and charged with tension. Musk replied that if Brockman insisted on his request, he and Sam Altman would become "the most hated men in America by the end of this week." This statement, followed by the assertion "If you insist, so it will be," underscores the depth of the conflict and the determination of the parties involved.

The entire exchange was made public through a court filing, offering insight into the personal and professional dynamics among key figures in the AI sector. Although these episodes have no direct bearing on specific deployment techniques or hardware, they reflect a climate of uncertainty that can influence the strategic decisions of companies relying on these players for their AI infrastructure.

Context and Impact on the AI Landscape

For CTOs, DevOps leads, and infrastructure architects, the stability and long-term vision of Large Language Model providers are critical factors. Legal disputes and internal turmoil among industry leaders can raise concerns regarding continuity of support, future model development, and the overall robustness of technological partnerships. This is particularly relevant for companies evaluating self-hosted or on-premise LLM deployments, where investments in hardware, licenses, and personnel are significant and long-term.

The choice of a technology partner for AI workloads, whether for inference or training, rests not solely on VRAM specifications or throughput but also on trust in leadership and corporate strategy. Episodes like the dispute between Musk and OpenAI highlight the need for thorough due diligence that goes beyond technical analysis and includes an assessment of the governance and organizational stability of AI solution providers.

Future Perspectives and Deployment Decisions

Internal dynamics and legal controversies among AI giants can have repercussions on the market, influencing risk perception and adoption strategies. For organizations aiming to maintain data sovereignty and control over their AI stacks through on-premise or air-gapped deployments, choosing a stable and predictable ecosystem is fundamental. Transparency regarding these dynamics, even if uncomfortable, is crucial for informed strategic planning.

AI-RADAR is committed to providing neutral analyses of the constraints and trade-offs associated with different deployment choices, without direct recommendations. For those evaluating on-premise deployments, analytical frameworks are available at /llm-onpremise that can help weigh not only total cost of ownership (TCO), covering both operational and capital costs, but also risk factors related to provider and market stability. These events underscore how the business and legal context is an integral part of the overall technological evaluation.
