Google Redefines AI Infrastructure with New TPUs and Arm-based Axion

Google recently drew the tech industry's attention at its annual Cloud Next conference in Las Vegas, unveiling two new proprietary AI accelerators. The move underscores a growing trend among tech giants to invest heavily in custom silicon, with the aim of optimizing performance and energy efficiency for artificial intelligence workloads.

The announcement is particularly significant because it introduces a dual approach: one accelerator is designed to speed up the training of Large Language Models (LLMs) and other complex AI models, while the other aims to drastically reduce the cost of model serving, or the inference phase. This distinction matters because hardware requirements and optimizations for training and inference can differ significantly, directly affecting the Total Cost of Ownership (TCO) of AI infrastructure.

The TPU-Axion Duo: A Paradigm Shift in Architecture

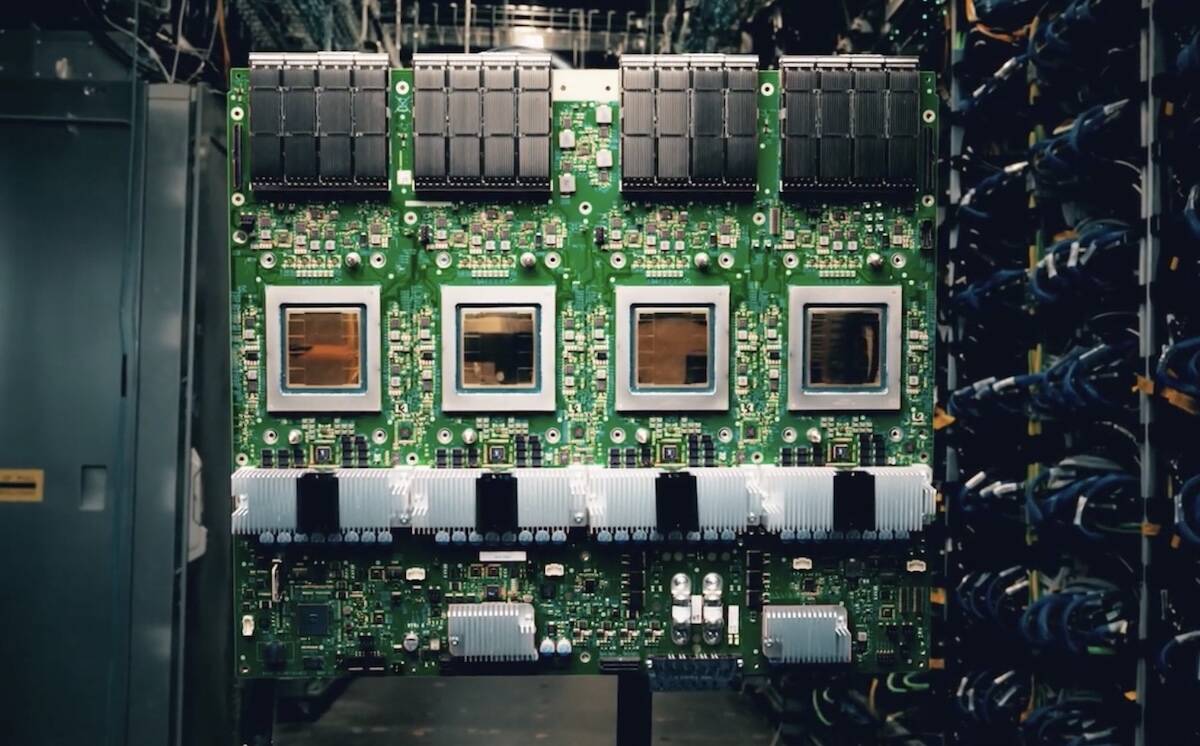

One of the most notable developments is the pairing of Google's new TPUs with Arm-based Axion cores. This choice marks a clear departure from the x86 architecture that has traditionally dominated data centers. Adopting Arm processors to complement the TPUs suggests a strategy aimed at maximizing efficiency and flexibility.

Arm processors are known for their energy efficiency and their ability to deliver competitive performance on specific workloads, which makes them an interesting choice for orchestration and data management within an AI acceleration system. This integration could give Google more granular control over the entire processing pipeline, optimizing communication between CPUs and accelerators and reducing latency, a critical factor for both distributed training and low-latency inference.

Implications for Deployment and TCO

The push towards custom silicon, like Google's, has profound implications for companies evaluating their AI deployment strategies. Cloud-based solutions built on these accelerators can offer access to cutting-edge resources without the burden of up-front capital expenditure (CapEx). However, organizations with data sovereignty needs, stringent compliance obligations, or air-gapped environment requirements might prefer self-hosted or hybrid solutions.

For those evaluating on-premises deployments, the availability of specialized hardware, even if it is not directly accessible as a standalone product, influences the competitive landscape and future technology choices. An infrastructure's ability to support intensive training workloads or handle millions of inference requests per second with a controlled TCO depends heavily on the efficiency of the underlying hardware. Architectures like the one proposed by Google highlight how chip-level optimization is fundamental to achieving these goals.
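To make the TCO trade-off concrete, here is a minimal Python sketch of how cost per million inference requests could be estimated for two deployment options. The `Deployment` class, the helper functions, and every figure in the example are hypothetical placeholders chosen for illustration; none of them come from Google's announcement or reflect real pricing.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical cost model for one way of serving inference."""
    name: str
    capex_usd: float            # up-front hardware spend (0 for pure cloud rental)
    opex_usd_per_year: float    # power, cooling, staff, or cloud fees per year
    requests_per_second: float  # sustained inference throughput at full load
    utilization: float          # fraction of capacity actually used (0..1)


def total_cost_of_ownership(d: Deployment, years: float) -> float:
    """TCO = CapEx + yearly OpEx accumulated over the planning horizon."""
    return d.capex_usd + d.opex_usd_per_year * years


def cost_per_million_requests(d: Deployment, years: float) -> float:
    """Spread the TCO over all requests served during the horizon."""
    seconds = years * 365 * 24 * 3600
    served = d.requests_per_second * d.utilization * seconds
    return total_cost_of_ownership(d, years) / served * 1_000_000


# Illustrative, made-up inputs: replace with measured values before drawing conclusions.
cloud = Deployment("cloud accelerators", 0.0, 400_000.0, 5_000.0, 0.6)
on_prem = Deployment("self-hosted cluster", 900_000.0, 150_000.0, 4_000.0, 0.6)

for option in (cloud, on_prem):
    cost = cost_per_million_requests(option, years=3)
    print(f"{option.name}: ${cost:.4f} per million requests")
```

Even in this simplified model, throughput and utilization sit in the denominator, so gains in hardware efficiency translate directly into a lower cost per request.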

The Race for Innovation in AI Silicon

Google's announcement comes amid intense competition in the AI silicon sector. Many companies are investing in proprietary chips, recognizing that hardware is a critical enabler for the advancement of artificial intelligence. This technological "arms race" is not just about raw computing power, but also about efficiency, programmability, and the ability to tightly integrate hardware and software.

For enterprises, understanding these dynamics is essential for planning future investments and choosing the most suitable platforms for their needs. The choice between solutions based on general-purpose GPUs, FPGAs, or custom ASICs, and the supporting CPU architecture (x86 or Arm), involves significant trade-offs in terms of performance, flexibility, costs, and integration requirements. The market will continue to evolve rapidly, offering increasingly specialized options to address the challenges of AI workloads.
