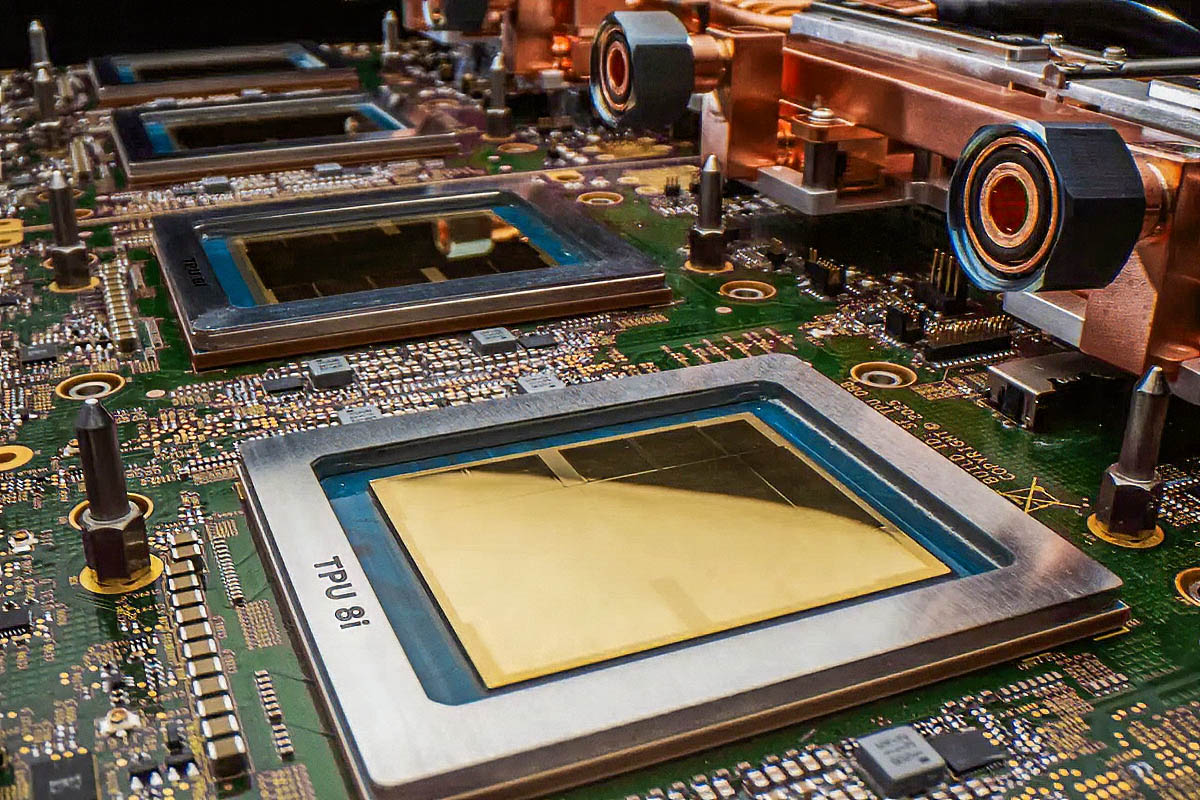

Google Unveils Eighth-Generation TPUs for AI

Google recently announced its eighth-generation Tensor Processing Units (TPUs): the TPU 8i and the TPU 8t. These new iterations of Google's proprietary silicon are each designed with a specific focus, clearly separating the computational requirements of AI inference from those of model training.

The TPU 8i has been developed to handle AI inference workloads, while the TPU 8t is dedicated to training. This specialization reflects an established trend in the industry, where optimizing hardware for specific tasks is fundamental to maximizing efficiency and performance in the era of Large Language Models (LLMs).

Technical Detail and Role in the AI Landscape

The distinction between inference and training hardware is crucial for companies running intensive AI workloads. Training an LLM requires massive computing power, large accelerator memory with high bandwidth, and the ability to sustain floating-point operations for extended periods. The goal is to train complex models on enormous datasets, a process that can take days or weeks.

Inference, on the other hand, focuses on execution speed and throughput, processing a large number of requests in real time or near real time. Here, latency is a critical factor, and techniques such as quantization are often used to reduce model footprint and accelerate responses while maintaining acceptable accuracy. Google's new TPUs fit into this context, offering optimized solutions for each phase, albeit only within Google's own cloud ecosystem.
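To make the quantization idea concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is an illustration only, not how TPUs or any particular framework implement it; the function names and the sample weights are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale factor for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.42, -1.27, 0.05, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight is stored in one byte instead of four (fp32) or two (fp16), at the cost of a rounding error of at most half a quantization step. That is the footprint-versus-accuracy trade-off described above.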

Implications for On-Premise and Hybrid Deployments

Google's announcement, although it concerns proprietary hardware for the company's own cloud, highlights a broader trend with profound implications for organizations evaluating self-hosted or hybrid deployments. The need for specialized AI silicon, for both training and inference, is a key factor in infrastructure planning.

For companies opting for on-premise solutions, hardware selection becomes a strategic decision that directly impacts Total Cost of Ownership (TCO), data sovereignty, and compliance. The availability of GPUs with adequate specifications, such as sufficient VRAM and high throughput, is essential for running LLMs locally. Evaluating these trade-offs is complex and requires an in-depth analysis of specific workload needs, security requirements for air-gapped environments, and the capacity to manage bare-metal infrastructure. AI-RADAR offers analytical frameworks on /llm-onpremise to support these evaluations, highlighting the constraints and opportunities of local deployments.
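As a rough aid for the "sufficient VRAM" question, the sketch below estimates the memory needed just to hold a model's weights at different precisions. It is back-of-the-envelope arithmetic under stated assumptions: the 7-billion-parameter model is a hypothetical example, and the figure excludes the KV cache, activations, and runtime overhead, so real requirements are higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params, precision):
    """Memory for model weights only, in GiB (excludes KV cache and activations)."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


# Hypothetical 7-billion-parameter model, used only as an example.
num_params = 7_000_000_000
for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gib(num_params, precision):.1f} GiB")
```

Under these assumptions the fp16 weights alone need about 13 GiB, while int8 halves that figure. This is why precision choices feed directly into hardware selection and TCO.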

Future Outlook and Strategic Choices

The continuous evolution of AI-dedicated silicon, as demonstrated by Google's new TPUs, underscores the increasing importance of hardware decisions in the artificial intelligence landscape. Organizations must carefully consider whether proprietary cloud solutions, with their advantages in scalability and management, align with their needs for control, security, and long-term costs.

The choice between a cloud-first approach and an on-premise or hybrid deployment is never trivial. Factors such as data sensitivity, industry regulations, and the predictability of operational costs play a decisive role. The availability of specialized hardware, whether offered by cloud providers or third parties for local installations, will continue to be a key element in unlocking the full potential of AI in diverse enterprise contexts.
