The New Generation of TPUs: A Dual-Chip Strategy

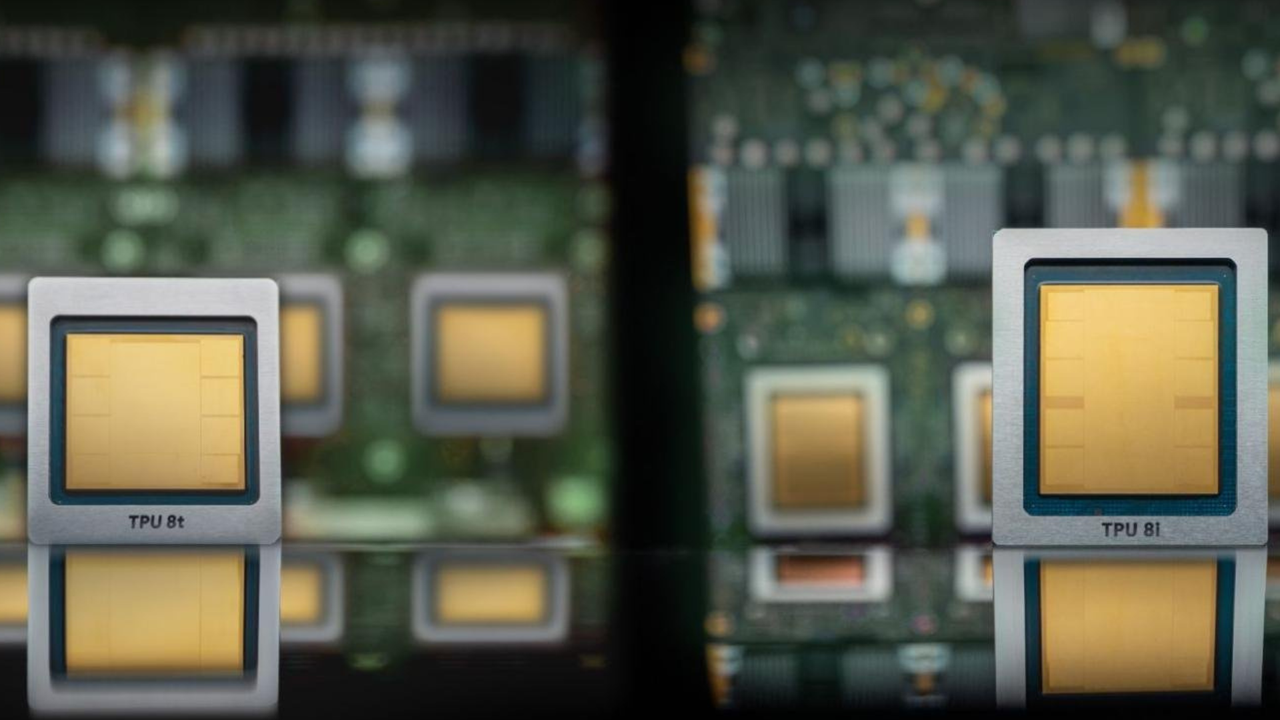

Google recently unveiled its eighth generation of Tensor Processing Units (TPUs), introducing two models: the TPU 8i and the TPU 8t. The move underscores the company's commitment to developing proprietary silicon for artificial intelligence. This is a rapidly evolving sector where hardware optimization is crucial to supporting the growth of Large Language Models (LLMs) and other computationally intensive workloads.

The choice to present two distinct chips, the TPU 8i and TPU 8t, suggests a targeted approach to address two fundamental tasks within AI. Traditionally, AI hardware is optimized for either the training phase or the inference phase. It is plausible that the two models have been designed to excel in these two complementary areas, offering more efficient solutions for the entire lifecycle of an AI model.

Optimization for Specific Workloads and Scalability

Google's strategy with the TPU 8i and 8t centers on optimizing for specific workloads. One chip might be configured to maximize throughput when training complex models, which demands large high-bandwidth memory capacity and fast chip-to-chip interconnects. The other could be optimized for inference, where latency and energy efficiency per token are the critical parameters. This differentiation lets developers and enterprises choose the solution best suited to their needs, maximizing performance while keeping operational costs under control.

A key element of the strategy is the scalability Google claims for these chips relative to other AI accelerators, including Nvidia's. Scaling efficiently is fundamental for handling ever-larger models and datasets: it shortens training times and improves the responsiveness of inference systems. For companies running large LLMs, scalability is not only a performance factor but also a major driver of Total Cost of Ownership (TCO).

Implications for On-Premise Deployment and Hardware Competition

Although Google's TPUs are historically associated with the company's cloud infrastructure, the introduction of new hardware generations like the TPU 8i and 8t has significant implications for the entire AI accelerator market, including self-hosted deployments. The competitive pressure exerted by Google drives innovation across the industry, leading to increasingly performant and efficient hardware solutions even for on-premise environments.

For CTOs, DevOps leads, and infrastructure architects weighing self-hosted against cloud alternatives for AI/LLM workloads, the evolution of proprietary silicon like Google's TPUs underscores the importance of carefully analyzing trade-offs. Data sovereignty, compliance, the need for air-gapped environments, and overall TCO remain priorities. Even when specialized accelerators cannot be deployed on-premise, their presence on the market influences vendors of bare-metal solutions, pushing them to improve their offerings in VRAM, throughput, and latency. For those evaluating on-premise deployments, analytical frameworks for assessing these trade-offs are available at /llm-onpremise.

Future Prospects in the AI Hardware Landscape

Google's eighth-generation TPU strategy reflects a broader trend in the artificial intelligence sector: the increasing specialization of hardware to meet the unique challenges of AI workloads. As models grow in complexity and size, the need for silicon designed specifically for training and for inference becomes ever more pressing. This race for hardware innovation is set to continue, with a constant focus on energy efficiency, memory capacity, and computational speed.

AI deployment decisions, whether in the cloud or on-premise, will increasingly depend on the ability to balance performance needs with cost, security, and control constraints. The introduction of the TPU 8i and 8t by Google not only strengthens its position in cloud AI but also stimulates innovation across the entire hardware ecosystem, offering new opportunities and challenges for companies seeking to fully leverage the potential of artificial intelligence.