GPT-5.3 Accelerated by Cerebras

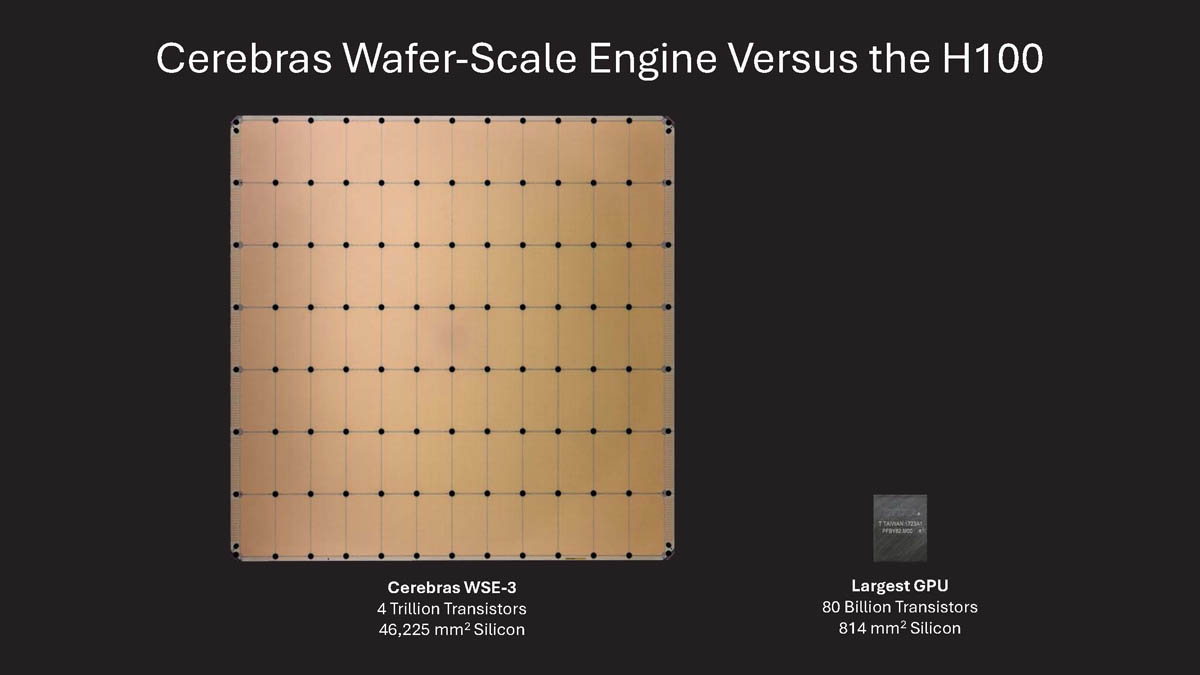

OpenAI's GPT-5.3-Codex-Spark model reaches an inference speed of over 1000 tokens per second when running on Cerebras WSE-3 chips. The integration targets workloads where response latency is the limiting factor.

Teams evaluating on-premise deployments should weigh the trade-offs carefully. AI-RADAR offers analytical frameworks on /llm-onpremise for evaluating them.

Implications for LLM Inference

Higher inference speed makes new real-time applications practical, such as advanced chatbots, immediate predictive analytics, and more responsive recommendation systems. The use of specialized hardware such as Cerebras WSE-3 chips shows that the best performance comes from optimizing the model and the infrastructure together.
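To make the throughput figure concrete, the following minimal sketch converts a token rate into the time needed to generate a response of a given length. The 1000 tokens per second rate comes from the article; the 100 tokens per second baseline and the response lengths are assumptions chosen purely for illustration, not measured figures. The calculation covers only token generation and ignores time to first token, network latency, and queuing.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the time needed to generate num_tokens at a steady decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Rate reported in the article ("over 1000 tokens per second").
REPORTED_RATE = 1000.0
# Hypothetical baseline for comparison only; not a figure from the article.
ASSUMED_BASELINE_RATE = 100.0

# Illustrative response lengths in tokens (assumed values).
for num_tokens in (200, 1000, 4000):
    fast = generation_time_seconds(num_tokens, REPORTED_RATE)
    slow = generation_time_seconds(num_tokens, ASSUMED_BASELINE_RATE)
    print(f"{num_tokens:>5} tokens: {fast:.2f} s at reported rate, "
          f"{slow:.2f} s at assumed baseline")
```

Under these assumptions, a 1000-token response takes about one second to generate at the reported rate, which is the kind of budget interactive applications typically work within.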
