Hanyuan-2's Debut: Promises and Uncertainties in Quantum Computing

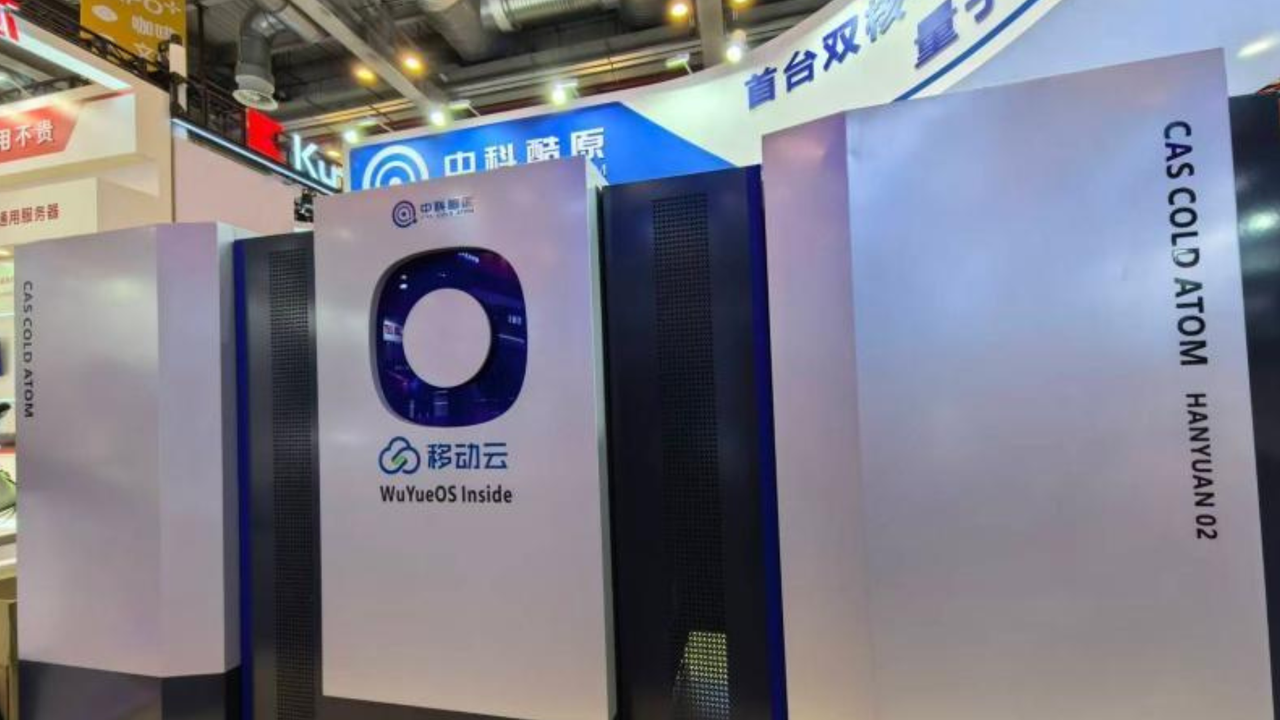

China has announced the debut of Hanyuan-2, a system presented as the world's first dual-core quantum computer. With a claimed capacity of 200 qubits, the machine is positioned as a significant step in the global race towards quantum computing dominance. Its introduction highlights the rapid progress and intense competition in the sector, where every new architecture or increase in qubit count is closely watched.

One aspect particularly emphasized by its developers is the system's power efficiency, which they describe as incredible. In an era where the energy consumption of data centers and high-performance computing infrastructures is a growing concern, the promise of high efficiency is undoubtedly appealing. These claims, however promising, remain to be verified in the absence of concrete and comparable data.

The Importance of Benchmarks in the Technology Landscape

Despite the claims made for Hanyuan-2, a crucial element is missing: performance benchmarks. In the world of technology, and particularly in emerging sectors like quantum computing or Large Language Models (LLMs), benchmarks are the cornerstone of any objective evaluation of a system's capabilities. They allow throughput, latency, scalability, and efficiency to be measured against recognized standards, providing a clear picture of real-world performance.

For CTOs, DevOps leads, and infrastructure architects who must make strategic deployment decisions, the absence of benchmarks is a significant hurdle. Without standardized metrics, it becomes extremely difficult to compare Hanyuan-2 with other existing or future quantum solutions, or to assess its potential impact on specific workloads. This principle applies universally, whether the system in question is a cutting-edge quantum computer or infrastructure for LLM inference on bare-metal hardware.
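As a rough illustration of what measuring throughput and latency involves, the following minimal Python sketch times a repeatable workload and summarizes the results. The `benchmark` and `placeholder_workload` names are hypothetical, and the workload is an arbitrary stand-in; a real benchmark would submit a fixed, published task to the system under test and follow a recognized methodology.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(workload: Callable[[], object], runs: int = 20) -> Dict[str, float]:
    """Run `workload` `runs` times and summarize latency and throughput."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        workload()
        latencies.append(time.perf_counter() - start)
    total = sum(latencies)
    return {
        "runs": float(runs),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
        # Completed runs per second of measured time.
        "throughput_per_s": runs / total if total > 0 else float("inf"),
    }


def placeholder_workload() -> int:
    # Hypothetical stand-in for the task being benchmarked.
    return sum(i * i for i in range(10_000))


result = benchmark(placeholder_workload, runs=5)
print(result)
```

Numbers produced this way only become comparable across systems when the workload, run count, and measurement conditions are fixed and disclosed, which is the role standardized benchmarks play.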

Power Efficiency vs. Performance: A Trade-off to Validate

The power efficiency claimed for Hanyuan-2 is a potential strength, especially considering the Total Cost of Ownership (TCO) of computing infrastructures. Reducing energy consumption can translate into significantly lower operational costs and a smaller environmental footprint. However, efficiency alone is not sufficient unless it is accompanied by adequate performance on the tasks the system is intended to run.

The balance between efficiency and performance is a constant trade-off in every area of computing hardware, from GPUs for LLM training to processors for edge computing. Without benchmarks demonstrating how efficiency translates into a practical advantage in solving quantum problems, Hanyuan-2's real utility for enterprise applications remains unknown. The need for independent and transparent validation is therefore more evident than ever.
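To see why low power draw alone says little, consider energy per completed task, which combines power and speed. The sketch below is illustrative only: the `SystemProfile` class and both sets of figures are invented for the example and are not measurements of Hanyuan-2 or any real machine.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    name: str
    avg_power_watts: float    # average draw while running the workload
    seconds_per_task: float   # measured time to complete one task

    def energy_per_task_joules(self) -> float:
        # Energy (J) = power (W) x time (s)
        return self.avg_power_watts * self.seconds_per_task

    def tasks_per_kwh(self) -> float:
        # 1 kWh = 3,600,000 J
        return 3_600_000.0 / self.energy_per_task_joules()


# Hypothetical figures chosen only to illustrate the trade-off.
low_power = SystemProfile("low-power system", avg_power_watts=100.0, seconds_per_task=50.0)
high_power = SystemProfile("high-power system", avg_power_watts=1000.0, seconds_per_task=2.0)

for system in (low_power, high_power):
    print(system.name, system.energy_per_task_joules(), system.tasks_per_kwh())
```

In this made-up case the system drawing ten times more power uses less energy per task (2,000 J versus 5,000 J) because it finishes far sooner. Without measured task times, a power figure cannot be turned into this kind of comparison.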

Future Prospects and the Need for Transparency

Hanyuan-2's debut underscores the acceleration of research and development in quantum computing globally. While quantum technology is still in its early stages and far from widespread deployment for most business applications, advancements like Hanyuan-2 are important for pushing the boundaries of innovation. However, the maturity of any technology, including LLMs and their supporting infrastructures, depends on the community's ability to rigorously evaluate it.

For decision-makers evaluating the adoption of new technologies, transparency in performance data and adherence to recognized benchmark standards are fundamental. This fact-based approach is essential for mitigating risks and optimizing investments, whether exploring the potential of quantum computing or choosing the best strategy for on-premise LLM deployments. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate trade-offs and constraints in critical deployment contexts, emphasizing the importance of concrete data for every infrastructure decision.
