Holtek: MCU Price Hike, Expansion into AI Server Cooling and Optical Comms

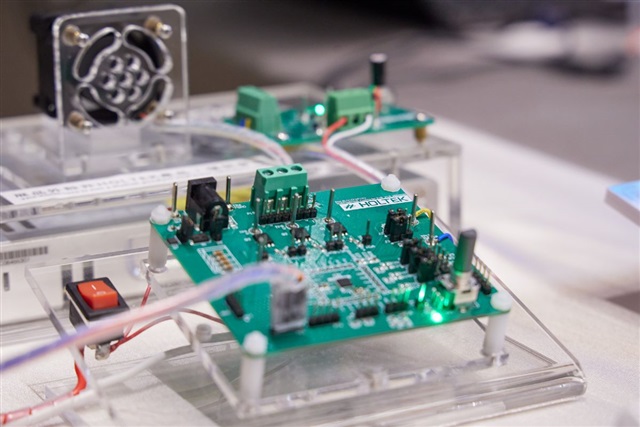

Holtek, an established microcontroller (MCU) manufacturer, has announced a revision of its business strategy. The company intends to raise prices on its low-margin MCUs, a move that signals a repositioning aimed at improving profitability and concentrating resources on more promising market segments. The decision comes amid fluctuating global demand and cost pressures that are prompting manufacturers to reconsider their product portfolios.

In parallel with this pricing strategy, Holtek has unveiled plans to expand into two fast-growing technology sectors: AI server cooling and optical communications. This dual expansion points to a clear strategic focus on high-performance computing infrastructure, particularly infrastructure built for artificial intelligence workloads such as Large Language Models (LLMs).

The Importance of Cooling for AI Infrastructure

Holtek's expansion into the AI server cooling sector addresses an increasingly pressing need in the world of high-performance computing. Modern AI servers, equipped with state-of-the-art GPUs, generate considerable amounts of heat during LLM training and inference operations. This heat, if not managed effectively, can compromise performance, reduce hardware reliability, and drastically increase the Total Cost of Ownership (TCO) of a data center.

For companies evaluating on-premise deployments of AI infrastructure, advanced cooling solutions are a critical factor. Liquid cooling systems, for example, can offer superior efficiency compared to traditional air-based methods, enabling higher computing densities and helping to maintain optimal operating temperatures for components like GPU VRAM. The ability to dissipate heat efficiently is directly related to the sustainability and scalability of local AI architectures.

The Strategic Role of Optical Communications

Holtek's entry into optical communications targets another fundamental component of AI infrastructure. The growing size and complexity of Large Language Models require not only massive computing power but also extremely high-bandwidth, low-latency interconnects between GPUs and servers. Optical links, which offer very high throughput over longer distances than traditional copper links, have become indispensable in this scenario.

For both distributed training and large-scale inference, the speed at which data can be transferred between compute nodes is a limiting factor. Fiber optic-based solutions allow AI clusters to be built with a more flexible and performant architecture, reducing bottlenecks and maximizing the utilization of computing resources. This is particularly relevant for self-hosted environments, which organizations often choose in order to retain data sovereignty and where every infrastructure component affects overall efficiency.
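To make the bandwidth point concrete, the following is a minimal back-of-the-envelope sketch, not a model of any Holtek product or real interconnect. The function name `transfer_seconds`, the 140 GB payload, and the link rates are all illustrative assumptions, and the calculation ignores latency, protocol overhead, and parallel links.

```python
def transfer_seconds(payload_gigabytes: float,
                     link_gigabits_per_s: float,
                     efficiency: float = 1.0) -> float:
    """Idealized time to move a payload over a single link.

    Ignores latency, protocol overhead, and congestion; `efficiency`
    is a hypothetical fraction of the nominal rate actually achieved.
    """
    if link_gigabits_per_s <= 0:
        raise ValueError("link rate must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    payload_gigabits = payload_gigabytes * 8  # 1 byte = 8 bits
    return payload_gigabits / (link_gigabits_per_s * efficiency)


if __name__ == "__main__":
    # Hypothetical payload: 140 GB, roughly 70 billion parameters
    # stored at 2 bytes each. The link rates are example values only.
    for rate in (100, 400, 800):
        print(f"{rate} Gb/s link: {transfer_seconds(140, rate):.2f} s")
```

Under these assumptions the transfer time scales inversely with link rate, which is why interconnect bandwidth can bound how quickly compute nodes exchange data.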

Implications for On-Premise Deployments and Data Sovereignty

Holtek's strategic moves reflect a broader trend in the technology sector, where demand for specialized AI components is growing rapidly. For CTOs, DevOps leads, and infrastructure architects considering on-premise deployments for their LLM workloads, the availability of advanced cooling and optical communication solutions is fundamental. These elements directly influence performance and TCO, and they help make on-premise deployments viable for organizations that prioritize compliance and data sovereignty.

The ability to build and manage robust, high-performing AI infrastructure in a controlled environment, such as an air-gapped data center, depends on the quality and efficiency of every component. The expansion of companies like Holtek into these sectors helps strengthen the ecosystem of providers for self-hosted solutions, offering more options for those seeking alternatives to the public cloud. Those evaluating on-premise deployments face complex trade-offs between initial CapEx, long-term operational costs, and the benefits of greater control and security. The choice of infrastructure components plays a decisive role in that balance.
