The Race for Technological Sovereignty in AI Chips

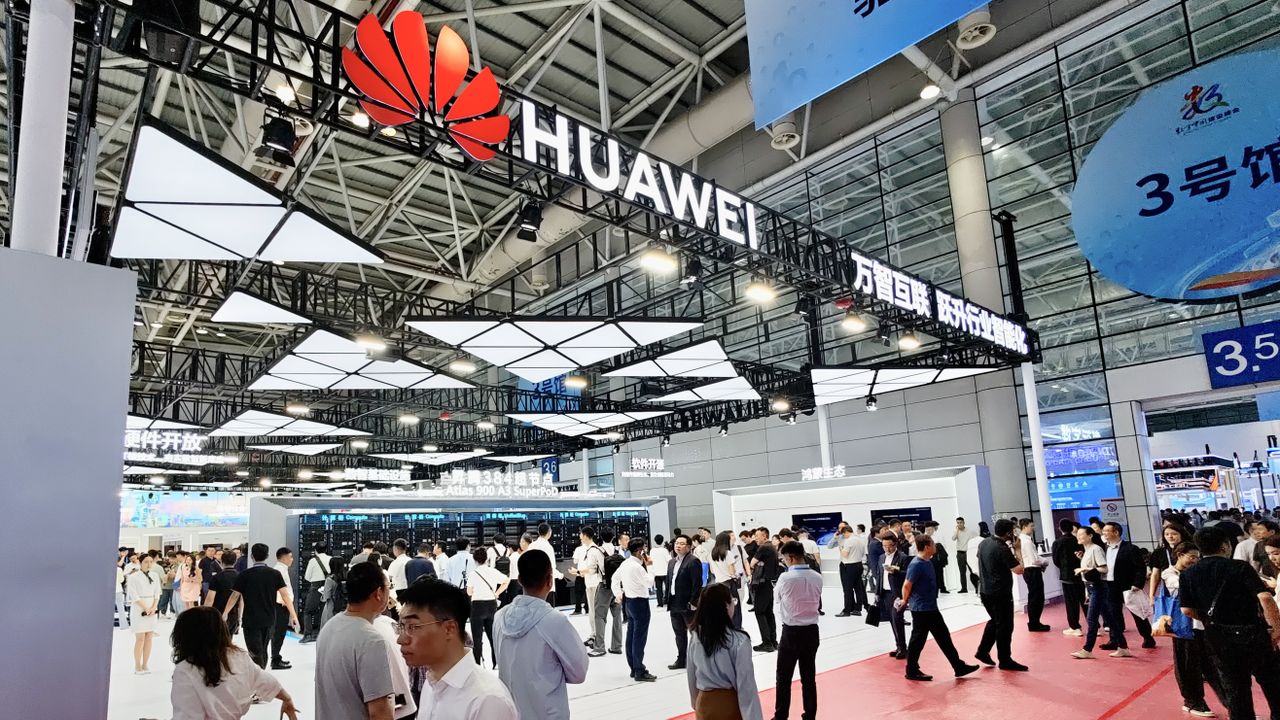

The global artificial intelligence landscape is increasingly shaped by geopolitical dynamics and the pursuit of technological self-sufficiency. In this context, China is intensifying its efforts to strengthen its position in AI hardware, with significant implications for the global market. Recent analysis suggests that Huawei could emerge as a key player, potentially becoming the leading supplier in China's AI chip sector by 2026.

This prospect arises as shipments of Nvidia's H200 chip, a key accelerator for large language model (LLM) workloads and other AI applications, face delays due to regulatory constraints. These obstacles create an opening for local manufacturers and reinforce Beijing's strategy of promoting domestically developed AI hardware.

Regulatory Hurdles and the Rise of Local Players

Export restrictions imposed by some Western governments have directly limited the ability of companies such as Nvidia to supply their most advanced chips to the Chinese market. The slowdown in H200 shipments highlights the challenges global companies face in an increasingly complex regulatory environment. This situation has accelerated China's push for technological independence and encouraged the development of domestic alternatives.

For Chinese companies, the goal is to reduce reliance on external suppliers, securing supply chain continuity and data sovereignty. Huawei, with established expertise in telecommunications and semiconductors, is well positioned to fill the gap left by these restrictions, offering hardware that can meet the country's growing demand for AI computing capacity.

Implications for On-Premise Deployments and TCO

The push for domestic AI hardware has significant implications for enterprise deployment strategies, particularly for organizations evaluating on-premise solutions. Locally available chips can give organizations greater control over infrastructure, data security, and regulatory compliance, which are crucial for sectors such as finance, healthcare, and public administration. A local hardware ecosystem can also affect the Total Cost of Ownership (TCO) of AI deployments.

Although the upfront capital expenditure (CapEx) for on-premise hardware can be significant, reduced reliance on external suppliers and potentially lower operating costs over the long term can offer a strategic advantage. For LLM workloads, hardware choice is critical: both training and inference require large amounts of VRAM and high throughput. The availability of robust local alternatives therefore becomes a decisive factor in infrastructure planning and operational resilience.
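
The CapEx versus operating-cost trade-off and the VRAM requirement can be made concrete with a few lines of arithmetic. The Python sketch below is a simplified model under stated assumptions: the function names are hypothetical, TCO is reduced to upfront CapEx plus a flat annual operating cost, the break-even point compares ownership against an assumed recurring rental or cloud cost for equivalent capacity, and weight memory is estimated as parameter count times bytes per parameter, ignoring the KV cache and activations. All input figures are illustrative, not market data or vendor pricing.

```python
from typing import Optional


def on_prem_tco(capex: float, annual_opex: float, years: int) -> float:
    """Hypothetical TCO model: upfront hardware spend plus flat yearly operating costs."""
    return capex + annual_opex * years


def break_even_years(capex: float, annual_opex: float, annual_rental: float) -> Optional[float]:
    """Years until owning hardware becomes cheaper than renting equivalent capacity.

    Returns None when renting costs no more per year than operating owned
    hardware, so the upfront investment is never recovered in this model.
    """
    yearly_saving = annual_rental - annual_opex
    if yearly_saving <= 0:
        return None
    return capex / yearly_saving


def weights_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Rough memory (decimal GB) needed only to hold model weights.

    Excludes KV cache, activations and framework overhead, so real VRAM
    requirements for inference are higher than this estimate.
    """
    # (params_billion * 1e9 parameters) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


if __name__ == "__main__":
    # Illustrative (assumed) figures only.
    print(on_prem_tco(capex=300_000, annual_opex=50_000, years=5))
    print(break_even_years(capex=300_000, annual_opex=50_000, annual_rental=150_000))
    # A 70B-parameter model stored in 16-bit precision (2 bytes per parameter).
    print(weights_memory_gb(70, 2))
```

With these assumed numbers, ownership pays for itself after three years; lower operating costs or more expensive rented capacity shorten that horizon. Likewise, a 70B-parameter model needs roughly 140 GB just for 16-bit weights, which is why memory capacity weighs so heavily in hardware selection.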

Future Prospects in a Growing Market

The Chinese AI market is projected to reach $67 billion by 2030, a figure that underscores the sector's potential and strategic importance. Competition for leadership in AI chips is a matter not only of market share but also of technological and geopolitical influence. If Huawei does take the lead by 2026, it would mark a turning point and signal greater fragmentation of the global AI semiconductor market.

For companies operating in this context, evaluating hardware options will require careful analysis of the trade-offs among performance, cost, availability, and regulatory compliance. AI-RADAR, for instance, offers analytical frameworks on /llm-onpremise to support on-premise deployment decisions and help navigate a rapidly evolving, increasingly diversified technological landscape. The ability to choose solutions that ensure data sovereignty and control over infrastructure will increasingly be a differentiator.
