Iceotope Raises $26 Million for Liquid Cooling in the AI Era

Iceotope, a British company specializing in precision liquid cooling, has announced the closing of a $26 million Series B funding round. The round was led by Barclays Climate Ventures and Two Seas Capital, with participation from existing investors including ABC Impact, Northern Gritstone, Edinv, and British Patient Capital. The investment is intended to support the expansion of Iceotope's product line and patent portfolio at a time when the artificial intelligence industry is pushing the limits of computing infrastructure.

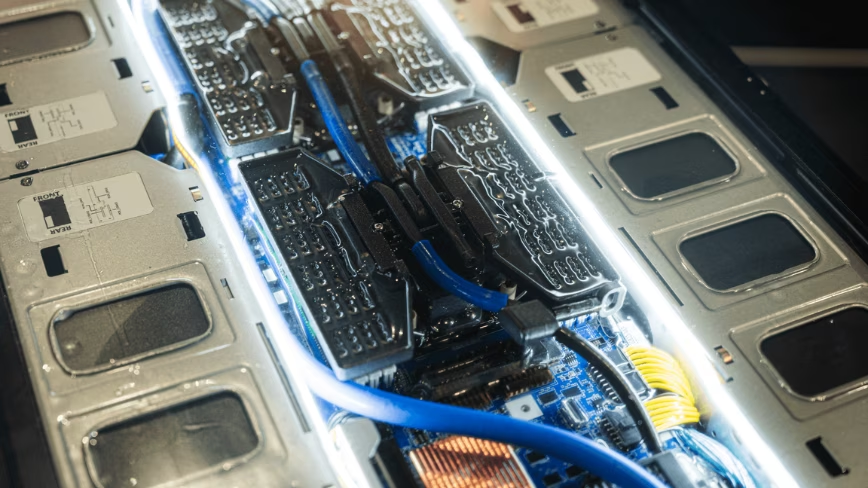

The need for advanced cooling solutions has become urgent. Current AI hardware, packed ever more densely into data center racks, is exceeding the capabilities of traditional air cooling systems. The latest-generation GPUs, essential for training and inference of large language models (LLMs), generate increasingly large amounts of heat, making new approaches to thermal management indispensable.

The Strategic Role of Liquid Cooling in AI

AI workloads demand unprecedented computing power concentrated in ever smaller spaces. This translates into a steep rise in power density per rack and, with it, significant cooling challenges. Air cooling, the standard for decades, struggles to dissipate the heat produced by multi-GPU configurations and high-performance servers. The results are thermal throttling, instability, and ultimately a shorter hardware lifespan.
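To see why air runs out of headroom, consider the basic heat-transport relation Q = ṁ · c_p · ΔT: the heat a coolant carries away equals its mass flow times its specific heat times its temperature rise. The sketch below is illustrative only. The 40 kW rack load and 10 K temperature rise are hypothetical values, not figures from Iceotope, and water is used as a simple stand-in for a liquid coolant; real liquid-cooling systems may use other fluids with different properties.

```python
# Illustrative sketch: coolant volume flow needed to remove a rack's heat load.
# Assumptions (hypothetical, not from the article): a 40 kW rack, a 10 K
# coolant temperature rise, and water as a stand-in liquid coolant.

RACK_POWER_W = 40_000.0  # hypothetical rack heat load in watts
DELTA_T_K = 10.0         # assumed coolant temperature rise across the rack

# Approximate properties near room temperature.
COOLANTS = {
    "air": {"density_kg_m3": 1.2, "cp_j_kgk": 1005.0},
    "water": {"density_kg_m3": 997.0, "cp_j_kgk": 4186.0},
}


def required_flow_m3_s(power_w: float, delta_t_k: float,
                       density: float, cp: float) -> float:
    """Volumetric flow (m^3/s) such that Q = m_dot * c_p * dT equals power_w."""
    mass_flow_kg_s = power_w / (cp * delta_t_k)
    return mass_flow_kg_s / density


def compare(power_w: float = RACK_POWER_W,
            delta_t_k: float = DELTA_T_K) -> dict:
    """Return the required volumetric flow for each coolant in COOLANTS."""
    return {
        name: required_flow_m3_s(power_w, delta_t_k,
                                 props["density_kg_m3"], props["cp_j_kgk"])
        for name, props in COOLANTS.items()
    }


if __name__ == "__main__":
    flows = compare()
    for name, flow in flows.items():
        print(f"{name}: {flow:.5f} m^3/s ({flow * 1000:.2f} L/s)")
    print(f"air needs ~{flows['air'] / flows['water']:.0f}x the volume flow of water")
```

Under these assumptions, air must move roughly 3.3 m³ of volume per second through a single rack, while water needs under a litre per second. The gap (a factor of a few thousand) comes entirely from the difference in volumetric heat capacity, ρ · c_p, which is why liquid cooling scales to rack densities that fans cannot practically serve.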

Precision liquid cooling offers a targeted solution, removing heat directly from the most critical sources, such as processors and graphics cards. This helps maintain optimal operating temperatures, improving the reliability and performance of AI hardware. For organizations deploying local LLM stacks, effective heat management is crucial: keeping GPUs below their throttling thresholds helps maximize throughput and minimize latency, while supporting efficient and sustainable operation.

Implications for On-Premise Deployments and Data Sovereignty

The adoption of liquid cooling has direct implications for on-premise deployment strategies. Companies that keep their AI workloads in self-hosted or air-gapped environments, often for reasons of data sovereignty, compliance, or total cost of ownership (TCO) control, stand to benefit significantly from these technologies. Liquid cooling allows more computing power to be concentrated in a smaller physical space, improving data center space utilization and reducing energy-related operating costs.

Where data security and residency are absolute priorities, the ability to deploy high-performance AI infrastructure locally, without relying on external cloud services, is a competitive advantage. Liquid cooling is an enabler for these scenarios, making it possible to build and operate robust, scalable AI compute clusters directly on-site. For those evaluating the trade-offs between on-premise and cloud deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to support these strategic decisions.

Future Prospects and the Evolution of AI Infrastructure

The investment in Iceotope underscores a broader trend in the tech sector: the growing importance of the physical infrastructure that supports the advancement of artificial intelligence. As large language models become more complex and demand ever more computing resources, the ability to cool hardware effectively will become a distinguishing factor for innovation. Iceotope's planned expansion of its product line and patent portfolio reflects this strategic vision, positioning the company at the center of a rapidly growing market.

The future of AI will not only depend on algorithms or chip power but also on the ability to manage the physical environment in which they operate. Liquid cooling solutions, such as those developed by Iceotope, are set to become a standard component for next-generation data centers, ensuring that the promises of artificial intelligence can be realized at scale, with efficiency and reliability.
