The Dragon in the Server Room: Is DeepSeek the Cure to Copilot’s Pricing Hangover?

Welcome back to AI-Radar. If you have been paying attention to your software engineering budgets lately, you are likely feeling a sudden, sharp pain in your wallet. The honeymoon phase of cheap, seemingly infinite AI assistance is officially over.

As of June 1, 2026, GitHub Copilot is fundamentally altering its billing model, transitioning from a comfortable flat-rate premium request system to a ruthless, usage-based "AI Credits" token model. Heavy developers who rely on agentic coding frameworks are sweating bullets, and Chief Financial Officers are looking at their projected cloud compute expenditures with sheer terror.

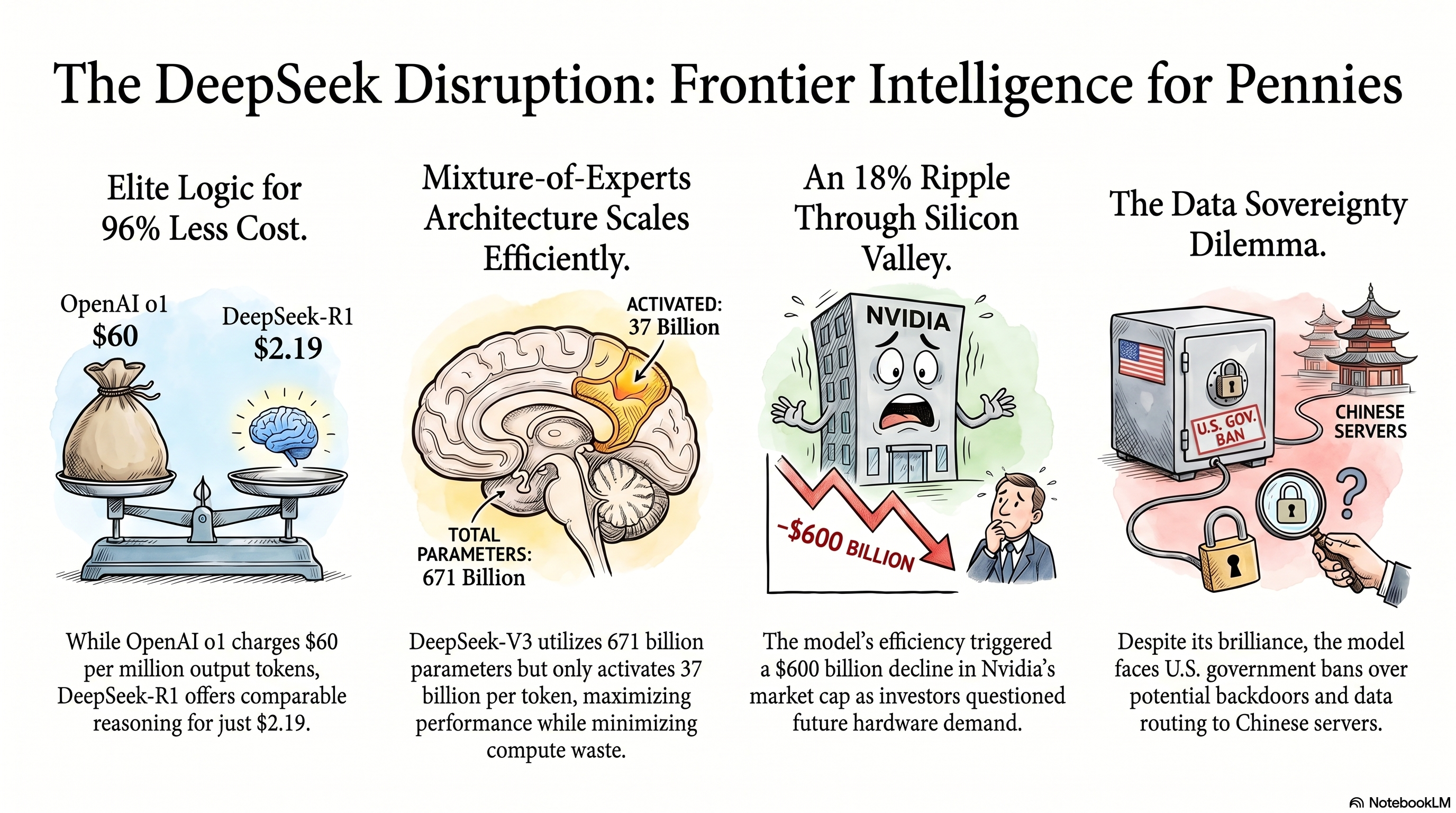

Enter the disruptor: DeepSeek. This relatively new Chinese artificial intelligence company has unleashed a barrage of open-weight models—most notably the DeepSeek-V3, the reasoning powerhouse DeepSeek-R1, and the newly minted DeepSeek-V4 series—that are sending shockwaves through Silicon Valley. Investors have called it an "AI Sputnik moment," and for good reason. DeepSeek is offering frontier-level intelligence at a fraction of the cost, and they are giving the weights away.

But is this Chinese newcomer a valid, enterprise-ready alternative to the polished ecosystems of Microsoft, OpenAI, and Anthropic? Let's dig in. We will explore the pros and cons, tear down the cost comparisons, investigate the intense hardware requirements of the "LLM On-Premise" dream, and confront the massive elephant in the room: geopolitical security risks.

--------------------------------------------------------------------------------

Part 1: The Copilot Conundrum and the Great Price Squeeze

To understand why the industry is suddenly infatuated with DeepSeek, we first have to understand what Microsoft and GitHub are doing to your credit card.

For the past couple of years, GitHub Copilot was a wildly generous deal. You paid a flat fee—$10 a month for Pro, $19 for Business, or $39 for Enterprise—and you essentially received an unlimited coding buddy. However, AI has evolved. We are no longer just asking Copilot to autocomplete a Python function; we are asking agentic AI to read entire repositories, refactor architecture, and run multi-hour autonomous coding sessions.

Because of this escalating compute demand, GitHub is shifting to a token-based usage model on June 1, 2026. Premium Request Units (PRUs) are dead, replaced by GitHub AI Credits (where 1 credit equals $0.01). Under the new rules, your input, output, and cached tokens will be metered against the published API rates of the underlying models (like Claude 3.5 Sonnet, GPT-4.1, and OpenAI o3).
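To make the metering concrete, here is a minimal sketch of how a single request might translate into credits. Only the 1 credit = $0.01 conversion comes from the description above; the function name, the separate cached-token rate, and the example rates and token counts are illustrative assumptions, not GitHub's actual billing code.

```python
# Sketch of token-metered billing. Every rate below is an assumption
# supplied by the caller, not a published GitHub figure.
CREDIT_USD = 0.01  # from the article: 1 GitHub AI Credit = $0.01


def credits_for_request(input_tokens, output_tokens, cached_tokens,
                        input_rate, output_rate, cached_rate):
    """Return the credits consumed by one request.

    Rates are USD per 1M tokens. `input_tokens` counts uncached input only;
    `cached_tokens` counts input served from the prompt cache.
    """
    usd = (input_tokens * input_rate
           + cached_tokens * cached_rate
           + output_tokens * output_rate) / 1_000_000
    return usd / CREDIT_USD


# Hypothetical agent turn: 40k fresh input, 160k cached input, 5k output,
# at illustrative rates of $3.00 / $0.30 / $15.00 per 1M tokens.
example = credits_for_request(40_000, 5_000, 160_000,
                              input_rate=3.00, output_rate=15.00,
                              cached_rate=0.30)
print(f"{example:.1f} credits for one turn")
```

Multiply a figure like that by the dozens of turns in an agentic session and it becomes clear how quickly a monthly allowance can drain.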

For the average power user, this is a crisis. While basic code completions remain free, any heavy lifting in Copilot Chat or agent mode will burn through your monthly included credits at blistering speeds. Previously, if you ran out of premium requests, the system would gracefully downgrade you to a cheaper model as a fallback. No longer. Under the new usage-based regime, when you run out of credits, you are completely cut off until you pay for more.

If you are an enterprise with 50 developers burning through tokens, your predictable monthly GitHub bill (about $1,950 for 50 Enterprise seats at $39 each) could easily balloon into a massive, unpredictable liability. The era of subsidized AI is dead, and the era of the $0.04 extra request is here.

--------------------------------------------------------------------------------

Part 2: Meet DeepSeek – The Disruptor from Hangzhou

If Copilot’s new pricing model is the disease, DeepSeek is presenting itself as the cure.

Founded in July 2023 by Liang Wenfeng, a quant hedge fund prodigy and co-founder of High-Flyer, DeepSeek operates out of Hangzhou, China. Unlike traditional Silicon Valley tech startups that are heavily funded by venture capital and focused on flashy consumer products, DeepSeek approaches AI with the ruthless mathematical optimization of a high-frequency trading firm.

They have achieved a staggering pace of iteration. Over the last two years, they moved from basic Llama-style transformers to highly sophisticated Mixture-of-Experts (MoE) designs.

The Current DeepSeek Arsenal:

- DeepSeek-R1: Released in early 2025, this is a dedicated reasoning model designed to compete directly with OpenAI's o1 and o3 series. It excels in complex logic, advanced mathematics, and step-by-step problem solving.
- DeepSeek-V3.2: A budget-friendly, highly capable general-purpose MoE model that punches well above its weight class.
- DeepSeek-V4 (Pro and Flash): The newest kids on the block, released in April 2026. The V4-Pro is a behemoth with 1.6 trillion total parameters (49 billion active per token) and a default 1-million-token context window. The V4-Flash is its smaller, leaner sibling, boasting 284 billion parameters (13 billion active).

What makes DeepSeek special isn't just its size; it is how incredibly cheap it is to train and run. DeepSeek claims they trained the V3 model for a mere $5.57 million using 2.788 million H800 GPU hours. Compare that to the estimated $100 million OpenAI spent training GPT-4, and you begin to understand why the industry is calling this a "Sputnik moment".
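As a quick sanity check on those figures, the arithmetic below derives the GPU-hour price implied by the claimed budget and the ratio to the GPT-4 estimate. The inputs are the numbers quoted above; the variable names are my own.

```python
# Back-of-the-envelope check on the training-cost figures quoted above.
gpu_hours = 2.788e6          # H800 GPU hours claimed for DeepSeek-V3
claimed_cost_usd = 5.57e6    # claimed training cost
gpt4_estimate_usd = 100e6    # estimate cited for GPT-4

implied_rate = claimed_cost_usd / gpu_hours       # USD per GPU hour
cost_ratio = gpt4_estimate_usd / claimed_cost_usd

print(f"Implied rental rate: ${implied_rate:.2f} per GPU hour")
print(f"GPT-4 estimate is about {cost_ratio:.0f}x the claimed V3 cost")
```

In other words, the headline number assumes roughly $2 per H800 hour and covers GPU time only, not research staff, failed runs, or data costs.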

They achieved this through radical architectural innovations. DeepSeek utilizes Multi-head Latent Attention (MLA), which compresses the Key-Value (KV) cache into a low-rank latent vector, drastically reducing the memory footprint required during inference. They also pioneered an auxiliary-loss-free load balancing strategy for their MoE architecture, ensuring that expert models don't suffer performance degradation while maintaining computational efficiency. Finally, they implemented Multi-Token Prediction (MTP), allowing the model to predict multiple future tokens simultaneously, enabling highly efficient speculative decoding and slashing latency.
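To get a feel for why compressing the KV cache matters, the sketch below compares per-token cache size for conventional multi-head attention against a single compressed latent vector. The dimensions are assumptions loosely modeled on a DeepSeek-V3-sized configuration; treat them as illustrative rather than as the exact production values.

```python
# Rough KV-cache size comparison: standard multi-head attention (MHA)
# versus a compressed latent cache in the style of MLA.
# All dimensions are illustrative assumptions.
N_LAYERS = 61        # assumed transformer layers
N_HEADS = 128        # assumed attention heads
HEAD_DIM = 128       # assumed per-head dimension
LATENT_DIM = 512     # assumed compressed KV latent size
ROPE_DIM = 64        # assumed decoupled positional key size
BYTES_PER_VALUE = 2  # 16-bit cache entries

# MHA stores a full key and a full value vector for every head.
mha_values_per_layer = 2 * N_HEADS * HEAD_DIM
# The latent cache stores one shared vector plus a small positional key.
mla_values_per_layer = LATENT_DIM + ROPE_DIM


def cache_gib(values_per_layer, context_tokens):
    """Cache size in GiB for one sequence of `context_tokens` tokens."""
    total_bytes = values_per_layer * N_LAYERS * BYTES_PER_VALUE * context_tokens
    return total_bytes / 2**30


context = 128 * 1024
print(f"MHA cache:    {cache_gib(mha_values_per_layer, context):.1f} GiB")
print(f"Latent cache: {cache_gib(mla_values_per_layer, context):.1f} GiB")
print(f"Reduction:    {mha_values_per_layer / mla_values_per_layer:.1f}x")
```

Under these assumptions the cache for one long-context session shrinks from hundreds of gigabytes to single digits, which is what makes large context windows affordable to serve.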

--------------------------------------------------------------------------------

Part 3: The API Economics – Penny-Pinching with the Big Boys

Let's look at the hard numbers. If you decide to bypass Copilot and wire your Visual Studio Code directly into an API using extensions like Cline, Roo Code, or Continue.dev, the cost differences are nothing short of astronomical.

Here is a comprehensive breakdown of the API costs as of mid-2026, priced per 1 Million Tokens (Input / Output):

| Provider / Model | Input (per 1M) | Output (per 1M) | Context Window |
|---|---|---|---|
| DeepSeek V4 Flash | $0.14 | $0.28 | 1,000,000 |
| DeepSeek-Chat V3.2 | $0.28 | $0.42 | 128,000 |
| DeepSeek V4 Pro | $0.30 | $0.50 | 1,000,000 |
| DeepSeek R1 | $0.55 | $2.19 | 128,000 |
| Google Gemini 3.1 Flash Lite | $0.25 | $1.50 | 1,000,000 |
| Google Gemini 3.1 Pro | $2.00 | $12.00 | 1,000,000 |
| OpenAI GPT-5.4 | $2.50 | $15.00 | 272,000 (1M in Codex) |
| Anthropic Claude Sonnet 4.6 | $3.00 | $15.00 | 1,000,000 |
| Anthropic Claude Opus 4.6 | $5.00 | $25.00 | 1,000,000 |
| OpenAI o3 | $2.00 | $8.00 | 128,000 |

Note: Pricing metrics source from industry tracking in early-to-mid 2026.

Look closely at the table above. DeepSeek V4 Pro is roughly 8 times cheaper than GPT-5.4 on input tokens ($0.30 vs. $2.50) and a staggering 30 times cheaper on output tokens ($0.50 vs. $15.00).

But the real magic trick of DeepSeek’s pricing is their Cache Hit Discount. If your prompts share a common prefix, which is typical in coding workflows where the system prompt, tool definitions, and project structure are sent repeatedly, cached input tokens on DeepSeek V4 cost a measly $0.03 per million. Against V4 Pro's $0.30 list price, that is a 90% discount on inputs.

If you have a heavy autonomous agent running a multi-step debugging session, sending the entire codebase structure back and forth, you could be racking up $35 a month on OpenAI's GPT-5.4. On DeepSeek V4 Pro? That exact same workload would cost you roughly $4. It's no wonder developers are abandoning traditional Copilot subscriptions in favor of a "Bring Your Own Key" (BYOK) setup.
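The article does not state the token volumes behind those monthly figures, so the sketch below uses a hypothetical workload (10M input tokens, 80% of them cache hits, 1M output tokens) to show how the comparison is computed. The list prices come from the table above; the workload, the function name, and the assumption that GPT-5.4 gets no cache discount are mine.

```python
# Monthly API cost for a hypothetical agent workload.
# Prices are USD per 1M tokens, taken from the pricing table above.
def monthly_cost(input_m, output_m, input_rate, output_rate,
                 cache_hit_ratio=0.0, cached_rate=None):
    """Cost in USD for `input_m` / `output_m` million tokens.

    `cache_hit_ratio` is the share of input tokens billed at `cached_rate`.
    With no `cached_rate`, cached tokens are billed at the normal input rate.
    """
    if cached_rate is None:
        cached_rate = input_rate
    cached_m = input_m * cache_hit_ratio
    fresh_m = input_m - cached_m
    return (fresh_m * input_rate
            + cached_m * cached_rate
            + output_m * output_rate)


# Assumed workload: 10M input tokens (80% cache hits) and 1M output tokens.
gpt_54 = monthly_cost(10, 1, input_rate=2.50, output_rate=15.00)
v4_pro = monthly_cost(10, 1, input_rate=0.30, output_rate=0.50,
                      cache_hit_ratio=0.8, cached_rate=0.03)

print(f"GPT-5.4:         ${gpt_54:.2f}")
print(f"DeepSeek V4 Pro: ${v4_pro:.2f}")
```

The exact totals shift with your own input/output mix and cache hit rate, so it is worth plugging in numbers from your real usage logs before making a switch.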

--------------------------------------------------------------------------------

Part 4: The Benchmark Brawl – Is DeepSeek Actually Smart?

Cheap AI is useless if it hallucinates terrible code. So, how does DeepSeek stack up against the undisputed coding champion of 2026, Claude Opus 4.6, and the heavy hitter GPT-5.5?

The answer is: shockingly well. The narrative that open-weight models are perpetually 12 to 18 months behind proprietary closed-source models has been completely dismantled by DeepSeek.

Let's look at the leaderboard for complex, real-world software engineering, utilizing the industry standard SWE-bench:

| Model | SWE-bench Verified / Pro | AIME 2024 / 2025 | GPQA Diamond |
|---|---|---|---|
| Claude Opus 4.6 / 4.7 | 80.8% / 64.3% (Pro) | 99.8% | 91.3% |
| MiniMax M2.5 (Open-Source) | 80.2% | - | - |
| GPT-5.4 / 5.5 | ~80.0% / 58.6% (Pro) | 88.0% | 92.0% |
| DeepSeek V4 Pro | 55.4% (Pro) | 89.3% (V3.2)* | 90.1% |
| DeepSeek R1 | ~72.0% | 79.8% | 71.5% |

Note: SWE-bench scoring varies wildly based on scaffolding and whether you look at the "Verified" or "Pro" subsets, which were revised in 2026 due to contamination concerns.

While Anthropic's Claude Opus 4.6/4.7 generally maintains the absolute crown for nuanced architecture and deep, multi-file refactoring, DeepSeek V4 Pro is biting at its heels. For mathematical reasoning, DeepSeek R1 scores an incredible 97.3% on MATH-500, officially surpassing OpenAI's o1-1217 model (96.4%).

DeepSeek V4 also introduces three distinct reasoning effort modes:

- Non-think: For fast, intuitive routine tasks.
- Think High: Slower, more deliberate logical analysis.
- Think Max: Pushes the model's compute limits to solve the hardest boundary problems.

For your average enterprise developer doing daily bug fixing, boilerplate generation, and script writing, the gap between DeepSeek V4 Pro and Claude Opus in the table above (about 9 points on SWE-bench Pro, roughly 1 point on GPQA Diamond) will rarely show up in day-to-day work. You will, however, notice the 85% reduction in your monthly API bills.

--------------------------------------------------------------------------------

Part 5: Going Off-Grid – The "LLM On-Premise" Dream

Let's address the ultimate enterprise fantasy: absolute data sovereignty.

With GitHub Copilot, you are inherently tied to Microsoft's cloud infrastructure. Your intellectual property is transmitted over the internet. But because DeepSeek's models are "open-weight" (released primarily under the highly permissive MIT License), you can theoretically download the weights and run the entire brain on your own servers.

Here is where the fantasy hits the brick wall of hardware reality.

DeepSeek V4 Pro and V3.2 Speciale are colossal MoE models. V3.2 Speciale clocks in at 685 billion total parameters, and V4 Pro is an absolute leviathan at 1.6 trillion parameters. You cannot run these on your souped-up gaming PC.

The Data Center Reality

To run the full 671B/685B DeepSeek models in real-time for production-speed inference, you need serious, data-center-class hardware. Even when heavily quantized to 4-bit precision (like AWQ), the weights alone take about 335 to 345 GB, and practical VRAM requirements land at 350 to 400 GB once runtime overhead is included. In full FP16 precision, the weights alone need roughly 1.34 to 1.37 TB, so plan for about 1.4 TB.
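The memory figures follow from simple arithmetic: parameter count times bits per parameter. The helper below is a minimal sketch of that estimate for model weights only; it ignores the KV cache, activations, and framework overhead, and the function name is my own.

```python
# Weight-only memory estimate: parameters x bits per parameter.
# Ignores KV cache, activations, and runtime overhead.
def weight_memory_gb(params_billion, bits_per_param):
    """Return the memory needed for model weights, in decimal GB."""
    return params_billion * bits_per_param / 8


for name, params in [("671B", 671), ("685B", 685), ("V4 Pro 1.6T", 1600)]:
    fp16 = weight_memory_gb(params, 16)
    int4 = weight_memory_gb(params, 4)
    print(f"{name:>12}: FP16 {fp16:,.0f} GB | 4-bit {int4:,.1f} GB")
```

Real deployments need extra headroom on top of these numbers, which is why the practical requirements quoted for quantized models sit above the raw weight size.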

Hardware Options for Full Model Deployment:

- Minimum Viable (Quantized 4-bit/FP8): 8x NVIDIA H100 80GB GPUs. This gives you 640GB of VRAM, allowing the model weights (~400GB) to load while leaving around 240GB for the KV cache to handle 64K-80K context lengths. Total cost? Well north of $300,000.
- Recommended Production: 8x NVIDIA H200 141GB GPUs. Yields 1.13 TB of VRAM, allowing for the full 128K context window at high concurrency.
- The Mac Studio Farm: For the creative budget engineers, four maxed-out Apple Mac Studios (192GB Unified Memory each) networked together can theoretically run the model for around $22,000.
- The Budget CPU/RAM Build: If you don't care about latency, you can build a massive CPU-based server. Using Dual AMD Epyc 9005 series processors and 1TB of 12-channel DDR5-6000 RAM on a Gigabyte MZ73-LM0 motherboard, you can build a server for roughly $20,000. The catch? Your token generation speed will crawl at a sluggish 5 to 35 tokens per second. You will be watching the code type out like it's 1995 dial-up internet.
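The capacity numbers in these hardware options can be checked with a few lines of arithmetic. This sketch totals the memory of each configuration and subtracts the roughly 400GB of quantized weights quoted above; the function name is hypothetical, and the result is an upper bound on what is left for the KV cache.

```python
# Total memory per hardware option and the headroom left after loading
# ~400 GB of quantized weights (figure quoted in the list above).
def fleet_headroom(device_count, gb_per_device, weights_gb):
    """Return (total_gb, headroom_gb) for a group of identical devices."""
    total = device_count * gb_per_device
    return total, total - weights_gb


QUANTIZED_WEIGHTS_GB = 400

options = {
    "8x H100 80GB": (8, 80),
    "8x H200 141GB": (8, 141),
    "4x Mac Studio 192GB": (4, 192),
}

for name, (count, size) in options.items():
    total, spare = fleet_headroom(count, size, QUANTIZED_WEIGHTS_GB)
    print(f"{name:>20}: {total} GB total, {spare} GB left for KV cache")
```

Usable headroom will be lower in practice, since activations and the serving framework also claim memory on every device.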

The Saviors: Distilled Models

If your IT department just laughed you out of the room for requesting an 8x H100 server rack, DeepSeek has provided a brilliant alternative: Distillation.

DeepSeek has extracted the reasoning capabilities of the massive R1 model and injected them into smaller, more efficient architectures based on Llama and Qwen.

- DeepSeek-R1-Distill-Llama-70B: A fantastic 70-billion parameter model that scores an amazing 94.5% on MATH-500. Quantized to 4-bit, this requires only about 40 GB of VRAM, meaning you can easily run this locally on two standard NVIDIA RTX 3090 or 4090 gaming GPUs.
- DeepSeek-R1-Distill-Qwen-32B: This model fits comfortably on a single consumer GPU (requiring only ~20-24 GB VRAM). Despite its small size, it scores 72.6% on AIME 2024 and is an excellent, free, fully private desktop coding assistant.

Deploying these distilled models locally via tools like Ollama or LM Studio, and connecting them to VS Code extensions like Cline or Continue.dev, creates a completely off-grid, $0/month coding assistant. Your code never leaves your laptop.
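If you want to script against a local model rather than go through an editor extension, Ollama exposes a small HTTP API on localhost. The sketch below builds a non-streaming request to its `/api/generate` endpoint using only the standard library. The model tag `deepseek-r1:70b` is an assumption; use whatever tag `ollama list` shows on your machine.

```python
# Minimal sketch of calling a locally served model through Ollama's HTTP API.
# Assumes Ollama is running on its default port and the model is pulled.
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local endpoint
DEFAULT_MODEL = "deepseek-r1:70b"  # assumed tag; check `ollama list`


def build_request(prompt, model=DEFAULT_MODEL):
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local(prompt, model=DEFAULT_MODEL):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `ask_local("Explain this stack trace: ...")` returns the generated text as a string, and the request never leaves the machine.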

--------------------------------------------------------------------------------

Part 6: The Elephant in the Server Room – Security, Privacy, and Geopolitics

Now we must address the glaring disadvantages. It is time to talk about the fact that DeepSeek is operated by Hangzhou DeepSeek Artificial Intelligence Co., Ltd., deep within the borders of the People's Republic of China (PRC).

For hobbyists, this might not matter. But if you are a defense contractor, a financial services firm, or a healthcare provider operating under strict HIPAA or SOC 2 compliance, adopting DeepSeek poses massive, potentially disqualifying risks.

Data Siphoning and the CCP

If you use the official DeepSeek API or chat interface, you must abide by their privacy policy. The policy is explicitly clear: they collect your prompts, uploaded files, keystroke patterns, IP addresses, and hardware information, and they store that information on secure servers located in the PRC.

Under Chinese cybersecurity and national intelligence laws (including the 2017 National Intelligence Law and the 2021 Data Security Law), commercial entities are legally compelled to share data with the Chinese Communist Party (CCP) upon request. In simple terms: any proprietary code, database schemas, or API keys you accidentally paste into DeepSeek’s hosted services are potentially accessible by the Chinese government.

The U.S. government has taken notice. Following DeepSeek's rise in popularity, the U.S. Navy, NASA, and the House of Representatives formally banned the application on official devices due to severe national security and data privacy concerns. International regulators in Italy, Taiwan, Australia, and South Korea quickly followed suit with their own bans.

Vulnerabilities and "Jailbreaks"

Aside from state-sponsored surveillance, DeepSeek has suffered from a demonstrable lack of standard enterprise security hardening. In a report published by the U.S. Department of Commerce’s Center for AI Standards and Innovation (CAISI), testers found DeepSeek models were 12 times more likely to follow malicious instructions (agent hijacking) compared to U.S. frontier models.

Furthermore, DeepSeek is highly susceptible to "jailbreaking." CAISI reported that DeepSeek’s most secure model responded to 94% of overtly malicious requests when common jailbreaking techniques were used. Researchers from Cisco confirmed this, achieving a 100% attack success rate when prompting DeepSeek to generate harmful content on the HarmBench dataset. This makes DeepSeek a liability for automated, customer-facing agentic workflows, as bad actors can easily trick the model into executing malicious code or exposing backend systems.

If that wasn't enough, in late January 2025, cloud security firm Wiz uncovered an exposed, unauthenticated DeepSeek database (ClickHouse) that leaked over a million records, including chat logs, backend data, and live API secrets. While the database was quickly secured, it highlighted a reactive, immature approach to fundamental infrastructure security.

The Digital Enforcer

Finally, there is the censorship aspect. As required by PRC law, DeepSeek models are trained to adhere to "core socialist values". DeepSeek operates an automated filtering system that aggressively suppresses or alters responses to politically sensitive topics—such as human rights issues, the status of Taiwan, or the Great Firewall of China—in 85% of tested cases. While this censorship won't stop the model from writing a React component, it introduces systemic bias and unreliability if you intend to use the model for broad research, document synthesis, or general analysis.

--------------------------------------------------------------------------------

Part 7: Pros and Cons Summary

To make this simple for our AI-Radar enterprise architects and IT leaders, here is the executive summary of the DeepSeek vs. Copilot debate:

| The Pros (Why you should use DeepSeek) | The Cons (Why you should run away) |
|---|---|
| Unbeatable Economics: At $0.30/$0.50 per 1M input/output tokens, V4 Pro is roughly 8 to 50 times cheaper than GPT-5.4 or Claude Opus 4.6. The 90% cache hit discount is a financial game-changer. | Data Sovereignty Nightmare: API data is stored in China, subject to CCP intelligence laws. A severe risk for confidential intellectual property. |
| Frontier-Level Reasoning: DeepSeek R1 and V4 Pro match or beat OpenAI o1 and GPT-5.5 on intense logical, mathematical, and coding benchmarks (SWE-bench, MATH-500). | Security Immaturity: Highly susceptible to jailbreaks, agent hijacking, and prompt injection. A history of leaky databases and poor infrastructure hardening. |
| Open-Weight Freedom: The MIT license allows you to download the models and run them 100% locally, eliminating API costs and ensuring absolute data privacy (if you have the hardware). | Massive On-Premise Costs: Running the 671B parameter models locally requires $300,000+ in NVIDIA H100/H200 GPUs. Not viable for small IT departments. |
| Massive Context Window: 1-million-token context length comes standard with V4, handled hyper-efficiently by their MLA architecture. | Censorship and Bias: The model acts as a digital enforcer for the CCP, actively refusing to discuss a wide range of political and historical topics. |
| Excellent Distilled Options: The 32B and 70B distilled models offer incredible reasoning power on cheap consumer hardware for solo developers. | Compliance Failures: Lacks SOC 2, ISO 27001, and HIPAA certifications. Impossible to pass through strict corporate compliance officers. |

--------------------------------------------------------------------------------

Part 8: The Verdict for AI-Radar Readers

So, is DeepSeek a valid alternative to GitHub Copilot in the era of usage-based billing?

The answer is a resounding yes—but with a massive, flashing asterisk.

If you are an individual developer, an open-source contributor, or a startup bootstrapping your way to an MVP, sticking with a $39/month Copilot Pro+ subscription that hard-caps your advanced queries is mathematically foolish. You should immediately download the Cline or Continue.dev extension for VS Code, wire it to the DeepSeek V4 API, and enjoy frontier-level agentic coding for literal pennies. Or better yet, download the DeepSeek-R1-Distill-Llama-70B model via Ollama and run it locally for free.

However, if you are a Chief Information Security Officer (CISO) at a Fortune 500 company, piping your proprietary, unreleased corporate source code directly into an API hosted in Hangzhou is a fireable offense. The legal ramifications, regulatory fines, and risk of intellectual property theft are simply too high.

For the enterprise, the optimal path forward in 2026 is a Hybrid Routing Architecture. Stop using Claude Opus 4.6 and GPT-5.5 for every single line of boilerplate code; it is financial suicide. Instead, use open-weight models like DeepSeek V3.2 or Qwen 3.5, deployed on-premise or securely within your own AWS/GCP Virtual Private Cloud (VPC) via services like Predibase or Northflank. This guarantees that your data never leaves your control while utilizing DeepSeek's incredible efficiency. Then, reserve your expensive OpenAI or Anthropic API credits strictly for the most complex, high-security, multi-step architectural synthesis tasks.
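A hybrid router can start out very simple. The sketch below sends routine work to a self-hosted open-weight model and reserves the premium API for tasks above a complexity threshold. The `Task` fields, tier labels, and threshold are hypothetical placeholders; a real router would also weigh context length, latency budgets, and your own data-handling policy.

```python
# Minimal sketch of a hybrid routing policy. All names are hypothetical.
from dataclasses import dataclass

SELF_HOSTED = "self-hosted-open-weight"  # e.g. a model served inside your VPC
PREMIUM_API = "premium-closed-api"       # e.g. a metered frontier model


@dataclass
class Task:
    description: str
    complexity: int  # 1 = boilerplate ... 5 = multi-step architecture work


def route(task, premium_threshold=4):
    """Pick a model tier: premium only for tasks at or above the threshold."""
    if task.complexity >= premium_threshold:
        return PREMIUM_API
    return SELF_HOSTED


tasks = [
    Task("Generate CRUD boilerplate", complexity=1),
    Task("Fix an off-by-one bug", complexity=2),
    Task("Redesign the service boundary across five repos", complexity=5),
]

for t in tasks:
    print(f"{t.description:<50} -> {route(t)}")
```

Starting with a single explicit rule like this makes the cost trade-off visible and easy to tune as usage data comes in.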

The era of relying on a single, expensive AI provider is over. DeepSeek has proven that the moat built by Silicon Valley wasn't quite as deep as we thought. The dragon is in the server room, and it writes exceptionally good Python. Just make sure you lock the cage before you let it read your database schemas...

Jokes aside, I have started using DeepSeek R1 via Continue.dev in VS Code while developing my MachinaOS project. I use it as quality architect and tester, leaving the coding to Claude Sonnet 4.6, and the feedback so far is really positive. It will earn five stars once I find a way to bypass the approval requests in Continue, but this is a known bug.
