The Evolution of Process Nodes: The Role of Intel 18A-P

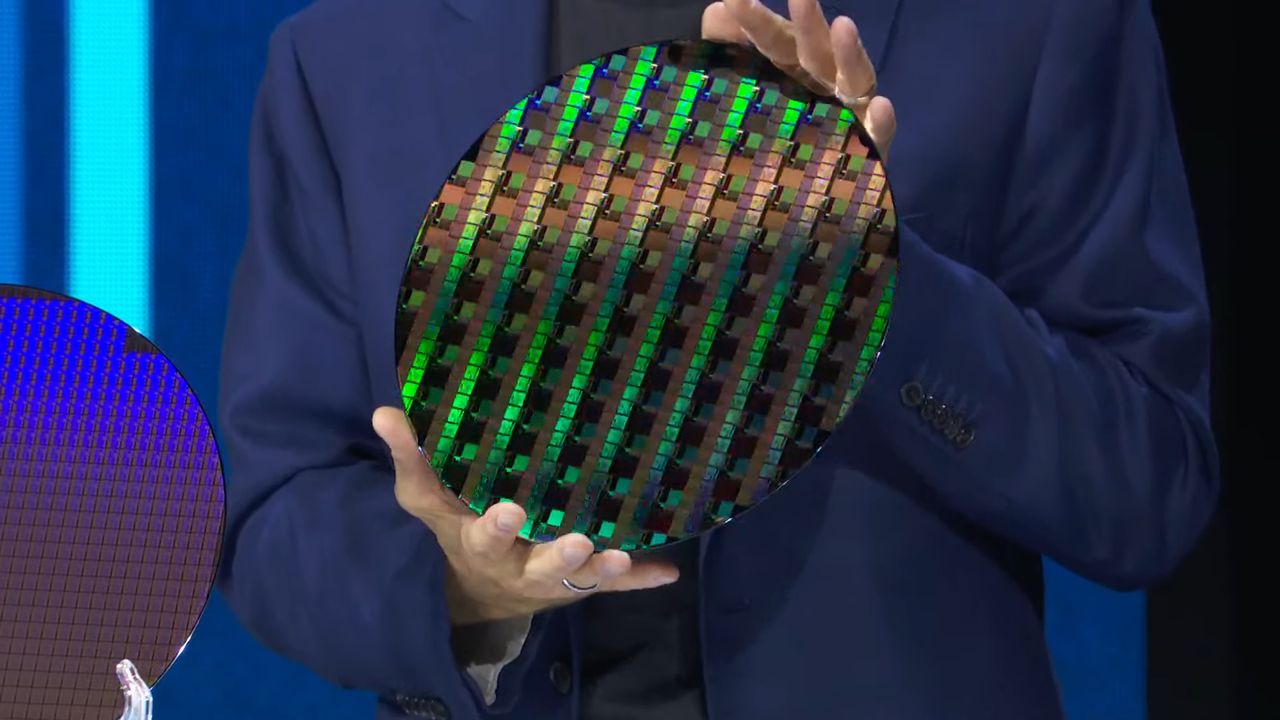

In the constantly evolving landscape of semiconductor manufacturing, advances in process nodes are a decisive factor in chip performance and efficiency. Intel, a long-established player in this sector, has recently provided new details on its 18A-P process node, which it positions as a foundation for future generations of processors. These developments are of particular interest to companies that run intensive workloads, such as those built on Large Language Models (LLMs), and that are evaluating on-premise deployment strategies.

The ability to pack more transistors into ever smaller areas, while also making them faster and more power-efficient, is fundamental to meeting the growing demand for computing power. The 18A-P node is positioned as a significant step in this direction, with direct implications for data center architecture and AI infrastructure strategies.

Technical Details: Performance, Power Consumption, and Thermal Management

The details released by Intel highlight two key metrics for the 18A-P node. The first is a 9% performance increase. For a process node, a gain of this kind typically means higher clock frequencies at the same power, or the same frequency at lower power; it does not by itself increase the work done per clock cycle, which is set by the processor's microarchitecture. Faster transistors still help shorten the execution of complex algorithms and reduce latency in LLM inference and training.
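To put the 9% figure in perspective, the sketch below converts a relative performance gain into throughput and runtime for a fixed workload. It is a best-case estimate under a stated assumption: throughput scales linearly with the gain. That roughly holds for compute-bound jobs but overstates the benefit for memory-bandwidth-bound work, such as much of LLM token generation. The function name and the 1,000 tokens/s baseline are hypothetical.

```python
def apply_perf_gain(baseline_throughput: float, gain: float) -> tuple[float, float]:
    """Best-case effect of a relative performance gain on a fixed workload.

    Assumes (hypothetically) that throughput scales linearly with the gain,
    i.e. a compute-bound job. Returns (new_throughput, runtime_reduction),
    where runtime_reduction is the fraction of wall-clock time saved.
    """
    new_throughput = baseline_throughput * (1.0 + gain)
    # Runtime is proportional to 1 / throughput, so it shrinks by 1 - 1/(1 + gain).
    runtime_reduction = 1.0 - 1.0 / (1.0 + gain)
    return new_throughput, runtime_reduction

# Hypothetical baseline of 1,000 tokens/s combined with the reported 9% gain.
tput, saved = apply_perf_gain(1000.0, 0.09)
print(f"{tput:.0f} tokens/s, runtime down {saved:.1%}")
# prints: 1090 tokens/s, runtime down 8.3%
```

Note that a 9% speedup shortens runtime by about 8.3%, not 9%, because runtime scales with the reciprocal of throughput.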

Equally significant is the 50% improvement in thermal conductivity, which is crucial for managing the heat generated by high-performance processors. Better thermal conductivity lets heat flow out of the die more easily, so chips can run at lower temperatures under the same load or sustain higher performance before reaching thermal limits. Cooler silicon also leaks less current, which lowers power consumption, and lower operating temperatures improve the long-term reliability of components. These factors are vital for the sustainability and operational efficiency of modern data centers.
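As a rough illustration of why conductivity matters, consider one-dimensional heat conduction through a single layer of the heat path. This is a simplified sketch: it assumes the reported 50% figure applies to the conductivity k of a layer with thickness t and area A carrying power P, and it ignores every other thermal resistance between the transistors and the coolant.

```latex
\begin{aligned}
R_{\mathrm{th}} &= \frac{t}{k\,A}, \qquad \Delta T = P\,R_{\mathrm{th}} = \frac{P\,t}{k\,A} \\
k' = 1.5\,k \;&\Rightarrow\; \Delta T' = \frac{P\,t}{1.5\,k\,A} = \frac{\Delta T}{1.5} \approx 0.67\,\Delta T
\end{aligned}
```

At the same dissipated power, the temperature drop across that layer shrinks by about one third; the reduction in overall chip temperature is smaller whenever other layers in the stack contribute significant resistance.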

Implications for On-Premise Deployments and TCO

For organizations that prioritize on-premise deployments, the 18A-P node's improvements offer tangible benefits. Higher performance delivers more computing power per rack unit, increasing infrastructure density. At the same time, lower power consumption and better thermal management contribute directly to a more favorable TCO (Total Cost of Ownership): lower cooling requirements and better energy efficiency reduce operational costs (OpEx), a fundamental consideration for anyone managing large-scale infrastructure.
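The link between power draw and OpEx is straightforward arithmetic. The sketch below estimates the yearly facility energy cost from IT power, PUE (power usage effectiveness, the ratio of total facility power to IT power, which captures cooling overhead), and electricity price. All inputs, including the 10% power reduction, are hypothetical placeholders; Intel's figures as reported in this article do not include a specific power-reduction number.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours

def annual_energy_cost(it_power_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly energy cost of a constant IT load, including facility overhead.

    PUE scales IT power up to total facility power (cooling, power
    delivery, and so on), so cooling costs enter through this multiplier.
    """
    facility_kw = it_power_kw * pue
    return facility_kw * HOURS_PER_YEAR * price_per_kwh

# Hypothetical inputs: 100 kW IT load, PUE 1.4, 0.12 currency units per kWh,
# and an assumed 10% cut in IT power from more efficient silicon.
baseline = annual_energy_cost(100.0, 1.4, 0.12)
improved = annual_energy_cost(100.0 * 0.9, 1.4, 0.12)
print(f"baseline {baseline:,.0f}, improved {improved:,.0f}, saved {baseline - improved:,.0f}")
# prints: baseline 147,168, improved 132,451, saved 14,717
```

Holding PUE constant keeps the example simple; if cooler-running hardware also allowed a lower PUE, the savings would be larger.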

Where data sovereignty and regulatory compliance are absolute priorities, adopting cutting-edge hardware for self-hosted solutions becomes a strategic choice. The ability to run complex AI workloads on internally controlled infrastructure, while benefiting from improved efficiency and performance, strengthens the case for on-premise deployment. For those evaluating these options, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between initial costs (CapEx) and long-term operational benefits.
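A first-pass comparison of CapEx against operational savings can be done with an undiscounted TCO and a simple payback period. This is a minimal sketch, not AI-RADAR's framework: the function names and every figure are hypothetical, and it ignores discounting, depreciation, financing, and hardware refresh cycles.

```python
def simple_tco(capex: float, annual_opex: float, years: int) -> float:
    """Undiscounted total cost of ownership over a fixed horizon."""
    return capex + annual_opex * years

def payback_years(extra_capex: float, annual_savings: float) -> float:
    """Years needed for lower OpEx to recover a higher up-front cost."""
    if annual_savings <= 0:
        return float("inf")  # the premium is never recovered
    return extra_capex / annual_savings

# Hypothetical 5-year comparison: current-generation vs newer, more efficient servers.
years = 5
current = simple_tco(capex=500_000, annual_opex=150_000, years=years)
newer = simple_tco(capex=560_000, annual_opex=130_000, years=years)
print(f"current {current:,}, newer {newer:,}")
print(f"payback {payback_years(560_000 - 500_000, 150_000 - 130_000):.1f} years")
# prints:
# current 1,250,000, newer 1,210,000
# payback 3.0 years
```

In this made-up scenario the newer hardware costs more up front but wins over five years because the extra outlay is recovered in three.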

Future Prospects for AI Infrastructure

Advances in process nodes like Intel's 18A-P are a fundamental pillar for the future development of artificial intelligence. Incremental improvements in performance and energy efficiency accumulate over time, enabling new capabilities and making AI workloads increasingly accessible and sustainable. These developments not only push the limits of what is technically possible but also offer concrete solutions to the operational and financial challenges companies face in deploying advanced AI systems.

The continuous pursuit of greater efficiency and performance in silicon is a key driver of innovation, especially for architectures supporting LLMs. Intel's commitment in this area underscores the importance of a robust and optimized hardware foundation for the success of AI strategies, particularly those requiring maximum control and efficiency in self-hosted and air-gapped environments.
