Intel Expands Overclocking to Core Ultra 200K Plus Processors: Implications for On-Premise Deployments

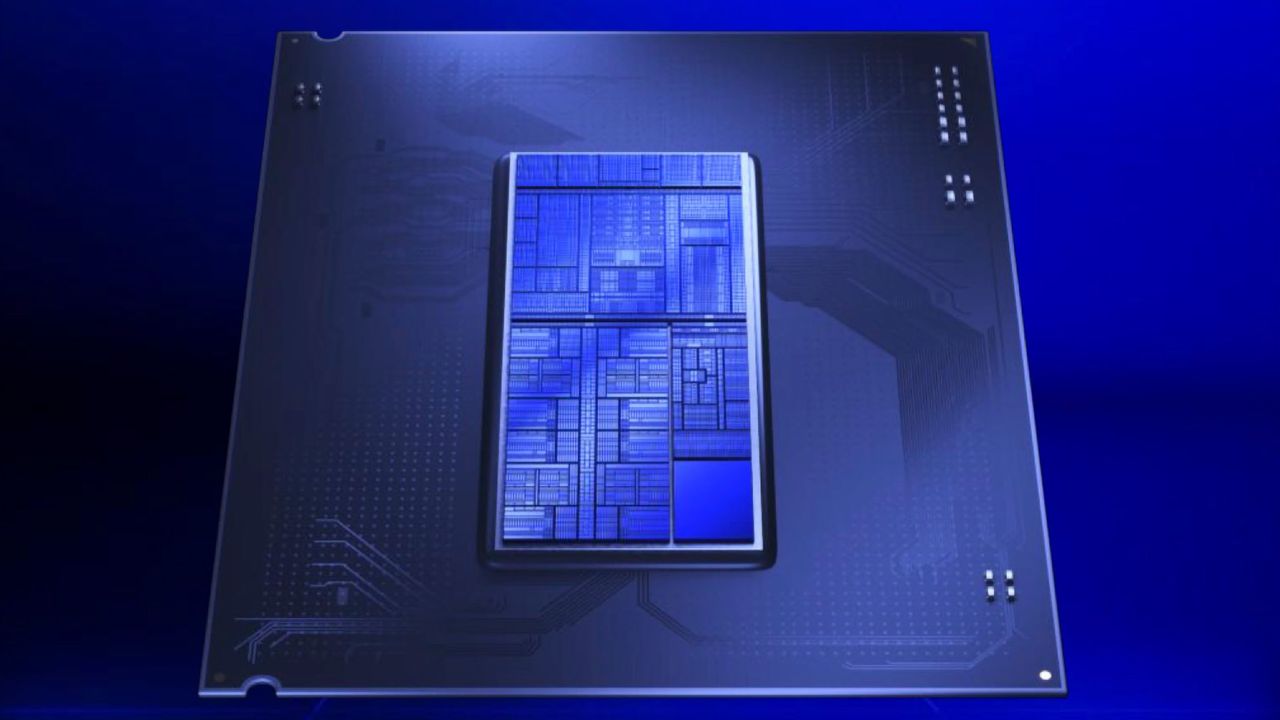

Intel has announced plans to extend overclocking capabilities to a wider range of processors destined for future platforms. The Core Ultra 200K Plus models stand out among them, a clear sign that the company wants to democratize features traditionally reserved for high-end enthusiasts. According to VP Robert Hallock, the move aims to ensure that even "budget builders deserve the same level of features" as professionals with larger budgets.

For AI-RADAR's audience of CTOs, DevOps leads, and infrastructure architects, this Intel strategy raises questions and offers new perspectives. The ability to extract more performance from more accessible hardware can significantly affect decisions about on-premise deployments of AI and Large Language Model (LLM) workloads, where cost-effectiveness and infrastructure control are paramount.

Technical Details and Potential Performance Impact

Overclocking raises a processor's operating frequency beyond factory specifications and can improve computational performance. For Core Ultra 200K Plus processors, opening up these capabilities means users can potentially achieve higher throughput on intensive operations such as LLM inference for smaller models or local data processing. This is particularly relevant in scenarios where a dedicated GPU is not the only option, or not the most cost-effective one.

However, overclocking is not without its trade-offs. It requires careful cooling management and can increase the system's power consumption. System architects will need to balance performance gains with operational stability and thermal requirements, which are crucial aspects in production environments. Choosing hardware that supports overclocking, while offering additional performance potential, also implies greater complexity in configuration and monitoring.
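One way to make this balance concrete is to compare measurements taken at stock and overclocked settings. The sketch below is illustrative only: the function name `evaluate_overclock`, its parameters, and the 90 °C default limit are assumptions for the example, not Intel specifications, and the input numbers would come from your own benchmark and sensor logs.

```python
def evaluate_overclock(stock_throughput, oc_throughput,
                       stock_watts, oc_watts,
                       oc_temps_c, temp_limit_c=90.0):
    """Summarize the measured trade-offs of an overclocked configuration.

    All inputs are measurements supplied by the caller. temp_limit_c is a
    hypothetical threshold; use the limit documented for your actual CPU.
    """
    peak_temp = max(oc_temps_c)
    stock_efficiency = stock_throughput / stock_watts
    oc_efficiency = oc_throughput / oc_watts
    return {
        # Fractional change versus stock (0.10 means +10%).
        "throughput_gain": oc_throughput / stock_throughput - 1.0,
        "power_increase": oc_watts / stock_watts - 1.0,
        "perf_per_watt_change": oc_efficiency / stock_efficiency - 1.0,
        "peak_temp_c": peak_temp,
        "within_thermal_limit": peak_temp <= temp_limit_c,
    }


if __name__ == "__main__":
    # Made-up sample numbers, purely for illustration.
    report = evaluate_overclock(
        stock_throughput=10.0, oc_throughput=11.0,
        stock_watts=100.0, oc_watts=125.0,
        oc_temps_c=[70.0, 85.0, 88.0],
    )
    for key, value in report.items():
        print(f"{key}: {value}")
```

In this made-up case throughput rises while performance per watt falls, which is the kind of result that would need to be weighed against stability and cooling requirements before a production rollout.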

On-Premise Context, TCO, and Data Sovereignty

For organizations prioritizing on-premise deployments, the availability of overclockable CPUs in more accessible price ranges can be a relevant factor in Total Cost of Ownership (TCO) analysis. A faster processor with a modest upfront cost can reduce the need to invest in more expensive hardware or resort to cloud services, keeping data within corporate boundaries. This is fundamental for data sovereignty needs, regulatory compliance, and air-gapped environments.

Optimizing CPU performance for specific workloads can influence the development and deployment pipeline of AI solutions. For example, for the inference of smaller models or data pre-processing tasks, an enhanced CPU can lighten the load on GPUs, optimizing overall resource utilization. It is essential to carefully evaluate the trade-offs between initial hardware cost, long-term power consumption, and the actual performance benefits for one's specific use case.
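The trade-off between upfront cost, long-term power consumption, and performance can be sketched as a simple cost-per-throughput comparison. This is a minimal model under stated assumptions: the `CpuConfig` fields, the helper names, and every figure in the usage example are hypothetical, and a real TCO analysis would also include cooling, maintenance, and depreciation.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class CpuConfig:
    name: str
    hardware_cost: float        # upfront cost, in any currency unit
    avg_power_watts: float      # average power draw under load
    relative_throughput: float  # throughput relative to a baseline of 1.0


def total_cost(cfg, years, price_per_kwh, utilization=1.0):
    """Hardware cost plus energy cost over the given period."""
    energy_kwh = cfg.avg_power_watts / 1000 * HOURS_PER_YEAR * years * utilization
    return cfg.hardware_cost + energy_kwh * price_per_kwh


def cost_per_throughput(cfg, years, price_per_kwh, utilization=1.0):
    """Total cost divided by relative throughput; lower is better."""
    return total_cost(cfg, years, price_per_kwh, utilization) / cfg.relative_throughput


if __name__ == "__main__":
    # Hypothetical figures, not measurements of any real processor.
    stock = CpuConfig("stock", hardware_cost=400.0,
                      avg_power_watts=125.0, relative_throughput=1.0)
    overclocked = CpuConfig("overclocked", hardware_cost=450.0,
                            avg_power_watts=160.0, relative_throughput=1.1)
    for cfg in (stock, overclocked):
        cost = cost_per_throughput(cfg, years=3, price_per_kwh=0.20)
        print(f"{cfg.name}: {cost:.2f} per unit of throughput over 3 years")
```

Varying the electricity price, utilization, and time horizon shows how quickly extra power draw can offset a throughput gain for a given use case.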

Future Prospects and Final Considerations

Intel's initiative to extend overclocking to a wider range of processors, including the Core Ultra 200K Plus, reflects a market trend towards greater hardware flexibility and optimization. For decision-makers evaluating self-hosted vs cloud alternatives for AI/LLM workloads, this evolution offers new options for building more agile and controlled infrastructures.

While overclocking can unlock hidden performance potential, it is crucial to adopt a methodical approach to its implementation. System stability, long-term reliability, and heat management remain primary considerations, especially in enterprise contexts. AI-RADAR continues to explore these trade-offs, providing analytical frameworks on /llm-onpremise to support companies in evaluating the most suitable solutions for their on-premise deployment needs.
