Jensen Huang and the 'Stupid' GPU Analogy

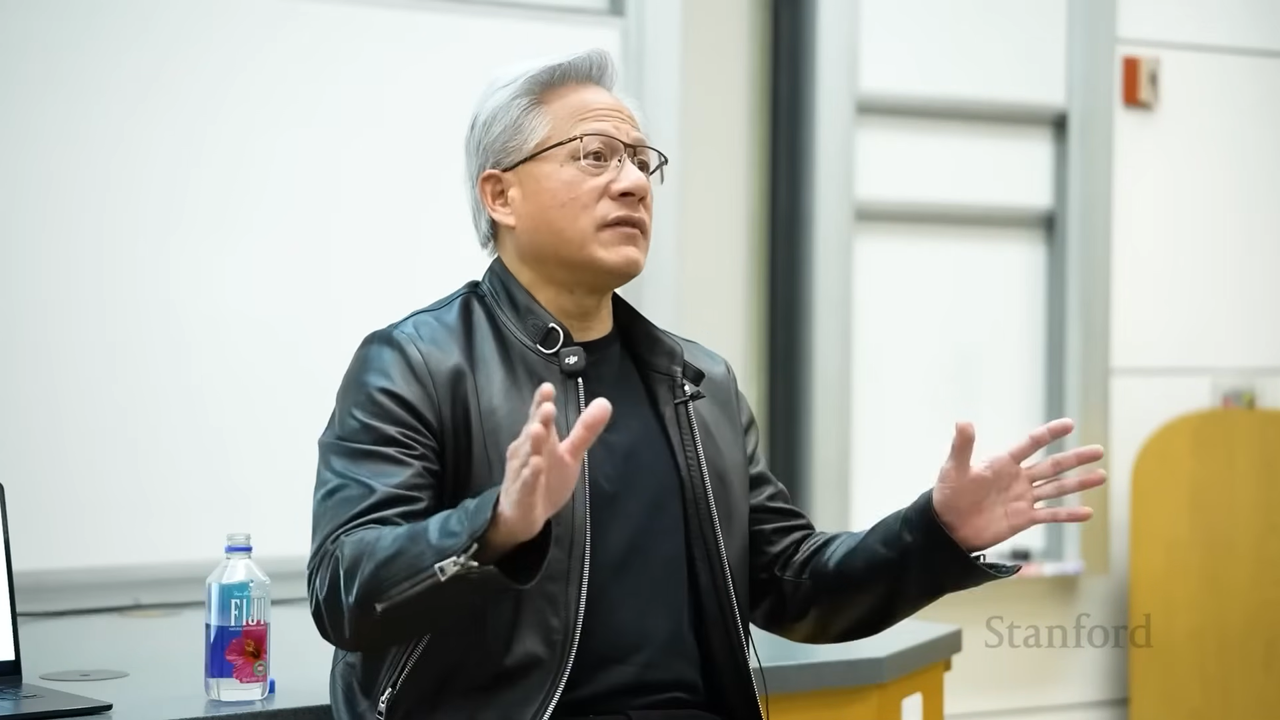

Jensen Huang, CEO of Nvidia, has ignited a significant debate on technology export control policies, calling the analogy comparing GPUs to nuclear weapons 'stupid'. The statement, made during the CS 153 Frontier Systems event at Stanford, underscores a clear stance from Nvidia: governments should allow the sale of GPUs even to countries considered 'adversarial'. This perspective openly challenges the current geopolitical approach that views advanced technologies, particularly those enabling artificial intelligence, as strategic tools of power and, consequently, subject to severe restrictions.

Huang's criticism is set against a global backdrop where technology is increasingly at the center of international tensions. His words highlight the tech industry's frustration with policies that, in his view, hinder progress and the dissemination of innovation, treating computing tools as national security threats rather than engines of universal economic and scientific development.

The Context of Restrictions and Their Impact on GPUs

GPUs, especially high-performance models like Nvidia's A100 and H100, have become the beating heart of modern AI infrastructure. Their ability to process enormous volumes of data in parallel makes them indispensable for the training and inference of large language models (LLMs). This centrality has led several governments to classify GPUs as 'dual-use technologies' with potential civilian and military applications, thereby justifying export restrictions to certain nations.

However, such controls create significant complications for companies seeking to implement self-hosted AI solutions. Limited availability of cutting-edge hardware can increase the Total Cost of Ownership (TCO), prolong deployment times, and force organizations to compromise on performance, for example by using more aggressively quantized models or reduced context windows to fit less powerful hardware. This directly affects a company's ability to handle the VRAM- and throughput-intensive workloads that complex LLMs require.
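To make the quantization trade-off concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights need at different precisions. The function names and the 20% overhead allowance for KV cache and activations are illustrative assumptions, not figures from the article; real overhead depends on context length, batch size, and the serving stack.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 2**30


def fits_in_vram(num_params: float, bits_per_param: int, vram_gib: float,
                 overhead_fraction: float = 0.2) -> bool:
    """Rough check: do the weights plus an assumed overhead fit in VRAM?

    overhead_fraction is a hypothetical allowance for KV cache and
    activations; it is an assumption, not a measured value.
    """
    needed = weight_memory_gib(num_params, bits_per_param) * (1 + overhead_fraction)
    return needed <= vram_gib


if __name__ == "__main__":
    # Compare a 70-billion-parameter model at 16-bit and 4-bit precision.
    for bits in (16, 8, 4):
        gib = weight_memory_gib(70e9, bits)
        print(f"{bits}-bit weights: {gib:.1f} GiB, "
              f"fits in 80 GiB: {fits_in_vram(70e9, bits, 80)}")
```

Under these assumptions, a 70-billion-parameter model does not fit on a single 80 GiB accelerator at 16-bit precision but does at 4-bit, which is the kind of compromise that constrained hardware supply forces.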

GPUs as Tools for Progress or Power?

Huang's vision diverges from this narrative, promoting GPUs as universal catalysts for scientific, economic, and social progress. According to the Nvidia CEO, limiting access to these technologies means stifling global innovation and hindering nations' ability to autonomously develop their AI capabilities. For organizations prioritizing data sovereignty and compliance, access to high-performance hardware is crucial. An air-gapped or self-hosted environment requires not only the ability to process data locally but also to do so with efficiency and scalability.

GPU restrictions can therefore undermine a company's or country's ability to maintain control over its data and develop cutting-edge AI solutions without relying on external cloud infrastructure, which may not meet data residency or security requirements. The ability to fine-tune proprietary models on local hardware, for example, becomes a critical factor for competitiveness and data security.

Future Prospects and Implications for AI Deployment

The debate raised by Huang highlights the growing tension between technological globalization and national security concerns. For CTOs, DevOps leads, and infrastructure architects, these geopolitical dynamics are not abstract; they translate into concrete constraints on planning and executing AI strategies. The choice between an on-premises deployment and a cloud-based solution becomes even more complex when the availability of critical hardware is uncertain.

The TCO assessment must now include not only direct acquisition and operational costs but also risks related to the supply chain and future restrictions. AI-RADAR, with its analytical frameworks on /llm-onpremise, offers tools to evaluate these trade-offs, helping decision-makers navigate an increasingly intricate landscape where the ability to innovate is intrinsically linked to access to fundamental technologies and the freedom of infrastructural choice.
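One simple way to fold supply-chain risk into a TCO estimate is to add an expected-cost term: the probability of a disruption multiplied by what it would cost. The sketch below is a minimal illustration of that idea; the function name, parameters, and example figures are all hypothetical, and it is not the AI-RADAR framework mentioned above.

```python
def risk_adjusted_tco(acquisition_cost: float,
                      annual_operating_cost: float,
                      years: int,
                      disruption_probability: float,
                      disruption_cost: float) -> float:
    """Direct costs plus the expected cost of a supply disruption.

    disruption_probability is the assumed chance, over the whole period,
    that restrictions or shortages force a costly change of plan.
    """
    if not 0.0 <= disruption_probability <= 1.0:
        raise ValueError("disruption_probability must be between 0 and 1")
    direct = acquisition_cost + annual_operating_cost * years
    expected_risk = disruption_probability * disruption_cost
    return direct + expected_risk


if __name__ == "__main__":
    # Hypothetical figures, purely for illustration.
    total = risk_adjusted_tco(
        acquisition_cost=100.0,
        annual_operating_cost=10.0,
        years=3,
        disruption_probability=0.1,
        disruption_cost=50.0,
    )
    print(f"Risk-adjusted TCO: {total:.1f}")
```

A single expected-value term is a deliberate simplification: it ignores timing, discounting, and correlated risks, but it makes explicit how a higher perceived chance of future restrictions raises the effective cost of an on-premises plan.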
