The Need for High-Speed Connectivity for AI

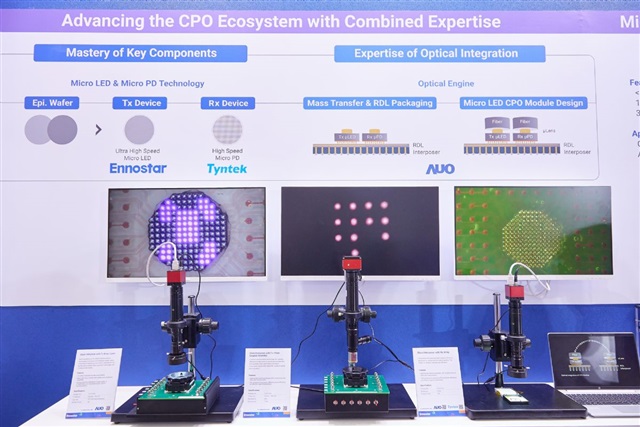

The current technological landscape is dominated by the rapid expansion of artificial intelligence, with Large Language Models (LLMs) among the most demanding applications in terms of computational resources. Managing these workloads depends not only on the processing power of GPUs but also on the network infrastructure's ability to support massive, low-latency data transfer. It is in this context that Taiwanese Micro LED suppliers are showing growing interest in developing optical links specifically for AI data centers.

This trend reflects a widespread understanding in the industry: the efficiency and scalability of AI data centers depend on the speed at which data can travel between components, from GPUs to storage systems. Moving terabytes of data per second has become a critical bottleneck, driving innovation towards more performant and energy-efficient connectivity solutions.

Optical Links: A Response to Bandwidth Challenges

Optical links, which transmit light signals through fibers, offer significant advantages over traditional copper cables, especially in high-density environments like AI data centers. Fiber supports significantly higher throughput over a given distance, allowing much larger data volumes to be transferred simultaneously. This is fundamental for training complex LLMs, where gradients for billions of parameters must be exchanged between hundreds or thousands of GPUs in parallel.
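To make the bandwidth dependence concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes gradients are synchronized with a ring all-reduce, a common pattern in which each GPU sends roughly 2 × (N − 1) / N times the payload size, and it ignores per-message latency, compute overlap, and protocol overhead. The function name, model size, and link speeds are illustrative assumptions, not figures from any supplier.

```python
def ring_allreduce_seconds(num_params, bytes_per_param, num_gpus, link_gbps):
    """Bandwidth-only estimate of one ring all-reduce over the full payload.

    Assumption: every GPU has one link of `link_gbps` gigabits per second
    and latency terms are negligible compared with transfer time.
    """
    if num_gpus < 2:
        return 0.0  # nothing to synchronize with a single GPU
    payload_bytes = num_params * bytes_per_param
    # Each GPU sends (and receives) 2 * (N - 1) / N times the payload.
    traffic_bytes = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    link_bytes_per_second = link_gbps * 1e9 / 8
    return traffic_bytes / link_bytes_per_second


if __name__ == "__main__":
    # Hypothetical scenario: 7 billion parameters, 2 bytes each, 8 GPUs.
    for gbps in (100, 400, 800):
        seconds = ring_allreduce_seconds(7e9, 2, 8, gbps)
        print(f"{gbps} Gb/s per link: about {seconds:.2f} s per synchronization")
```

Under these assumptions the synchronization time scales inversely with link speed, which is why faster interconnects translate so directly into shorter training steps when communication is not hidden behind computation.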

Beyond increased capacity, optical links can help keep end-to-end latency low as clusters spread across racks and rows, a critical factor for real-time inference operations and the efficiency of distributed parallelization frameworks. Their immunity to electromagnetic interference and their ability to cover longer distances with far less signal degradation than copper make them well suited to large-scale data center architectures. The commitment of Micro LED suppliers to this sector suggests an evolution in the key components of these links, aiming for even more efficient and compact solutions.

Implications for On-Premise Deployments and Data Sovereignty

For organizations choosing to implement AI and LLM workloads in self-hosted or on-premise environments, the quality of the network infrastructure is a fundamental pillar. Unlike cloud solutions, where network complexity is abstracted by the provider, an on-premise deployment requires strategic decisions and direct investments in connectivity hardware. Adopting advanced optical links therefore becomes essential to ensure that local infrastructure can compete in terms of performance and scalability with cloud offerings.

These infrastructure choices have a direct impact on the Total Cost of Ownership (TCO), influencing not only initial CapEx but also OpEx related to energy consumption and cooling. An efficient optical network can reduce long-term operational costs, contributing to a more favorable TCO. Furthermore, for companies with stringent data sovereignty requirements, compliance obligations (such as GDPR), or the need to operate in air-gapped environments, a robust and performant on-premise infrastructure, supported by cutting-edge connectivity technologies, is indispensable for maintaining full control over their information assets. For those evaluating on-premise deployments, analyzing these technologies is crucial. AI-RADAR, for example, offers analytical frameworks on /llm-onpremise to support the evaluation of trade-offs between different infrastructure solutions.
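As a rough illustration of how CapEx and energy-related OpEx combine into TCO, the following Python sketch adds up an upfront hardware cost and a yearly energy bill scaled by a cooling overhead factor (PUE). All names and numbers are hypothetical placeholders; a real evaluation would also include maintenance, staffing, depreciation, and financing.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(power_kw, pue, price_per_kwh):
    """Yearly energy bill for equipment drawing `power_kw` continuously.

    `pue` (power usage effectiveness) scales IT power to include cooling
    and other facility overhead; 1.0 would mean no overhead at all.
    """
    return power_kw * pue * HOURS_PER_YEAR * price_per_kwh


def total_cost_of_ownership(capex, power_kw, pue, price_per_kwh, years,
                            other_opex_per_year=0.0):
    """Simplified TCO: upfront CapEx plus undiscounted yearly OpEx."""
    energy = annual_energy_cost(power_kw, pue, price_per_kwh)
    return capex + years * (energy + other_opex_per_year)


if __name__ == "__main__":
    # Hypothetical comparison of two network fabrics over five years.
    option_a = total_cost_of_ownership(100_000, 10, 1.5, 0.10, 5)
    option_b = total_cost_of_ownership(130_000, 6, 1.5, 0.10, 5)
    print(f"Option A (lower CapEx, higher power): {option_a:,.0f}")
    print(f"Option B (higher CapEx, lower power): {option_b:,.0f}")
```

The point of the sketch is only the shape of the trade-off: a more expensive but more power-efficient interconnect can narrow or close the gap over the deployment lifetime, depending on energy prices and cooling overhead.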

Future Prospects and the Evolution of AI Infrastructure

The investment by Taiwanese Micro LED suppliers in optical links for AI data centers is a clear indicator of the direction AI infrastructure is heading. As models become larger and more complex, and training and inference demands intensify, the network's ability to handle data flow will increasingly become a limiting factor. Innovation in this field is therefore not only desirable but necessary to unlock the full potential of future generations of AI.

This focus on more performant and energy-efficient optical solutions is crucial for the evolution of the entire AI ecosystem. Strategic decisions regarding network infrastructure, particularly for on-premise deployments, will require careful evaluation of trade-offs between costs, performance, scalability, and data security and sovereignty requirements. Adopting technologies like optical links represents a fundamental step towards building resilient and future-proof AI data centers.
