Edge AI: Between Cloud and Data Sovereignty

Artificial intelligence is rapidly redefining how we work and live. However, much of this intelligence is still tied to the cloud, accessed through APIs and web interfaces. While effective for many scenarios, this model does not always fit the emerging needs of businesses.

Increasingly, organizations want to bring AI closer to the point of use, on edge devices such as wearables, smart cameras, and other low-power systems. Running AI locally reduces latency, improves privacy, and unlocks new real-time capabilities. It also raises a hard problem: how to run complex models efficiently on hardware with limited memory, compute power, and energy budget. ExecuTorch, an extension of the PyTorch ecosystem, is designed to address exactly this by bringing AI inference directly to the edge.

ExecuTorch on Arm CPUs: Efficiency and Optimization

PyTorch has become a leading framework for training AI models and running inference in the cloud. ExecuTorch extends this ecosystem to enable local AI inference on edge devices. The process starts by exporting a PyTorch model into a lightweight format, the .pte file, which contains both the model weights and a static computation graph. This approach eliminates the need for Python at runtime and removes the dynamic execution overhead that inference does not need.

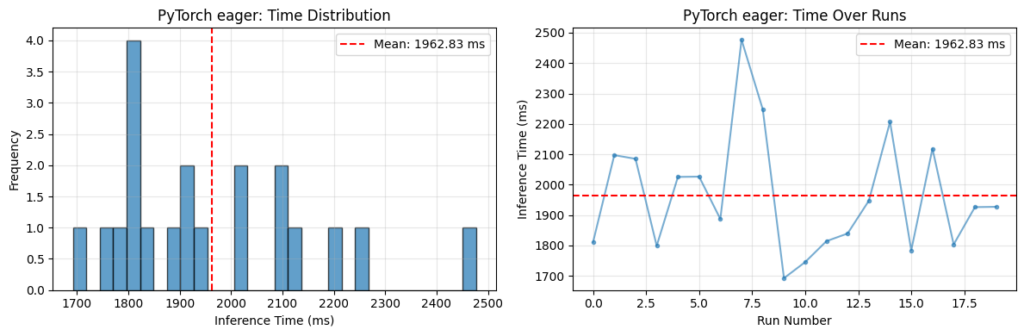

After export comes lowering, where the model graph is transformed into a backend-compatible form. This is where hardware-specific optimization begins. The result is a lightweight, portable artifact with predictable execution, suitable for deployment on resource-constrained systems.

ExecuTorch can improve performance even on devices like the Raspberry Pi 5, which can already run PyTorch models directly. It does so by delegating parts of the model to optimized backends, such as XNNPACK for Arm CPUs. When XNNPACK is enabled, supported operators like convolutions and matrix multiplications run on highly optimized implementations that leverage KleidiAI microkernels and Neon architectural features. Backend selection is key: without an optimized backend like XNNPACK, ExecuTorch might not outperform PyTorch on latency, although it still offers a smaller footprint and better portability.

Tests with an OPT-125M transformer model on a Raspberry Pi 5 showed a latency reduction with ExecuTorch and XNNPACK. Latency rose during sustained runs, which was attributed to thermal effects on the Raspberry Pi 5 CPU without active cooling.
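The difference between short and sustained runs is easy to miss if only an average latency is reported. The following is a minimal measurement sketch, not the harness used in the tests above: `measure_latencies` and `drift_ratio` are hypothetical helper names, and the workload in the usage section is a stand-in that would be replaced by a call into the actual runtime.

```python
import statistics
import time


def measure_latencies(run_once, warmup=5, iterations=50):
    """Time `run_once` and return per-call latencies in milliseconds."""
    for _ in range(warmup):
        run_once()  # warm caches and lazy initialization; not recorded
    latencies_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        run_once()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return latencies_ms


def drift_ratio(latencies_ms, window=10):
    """Median of the last `window` samples divided by the median of the first."""
    if len(latencies_ms) < 2 * window:
        raise ValueError("need at least 2 * window samples")
    early = statistics.median(latencies_ms[:window])
    late = statistics.median(latencies_ms[-window:])
    return late / early


if __name__ == "__main__":
    # Stand-in workload (assumption); replace with a real inference call.
    samples = measure_latencies(lambda: sum(i * i for i in range(10_000)))
    print(f"median latency: {statistics.median(samples):.3f} ms")
    print(f"late/early drift: {drift_ratio(samples):.2f}x")
```

A drift ratio that stays well above 1.0 over a long run suggests that something other than the model, such as thermal throttling, is affecting latency. Medians are used here because they are less sensitive to occasional outliers than means.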

Hardware Acceleration with Arm Ethos-U NPUs and TOSA

To go beyond CPU optimization, models can use the hardware acceleration offered by Arm Ethos-U NPUs, which are often paired with Cortex-A or Cortex-M CPUs. In this scenario, execution becomes heterogeneous: ExecuTorch partitions the model graph, delegating supported subgraphs to the NPU and leaving unsupported operators to run on the CPU. Ethos-U NPUs typically operate on quantized integer models (often INT8), so the model must be quantized before delegation. Quantization uses EthosUQuantizer with a compile_spec specific to the Ethos-U target and follows the PyTorch 2 Export (PT2E) quantization flow.
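To make the idea of an INT8 model concrete, the sketch below shows generic affine quantization of a single floating-point value. It is an illustration of the arithmetic only and is not the EthosUQuantizer implementation; the function names and the example value range are assumptions chosen for this example.

```python
def quant_params(min_val, max_val, qmin=-128, qmax=127):
    """Return (scale, zero_point) mapping [min_val, max_val] onto [qmin, qmax]."""
    # Extend the range to include 0.0 so that zero is represented exactly.
    min_val = min(min_val, 0.0)
    max_val = max(max_val, 0.0)
    scale = (max_val - min_val) / (qmax - qmin)
    if scale == 0.0:
        return 1.0, 0  # degenerate all-zero range
    zero_point = round(qmin - min_val / scale)
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(x, scale, zero_point, qmin=-128, qmax=127):
    """Map a float to an integer in [qmin, qmax], clamping out-of-range values."""
    q = round(x / scale) + zero_point
    return max(qmin, min(qmax, q))


def dequantize(q, scale, zero_point):
    """Map a quantized integer back to an approximate float."""
    return (q - zero_point) * scale


if __name__ == "__main__":
    # Hypothetical activation range observed during calibration.
    scale, zero_point = quant_params(0.0, 2.55)
    for value in (0.0, 0.123, 1.0, 2.55, 10.0):
        q = quantize(value, scale, zero_point)
        print(value, "->", q, "->", dequantize(q, scale, zero_point))
```

Values inside the calibrated range come back with an error of at most half a quantization step, while values outside it are clamped. This is why the range observed during calibration matters for accuracy.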

A key step is lowering the model into TOSA (Tensor Operator Set Architecture), an intermediate representation designed to bridge high-level frameworks and hardware backends. TOSA offers a stable, hardware-agnostic operator set, which simplifies implementation for hardware vendors. Calling the to_edge_transform_and_lower API with EthosUPartitioner serializes the model to TOSA and uses Vela to produce an optimized command stream for NPU execution. Finally, to_executorch(...) packages the result into a .pte file.

Understanding this flow is essential for performance analysis. Efficient delegation results in large, contiguous subgraphs running on the NPU. Unsupported operators can fragment the graph, leading to smaller subgraphs and extra overhead from frequent transitions between CPU and NPU. Tools like Google Model Explorer, with adapters developed by Arm, make it possible to inspect the ExecuTorch graph (.pte), see how it is partitioned across backends, and examine the TOSA representation (.tosa), which gives the visibility needed for optimization decisions.
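The effect of unsupported operators on graph fragmentation can be illustrated with a toy model. The sketch below is a deliberate simplification: it treats the model as a linear sequence of operators rather than a graph, and it does not use the ExecuTorch partitioner. The operator names and the set of supported operators are hypothetical.

```python
from itertools import groupby


def partition(ops, supported):
    """Group consecutive operators into segments assigned to 'npu' or 'cpu'."""

    def target_of(op):
        return "npu" if op in supported else "cpu"

    return [(target, list(group)) for target, group in groupby(ops, key=target_of)]


def count_transitions(segments):
    """Number of hand-offs between CPU and NPU for a list of segments."""
    return max(len(segments) - 1, 0)


if __name__ == "__main__":
    supported = {"conv", "relu", "add"}  # hypothetical NPU-supported operators

    clean = ["conv", "relu", "conv", "relu", "add"]
    fragmented = ["conv", "custom_op", "conv", "custom_op", "add"]

    for name, ops in (("clean", clean), ("fragmented", fragmented)):
        segments = partition(ops, supported)
        print(name, segments, "transitions:", count_transitions(segments))
```

In this toy example the first sequence forms a single NPU segment with no transitions, while two unsupported operators in the second sequence split it into five segments with four hand-offs. The same pattern is what to look for when inspecting a partitioned graph in a visualization tool.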

Implications for On-Premise Deployments and Next Steps

The ability to run AI models efficiently on edge devices has significant implications for companies evaluating on-premise or hybrid deployment strategies. For CTOs, DevOps leads, and infrastructure architects, the ability to maintain control over data, ensure compliance, and operate in air-gapped environments can be a decisive factor. Edge deployments, enabled by solutions like ExecuTorch, reduce cloud dependency and offer greater data sovereignty. They may also yield a more favorable total cost of ownership (TCO) in the long term, once upfront capital expenditure (CapEx) is weighed against ongoing operational expenditure (OpEx).

To help developers become familiar with these concepts, Arm has released a collection of practical Jupyter labs that can be run and modified on the developer's own hardware. The labs offer a concrete entry point for ML developers already comfortable with PyTorch and for embedded engineers building their machine learning foundations. Building models is only half the story; getting them running efficiently at the edge is what matters. ExecuTorch, along with these practical resources, shows how to get started quickly while understanding the underlying concepts. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between self-hosted and cloud solutions, considering aspects such as performance, security, and costs.
