Agentic AI Reshapes Hardware Requirements

Advances in artificial intelligence, particularly agentic AI, are changing the industry's hardware demands. According to DIGITIMES, demand for central processing units (CPUs) is surging, a sign that traditional GPU-centric architectures may not always suffice for these new workloads.

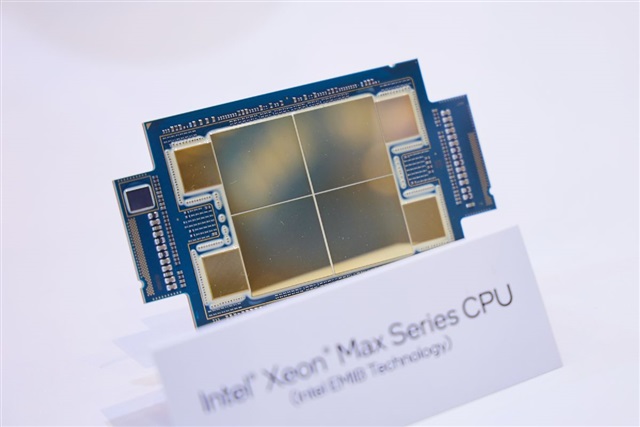

This trend is not limited to general-purpose CPUs; it also extends to makers of application-specific integrated circuits (ASICs) and niche chips. Agentic AI, in which systems plan, reason, and execute complex tasks autonomously, often requires orchestration and sequential processing that CPUs handle efficiently, complementing the role of GPUs in intensive parallel processing.

The Growing Role of Processors and Specialized Chips

While traditional Large Language Models (LLMs) benefit greatly from the parallel computing power of GPUs for both training and inference, agentic AI systems introduce new challenges. These agents often interact with tools, APIs, and knowledge bases, which calls for dynamic memory management, sequential decision-making, and control-flow processing. CPUs are often better suited to these operations, providing the flexibility needed to manage the different phases of an agentic workflow, from planning to execution and monitoring.
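The phases described above (planning, execution, monitoring) can be sketched as a small control loop. This is a minimal illustration, not any particular framework's design: the `TOOLS` registry, the `plan` stub, and the `run_agent` function are hypothetical names, and the planning step that would normally call a GPU-hosted model is replaced by a fixed list.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool registry; names and behaviour are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query}",
    "summarize": lambda text: text[:20],
}


@dataclass
class AgentState:
    goal: str
    history: list[tuple[str, str]] = field(default_factory=list)


def plan(state: AgentState) -> list[tuple[str, str]]:
    """Stand-in for the model call; returns (tool, argument) steps."""
    # An empty argument means "use the previous step's output".
    return [("search", state.goal), ("summarize", "")]


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    """Sequential, branch-heavy orchestration: the CPU-side work."""
    state = AgentState(goal)
    last_output = ""
    for tool_name, arg in plan(state)[:max_steps]:
        tool = TOOLS.get(tool_name)
        if tool is None:
            # Monitoring: stop when the plan names an unknown tool.
            break
        last_output = tool(arg or last_output)
        state.history.append((tool_name, last_output))
    return state
```

Calling `run_agent("cpu demand")` returns a state whose `history` records each tool call in order. Everything in the loop is dictionary lookup, branching, and bookkeeping, which is the kind of work the article attributes to CPUs.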

Concurrently, the increasing demand is stimulating the market for ASICs and niche chips. These components are designed to optimize performance and energy efficiency for specific tasks, offering a significant advantage in terms of Total Cost of Ownership (TCO) for repetitive or highly specialized AI workloads. For companies looking to optimize their on-premise deployments, integrating ASICs or other dedicated accelerators can be a key strategy to balance performance and operational costs, reducing power consumption and physical footprint.

Implications for On-Premise Deployments and Data Sovereignty

For CTOs, DevOps leads, and infrastructure architects, this evolving hardware landscape has direct implications for deployment decisions. Supporting agentic AI pushes infrastructure toward more heterogeneous architectures, where CPUs, GPUs, and specialized chips coexist to maximize overall efficiency. TCO evaluation becomes even more critical: it must account not only for the initial cost (CapEx) but also for operational expenses (OpEx) related to power, cooling, and maintenance of a diversified hardware mix.
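As a rough sketch of that TCO comparison, the function below adds CapEx to a simple OpEx estimate. The `HardwareOption` fields, the `tco` function, and the use of a PUE multiplier to approximate cooling overhead are assumptions made for illustration; real evaluations would include more cost factors.

```python
from dataclasses import dataclass


@dataclass
class HardwareOption:
    name: str
    capex: float               # purchase price, in one currency
    power_kw: float            # assumed average draw under load, in kW
    annual_maintenance: float  # assumed yearly support cost


def tco(option: HardwareOption, years: float,
        price_per_kwh: float, pue: float = 1.5) -> float:
    """Total cost of ownership = CapEx + OpEx over `years`.

    `pue` (power usage effectiveness) scales IT power to include
    cooling and facility overhead; 1.5 is an assumed default.
    """
    hours = years * 365 * 24
    energy_cost = option.power_kw * pue * hours * price_per_kwh
    opex = energy_cost + option.annual_maintenance * years
    return option.capex + opex
```

Running `tco` over each candidate configuration with the same `years` and `price_per_kwh` gives comparable totals, which makes it easier to see when a lower-power accelerator offsets a higher purchase price.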

On-premise deployments, often chosen for reasons of data sovereignty, compliance, and security in air-gapped environments, particularly benefit from this hardware flexibility. The ability to select and combine specific components allows organizations to build custom AI infrastructures that precisely meet their performance, security, and control requirements, without relying exclusively on standardized cloud offerings. For those evaluating on-premise deployments, analytical frameworks are available at /llm-onpremise to help assess the trade-offs between different hardware configurations and deployment strategies.

Future Prospects and Hardware Acquisition Strategies

The future of AI workloads, particularly those related to agentic AI, suggests an increasingly modular and adaptable infrastructure. Hardware acquisition decisions can no longer be based on a monolithic approach but will require in-depth analysis of the specific needs of each component of the AI system. This includes evaluating VRAM for larger models, throughput capacity for inference, and latency for real-time interactions, as well as the robustness of CPUs for orchestration and data management.

Companies will need to carefully consider the trade-offs between the flexibility of general-purpose CPUs, the parallel computing power of GPUs, and the specific efficiency of ASICs. The goal is to build a resilient and economically sustainable AI pipeline, capable of evolving with the increasing complexities of artificial intelligence. This strategic approach is fundamental to ensure that self-hosted infrastructures can effectively support future innovations while maintaining control over data and operational costs.
