The Surge in Demand for AI Infrastructure

The rapid rise of artificial intelligence, particularly Large Language Models (LLMs), is redefining global infrastructure requirements. The shift is not only about computing power: it extends to every critical component that keeps complex AI systems running. One sector seeing significant growth is power interconnects, the components that ensure stable and reliable power delivery to dedicated AI hardware.

Against this backdrop, companies such as BizLink and JPC are steering their strategies toward the most demanding market segments. Their focus is on high-end solutions designed to meet the stringent requirements of new generations of AI hardware, where energy efficiency and the ability to handle high loads are non-negotiable.

AI's Technical Demands: Beyond Simple Power

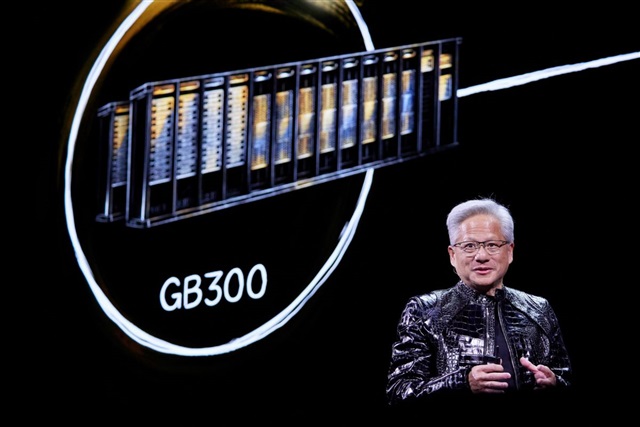

Modern AI architectures, especially those used for large-scale LLM training and inference, require an unprecedented amount of power. Data-center GPUs such as NVIDIA's A100 and H100 series, with their large VRAM and high processing capabilities, draw considerable power. This creates a critical need for power interconnects that can not only carry such loads but do so efficiently, minimizing losses and keeping the system stable.

The design of these interconnects must account for heat dissipation, electrical resistance, and durability, all of which are crucial for preventing failures and optimizing the Total Cost of Ownership (TCO) of the infrastructure. For on-premise deployments, where every component is under the organization's direct control, the quality and reliability of these solutions matter even more, because they directly influence the performance and longevity of the entire stack.
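The link between electrical resistance, heat, and efficiency can be made concrete with the standard resistive-loss relation P_loss = I² · R, where I = P_load / V. The sketch below is only illustrative: the function names, the 1200 W load, the 12 V and 48 V bus voltages, and the 1 mΩ round-trip resistance are assumptions chosen for the example, not figures from BizLink, JPC, or any GPU datasheet.

```python
def conductor_loss_watts(load_watts: float, bus_volts: float, round_trip_ohms: float) -> float:
    """Resistive (I^2 * R) loss dissipated as heat in a DC power interconnect."""
    if bus_volts <= 0:
        raise ValueError("bus_volts must be positive")
    current_amps = load_watts / bus_volts  # I = P / V
    return current_amps ** 2 * round_trip_ohms  # P_loss = I^2 * R


def delivery_efficiency(load_watts: float, bus_volts: float, round_trip_ohms: float) -> float:
    """Fraction of the supplied power that actually reaches the load."""
    loss = conductor_loss_watts(load_watts, bus_volts, round_trip_ohms)
    return load_watts / (load_watts + loss)


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    load = 1200.0        # watts drawn by the accelerator
    resistance = 0.001   # ohms, cable plus connectors, round trip
    for volts in (12.0, 48.0):
        loss = conductor_loss_watts(load, volts, resistance)
        eff = delivery_efficiency(load, volts, resistance)
        print(f"{volts:>4.0f} V bus: loss = {loss:.3f} W, efficiency = {eff:.4%}")
```

Because the loss scales with the square of the current, delivering the same load at four times the voltage cuts the resistive loss by a factor of sixteen under these assumptions, which is one reason low-resistance conductors and connectors matter so much at high loads.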

Implications for On-Premise Deployments and Data Sovereignty

The choice of high-quality power interconnects is a decisive factor for organizations that opt for a self-hosted or air-gapped approach to their AI workloads. In these contexts, the ability to scale infrastructure, sustain high throughput, and ensure data sovereignty depends largely on the robustness and efficiency of the underlying hardware. High-end solutions from specialists like BizLink and JPC address this need, enabling data centers and computing clusters that can handle intensive training and real-time inference reliably.

For CTOs and infrastructure architects, evaluating these components goes beyond initial cost. It also covers the impact on energy consumption, ease of maintenance, and overall system resilience. Granular control over every aspect of the infrastructure, from the GPU to the power cable, is a competitive advantage for those who must comply with strict privacy regulations or operate in environments with high security requirements. For companies evaluating on-premise deployments, AI-RADAR offers analytical frameworks at /llm-onpremise for weighing the trade-offs between control, performance, and TCO.
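One way to look past initial cost is to convert interconnect losses into a yearly energy bill and a payback period. The following is a rough sketch under stated assumptions: the function names, the electricity price, the wattage saved, and the price premium are all hypothetical placeholders, and a real TCO model would also need to cover cooling, maintenance, and downtime.

```python
HOURS_PER_YEAR = 24 * 365


def annual_loss_cost(loss_watts: float, price_per_kwh: float, utilization: float = 1.0) -> float:
    """Yearly cost of power that is dissipated in interconnects instead of reaching the load."""
    kwh_per_year = loss_watts / 1000.0 * HOURS_PER_YEAR * utilization
    return kwh_per_year * price_per_kwh


def payback_years(extra_cost: float, watts_saved: float, price_per_kwh: float,
                  utilization: float = 1.0) -> float:
    """Years until a pricier, lower-loss interconnect pays for itself in energy savings."""
    yearly_saving = annual_loss_cost(watts_saved, price_per_kwh, utilization)
    if yearly_saving <= 0:
        raise ValueError("no energy saving, so the premium never pays back")
    return extra_cost / yearly_saving


if __name__ == "__main__":
    # Hypothetical rack-level figures, for illustration only.
    saved = 100.0    # watts of loss avoided per rack
    price = 0.10     # currency units per kWh
    premium = 175.2  # extra purchase cost per rack
    print(f"Yearly saving: {annual_loss_cost(saved, price):.2f}")
    print(f"Payback: {payback_years(premium, saved, price):.1f} years")
```

The `utilization` parameter is included because a cluster that sits idle part of the time recovers the premium more slowly than one running training jobs around the clock.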

The Future of AI Infrastructure: Efficiency and Reliability

The evolution of the power interconnect market reflects the maturation of the AI ecosystem. As LLMs become more sophisticated and AI applications spread into critical sectors, demand for infrastructure components that can sustain increasingly intense workloads will keep growing. The specialization of players like BizLink and JPC in high-end solutions highlights a clear industry trend: investment in quality and reliability is fundamental to unlocking AI's full potential.

This trend underscores the importance of meticulous infrastructure planning, especially for those considering on-premise deployments. Hardware decisions, including seemingly minor components like power interconnects, have a direct impact on TCO, performance, and an organization's ability to maintain control over its data and models.
