The AI Infrastructure Wave: Taiwan at the Heart of the Global Supply Chain

Taiwan's technology industry finds itself at the center of an unprecedented growth wave, driven by the global surge in demand for dedicated artificial intelligence infrastructure. This phenomenon, extending from the production of advanced substrates to the assembly of complete servers, underscores the island's strategic position as a crucial hub in the global technology supply chain. The push towards AI, particularly Large Language Models (LLMs), is redefining infrastructure priorities for businesses of all sizes.

The growing adoption of AI solutions, both cloud-based and on-premise, requires an increasingly powerful and specialized hardware foundation. This demand extends beyond processing chips to a complex ecosystem of components needed to build systems that can handle the extreme computational requirements of training and inference for advanced AI models.

The Core of On-Premise AI Infrastructure

For organizations choosing to deploy LLMs on-premise, hardware infrastructure represents the fundamental pillar. Components such as high-performance GPUs, equipped with ample VRAM and high-speed connectivity, are indispensable for managing the size and complexity of these models. Processing capability, throughput, and latency become critical metrics in the selection of silicon and system architecture.
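A common first check when sizing VRAM is how much memory the model weights alone occupy: parameter count multiplied by bytes per parameter at the chosen precision. The sketch below assumes that simple rule; the helper name `weight_memory_gb` and the 70B-parameter example size are illustrative, and the estimate deliberately ignores KV cache, activations, and framework overhead, which raise real requirements further.

```python
# Hypothetical helper: rough lower bound on GPU memory needed just to hold model weights.
# It ignores KV cache, activations, and framework overhead, which all add to this figure.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Return memory (GB, where 1 GB = 1e9 bytes) occupied by the weights alone."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Illustrative model size only; not tied to any specific product.
    for precision in ("fp16", "int8", "int4"):
        gb = weight_memory_gb(70e9, precision)
        print(f"70B parameters @ {precision}: ~{gb:.0f} GB for weights alone")
```

Halving the bytes per parameter halves the weight footprint, which is why quantization is often weighed against GPU count when planning an on-premise deployment.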

A self-hosted deployment offers direct control over data and the operating environment, a crucial aspect for sectors with stringent compliance and data sovereignty requirements. However, this choice requires a careful evaluation of the Total Cost of Ownership (TCO), which includes not only the initial capital expenditure (CapEx) for hardware but also operational expenses (OpEx) for power, cooling, and maintenance. A robust supply chain, like Taiwan's, is therefore vital to ensure access to these essential components.
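The TCO described above can be expressed as CapEx plus cumulative OpEx over the planning horizon. The following is a minimal, undiscounted sketch under that assumption; the class `OnPremCosts` and all example figures are hypothetical placeholders, not market data, and a real analysis would also account for depreciation, financing, and hardware refresh cycles.

```python
from dataclasses import dataclass


@dataclass
class OnPremCosts:
    """Hypothetical cost inputs for a self-hosted deployment (placeholder values)."""
    capex: float                 # up-front hardware purchase (servers, GPUs, networking)
    power_per_year: float        # electricity
    cooling_per_year: float      # cooling
    maintenance_per_year: float  # support contracts, spare parts, staff time

    def opex_per_year(self) -> float:
        """Annual operating expenses: power + cooling + maintenance."""
        return self.power_per_year + self.cooling_per_year + self.maintenance_per_year

    def tco(self, years: int) -> float:
        """Simple undiscounted TCO: CapEx plus cumulative OpEx over `years`."""
        if years < 0:
            raise ValueError("years must be non-negative")
        return self.capex + self.opex_per_year() * years


if __name__ == "__main__":
    costs = OnPremCosts(capex=300_000, power_per_year=40_000,
                        cooling_per_year=20_000, maintenance_per_year=30_000)
    print(f"3-year TCO: {costs.tco(3):,.0f}")  # 3-year TCO: 570,000
```

Keeping OpEx as separate line items makes it easy to see which component (for example, power under sustained GPU load) dominates over a multi-year horizon.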

From Production to Deployment: Challenges for Enterprises

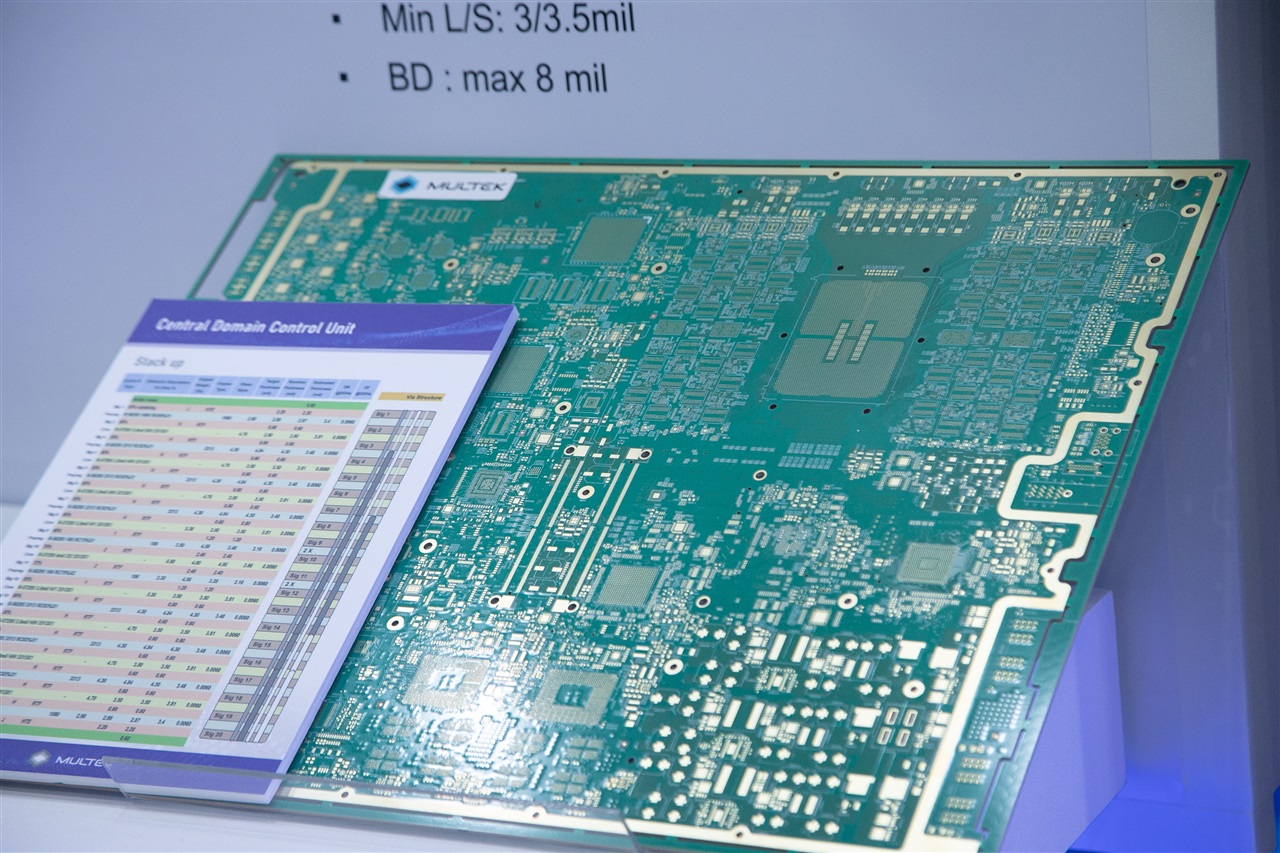

The supply chain, from substrates to servers, is complex and interconnected. Substrates, fundamental to chip packaging, are a critical bottleneck: their production requires advanced technologies and significant investment. Taiwan's ability to supply these components, along with memory modules, motherboards, and complete server systems, is a key enabler of the global expansion of AI.

For companies considering an on-premise deployment, the availability and cost of these hardware components are decisive factors. The choice between the initial investment in bare metal infrastructure and the adoption of cloud services depends on an in-depth analysis of anticipated workloads, security needs, and long-term TCO projections. The ability to scale infrastructure according to the evolving needs of LLMs requires strategic planning and a deep understanding of hardware constraints.

Future Prospects and Data Sovereignty

The strategic importance of the Taiwanese supply chain for AI infrastructure is set to grow, in parallel with the evolution and widespread adoption of LLMs. The demand for solutions that guarantee data sovereignty and the ability to operate in air-gapped environments is pushing many organizations towards self-hosted architectures. This approach not only addresses security and compliance needs but can also offer TCO advantages for predictable, long-term workloads.
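The claim that self-hosting can pay off for predictable, long-term workloads can be checked with a break-even calculation: cumulative on-premise cost is CapEx plus monthly OpEx times the number of months, while cumulative cloud cost is the monthly cloud bill times the number of months, so the two meet at CapEx divided by (cloud cost minus on-premise OpEx). The sketch below assumes steady, constant monthly usage; the function name and all figures are hypothetical.

```python
import math


def break_even_months(capex: float, onprem_opex_per_month: float,
                      cloud_cost_per_month: float) -> float:
    """Months after which cumulative cloud spend exceeds on-prem CapEx + OpEx.

    Assumes constant monthly costs (a steady, predictable workload).
    Returns math.inf if the cloud is never more expensive per month.
    """
    monthly_saving = cloud_cost_per_month - onprem_opex_per_month
    if monthly_saving <= 0:
        return math.inf
    return capex / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    months = break_even_months(capex=300_000, onprem_opex_per_month=7_500,
                               cloud_cost_per_month=20_000)
    print(f"Break-even after {months:.1f} months")  # Break-even after 24.0 months
```

If utilization is bursty rather than steady, the constant-cost assumption breaks down and pay-as-you-go cloud pricing often compares more favorably.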

For those evaluating on-premise deployments, the choice involves complex trade-offs between flexibility, cost, and control. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these aspects, providing tools for informed decisions. The capacity of an industry like Taiwan's to sustain this global demand will be crucial for the future of artificial intelligence, enabling companies to build the digital foundations necessary for innovation and the protection of their information assets.
