The AI Wave Reshapes the Memory Market: A New Chinese Player Emerges

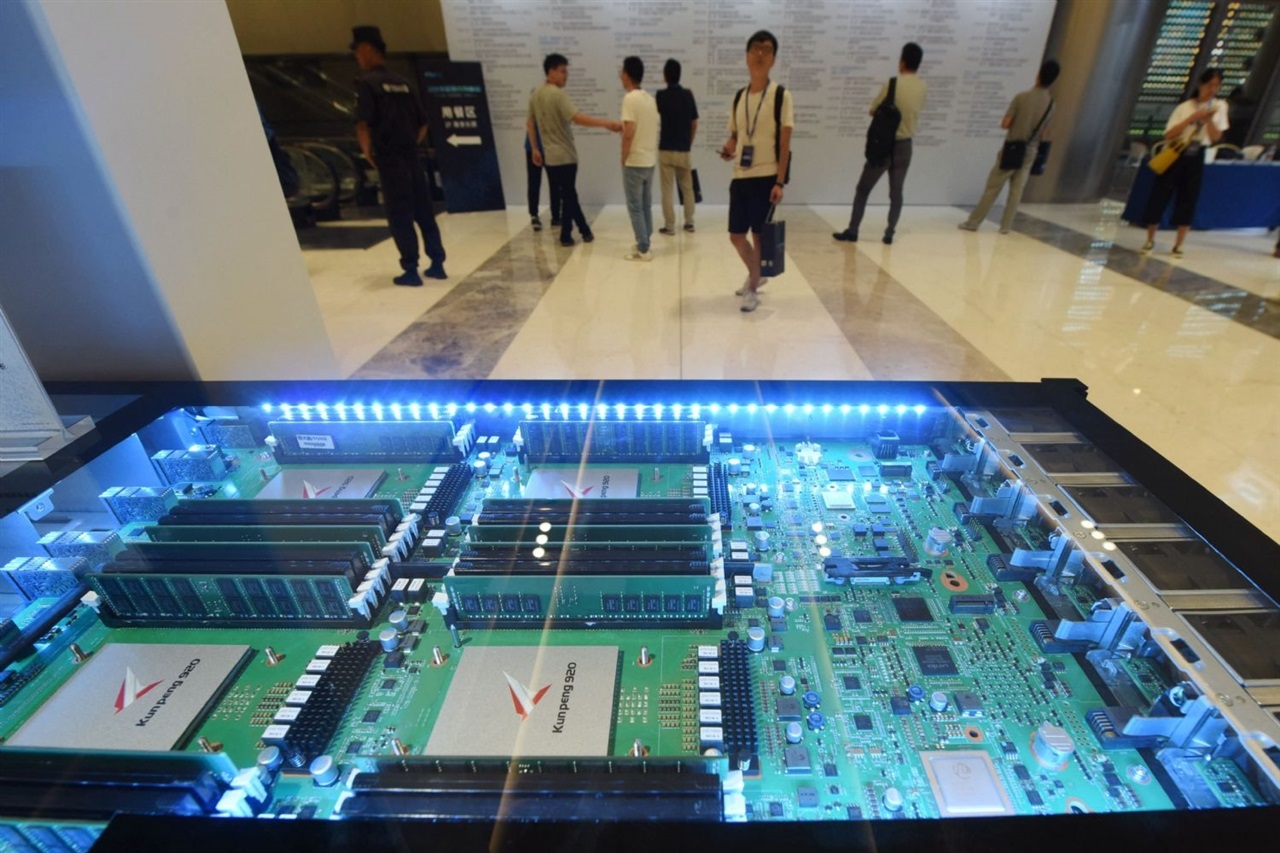

The global artificial intelligence industry is driving a structural reorganization of key technology sectors, and memory is among the most affected. Growing demand for compute and data storage capacity to develop and deploy large language models (LLMs) and other AI applications is attracting new investment and new entrants. Against this backdrop, a Chinese conglomerate has announced its entry into the memory sector, a move that underscores the strategic importance of this segment for the future of AI.

This initiative is not an isolated case but reflects a broader trend: companies are recognizing the critical value of more direct control over the hardware supply chain. AI, with its extreme computational demands, is transforming investment priorities and market strategies, making high-performance memory an indispensable component for any modern infrastructure.

The Crucial Role of Memory in the AI Era

To understand the significance of this entry, it helps to look at the role of memory in AI workloads. Increasingly complex LLMs require large amounts of VRAM (Video RAM) and very high memory bandwidth to operate efficiently. In both training, where billions of parameters are updated, and inference, where the model generates responses in real time, memory speed and capacity are limiting factors.

Components such as HBM (High Bandwidth Memory) have become essential for latest-generation GPUs because they provide very high data throughput between the processor and memory. This is crucial for reducing latency and increasing the number of tokens processed per second, both key metrics for AI system performance. For companies evaluating self-hosted deployments, the availability and specifications of this memory translate directly into operating costs and scalability.
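The link between memory capacity, bandwidth, and tokens per second can be illustrated with a rough back-of-the-envelope sketch. It assumes a common simplification that is not taken from the article: during single-stream decoding, every generated token requires reading all model weights from memory once, so bandwidth divided by weight size gives an optimistic upper bound on throughput. The function names and all numbers below are hypothetical placeholders, not measurements of any real device.

```python
def weight_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed for the model weights alone, in GB.

    Ignores activations, KV cache, and optimizer state, which add to the
    real footprint.
    """
    return num_params * bytes_per_param / 1e9


def max_decode_tokens_per_s(bandwidth_gb_s: float, footprint_gb: float) -> float:
    """Optimistic upper bound on single-stream decode throughput.

    Assumption: each generated token reads every weight from memory once,
    so throughput cannot exceed bandwidth / weight size.
    """
    return bandwidth_gb_s / footprint_gb


if __name__ == "__main__":
    # Hypothetical model: 7 billion parameters stored in 16-bit precision.
    footprint = weight_footprint_gb(num_params=7e9, bytes_per_param=2)

    # Hypothetical memory bandwidth figures, chosen only for illustration.
    for bandwidth in (500.0, 1000.0, 3000.0):
        bound = max_decode_tokens_per_s(bandwidth, footprint)
        print(f"{bandwidth:>6.0f} GB/s -> at most {bound:.1f} tokens/s "
              f"for {footprint:.0f} GB of weights")
```

Under this simplified model the bound scales linearly with bandwidth, which is one way to see why higher-bandwidth memory matters for inference. Real throughput also depends on batching, KV cache traffic, and compute limits.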

Implications for the Market and On-Premise Deployments

The entry of a new player into the memory sector can have several implications for the global market. It could increase competition, stimulate innovation, and potentially influence the pricing and availability of critical components. For CTOs, DevOps leads, and infrastructure architects evaluating self-hosted alternatives versus the cloud for AI/LLM workloads, these market dynamics are of primary importance.

Data sovereignty, regulatory compliance, and the need for air-gapped environments often push organizations towards on-premise solutions. In these contexts, access to high-performance memory at competitive costs is a key factor in calculating the TCO (Total Cost of Ownership). A more diversified and competitive memory market could offer more options and flexibility, allowing companies to optimize their AI pipelines with hardware better suited to their specific needs and constraints. For those evaluating on-premise deployments, analytical frameworks are available at /llm-onpremise that can help assess these trade-offs.
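As a minimal sketch of how hardware cost enters a TCO comparison, the following example contrasts a hypothetical on-premise purchase with a hypothetical hourly cloud rate and computes a break-even point. The cost model (one up-front hardware cost plus a flat yearly operating cost) is a deliberate simplification, and every name and figure is an illustrative assumption rather than data from the article or from any vendor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OnPremCost:
    hardware_capex: float  # up-front hardware cost (memory price is part of this)
    yearly_opex: float     # power, cooling, staff, maintenance per year

    def total(self, years: float) -> float:
        """Total on-premise cost after the given number of years."""
        return self.hardware_capex + self.yearly_opex * years


def cloud_total(hourly_rate: float, hours_per_year: float, years: float) -> float:
    """Total cloud cost for the same period at a flat hourly rate."""
    return hourly_rate * hours_per_year * years


def break_even_years(on_prem: OnPremCost, hourly_rate: float,
                     hours_per_year: float) -> Optional[float]:
    """Years after which on-premise becomes cheaper than cloud.

    Returns None when cloud is never more expensive per year than the
    on-premise operating cost, so the hardware is never paid back.
    """
    yearly_saving = hourly_rate * hours_per_year - on_prem.yearly_opex
    if yearly_saving <= 0:
        return None
    return on_prem.hardware_capex / yearly_saving


if __name__ == "__main__":
    # All figures are hypothetical placeholders in an arbitrary currency.
    server = OnPremCost(hardware_capex=100_000.0, yearly_opex=20_000.0)
    years = break_even_years(server, hourly_rate=10.0, hours_per_year=8_000.0)
    print(f"Break-even after {years:.2f} years")
    print(f"3-year on-prem total: {server.total(3):,.0f}")
    print(f"3-year cloud total:   {cloud_total(10.0, 8_000.0, 3):,.0f}")
```

In this simplified model, a lower hardware price shortens the break-even period proportionally, which is where a more competitive memory market would show up in the calculation.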

Future Prospects and Challenges

The expansion into the memory sector, driven by AI, is a clear sign of the transformation underway in the technology industry. Demand for faster, denser, and more efficient memory is expected to keep growing, fueling research into new architectures and materials. However, new entrants also face significant challenges, including manufacturing complexity, the need for massive R&D investment, and the management of global supply chains.

The competitive landscape is evolving rapidly, with AI acting as a catalyst for innovation at every layer of the technology stack, from silicon to software. Strategic decisions made today in the memory sector will have a lasting impact on companies' ability to implement and scale their artificial intelligence solutions, defining the contours of the next digital era.
