The Growing Opposition to Data Centers: An Obstacle for AI?

The rapid expansion of artificial intelligence and Large Language Models (LLMs) is fueling an unprecedented demand for compute capacity, prompting tech companies to invest heavily in new infrastructure. However, this race for "compute" is meeting growing public resistance. In the United States, a recent survey reveals that 70% of Americans oppose the construction of data centers near their homes. The figure is significant: it makes data centers less popular than nuclear power plants in terms of local acceptance, a clear sign that public sentiment has shifted.

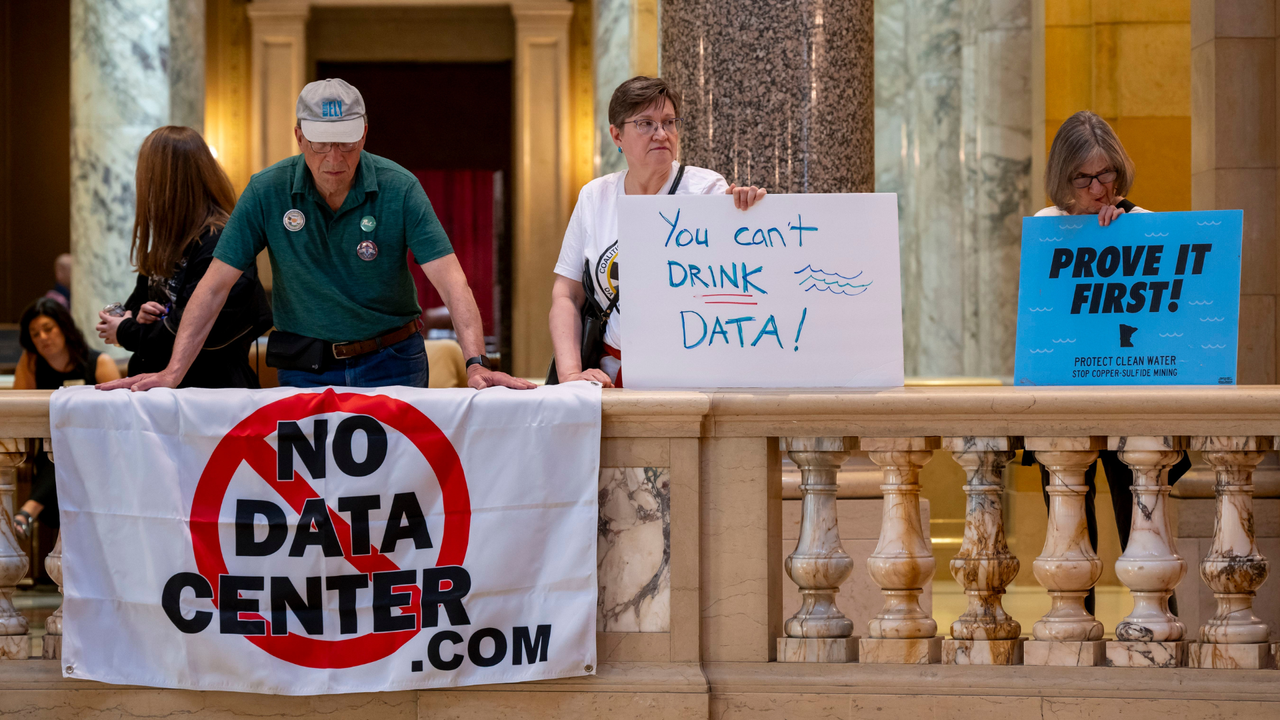

The opposition is not merely generic; it is specifically intensifying against AI infrastructure. Rallies, such as one advocating for a data center moratorium, highlight widespread discontent. Concerns range from environmental impact, linked to energy and water consumption, to noise generated by cooling systems, and the perception of excessive industrialization in residential areas. This scenario creates new challenges for companies seeking to expand their processing capabilities to support the development and deployment of advanced AI systems.

AI's Demands and Their Infrastructural Impact

Large Language Models, in particular, require enormous computational power for both training and inference. This translates into a need for arrays of high-performance GPUs, such as NVIDIA H100s or A100s, with large amounts of VRAM and high memory bandwidth. Such configurations generate considerable heat, which in turn necessitates complex, energy-intensive cooling systems. A single data center can house thousands of these units, placing a significant load on the local power grid and on water resources, since water is often used for cooling.
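To give a sense of the scale involved, the following is a rough back-of-the-envelope sketch, not a sizing method from the survey or from any operator. It assumes 700 W per GPU (the maximum power NVIDIA lists for the H100 SXM), while the server overhead factor, the power usage effectiveness (PUE) value, and the function names are illustrative assumptions chosen for this example.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def facility_power_mw(gpu_count, gpu_watts=700.0, server_overhead=1.5, pue=1.3):
    """Estimate total facility power draw in megawatts.

    gpu_watts:       per-GPU power; 700 W is the H100 SXM maximum (assumed load).
    server_overhead: multiplier for CPUs, memory, and networking (hypothetical value).
    pue:             power usage effectiveness, i.e. facility power divided by
                     IT power; covers cooling and other losses (hypothetical value).
    """
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    it_load_watts = gpu_count * gpu_watts * server_overhead
    return it_load_watts * pue / 1_000_000


def annual_energy_mwh(power_mw, utilization=1.0):
    """Convert a steady power draw into energy consumed over one year."""
    return power_mw * HOURS_PER_YEAR * utilization


if __name__ == "__main__":
    power = facility_power_mw(10_000)
    print(f"Estimated facility power: {power:.2f} MW")
    print(f"Estimated annual energy: {annual_energy_mwh(power):,.0f} MWh")
```

Under these assumptions, a 10,000-GPU facility would draw roughly 13.65 MW continuously. Real figures depend heavily on the hardware mix, utilization, and cooling design, so the result should be read as an order of magnitude only.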

The race by tech companies to acquire more "compute" is a direct response to these demands. However, the construction and expansion of these facilities are not simple processes. They require large tracts of land, access to robust energy infrastructure, and, increasingly, face intense scrutiny from local communities. The challenge is to balance the need for technological innovation with environmental sustainability and social acceptance, an equilibrium that is becoming increasingly precarious.

Implications for On-Premise Deployment and Data Sovereignty

For organizations evaluating the deployment of LLMs and other AI solutions, the growing opposition to data centers introduces new complexities. The choice between cloud and self-hosted (on-premise) solutions becomes even more critical. While the cloud offers scalability and flexibility, on-premise solutions provide greater control over data sovereignty, compliance, and security, all of which are fundamental for sectors like finance or healthcare. However, building on-premise infrastructure requires not only a significant upfront investment (CapEx) but also managing challenges related to site selection, obtaining permits, and integrating with local communities.

The Total Cost of Ownership (TCO) of an on-premise deployment must now consider not only hardware, energy, and personnel costs but also potential delays and additional expenses arising from public opposition or moratoriums. For those evaluating these alternatives, AI-RADAR offers analytical frameworks on /llm-onpremise to understand the trade-offs between control, performance, and operational costs in a context of increasing public scrutiny. The ability to ensure air-gapped environments or meet specific data residency requirements remains a key factor for many companies, but the physical realization of such environments is increasingly under pressure.
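The TCO reasoning above can be sketched as a simple calculation. This is a minimal illustration, not AI-RADAR's framework: the `OnPremTco` class, its field names, and the idea of modeling opposition as a monthly delay cost plus a one-off permitting expense are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class OnPremTco:
    """Hypothetical TCO model for an on-premise deployment (all values in one currency)."""

    hardware_capex: float               # upfront hardware investment
    annual_energy_cost: float           # yearly energy bill
    annual_personnel_cost: float        # yearly staffing cost
    years: int                          # planning horizon
    delay_months: float = 0.0           # months lost to opposition or a moratorium
    monthly_delay_cost: float = 0.0     # carrying cost per month of delay
    extra_permitting_cost: float = 0.0  # one-off legal or permitting expenses

    def baseline(self) -> float:
        """Traditional TCO: hardware plus recurring energy and personnel costs."""
        recurring = self.annual_energy_cost + self.annual_personnel_cost
        return self.hardware_capex + self.years * recurring

    def opposition_overhead(self) -> float:
        """Additional cost attributed to delays and extra permitting work."""
        return self.delay_months * self.monthly_delay_cost + self.extra_permitting_cost

    def total(self) -> float:
        """Baseline TCO plus the overhead caused by public opposition."""
        return self.baseline() + self.opposition_overhead()


if __name__ == "__main__":
    # Purely illustrative numbers, not taken from any real project.
    plan = OnPremTco(
        hardware_capex=1000.0,
        annual_energy_cost=100.0,
        annual_personnel_cost=200.0,
        years=3,
        delay_months=6,
        monthly_delay_cost=10.0,
        extra_permitting_cost=40.0,
    )
    print(f"Baseline TCO: {plan.baseline():.0f}")
    print(f"Opposition overhead: {plan.opposition_overhead():.0f}")
    print(f"Total TCO: {plan.total():.0f}")
```

Keeping the opposition-related overhead as a separate term makes it easy to compare scenarios, for example a contested residential site against a remote industrial one, without touching the baseline figures.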

Future Prospects and the Search for Sustainable Solutions

The tension between the insatiable demand for AI compute capacity and resistance from local communities is likely to persist. Companies and policymakers will need to explore innovative solutions to mitigate the impact of data centers. This could include developing more efficient cooling technologies, integrating with renewable energy sources, selecting sites in industrial or remote areas, or adopting distributed architectures that reduce the concentration of infrastructure in a single location.

The discussion is no longer solely about technological efficiency or TCO but also about the "social license" to operate. Ensuring transparency, engaging communities, and demonstrating a concrete commitment to sustainability will become increasingly decisive factors for the success of AI-related infrastructure projects. The future of AI deployment will depend not only on the power of silicon but also on the ability to build consensus and harmonious coexistence with the environment and people.
