The 2nm Race: Automotive and Networking Drive Innovation

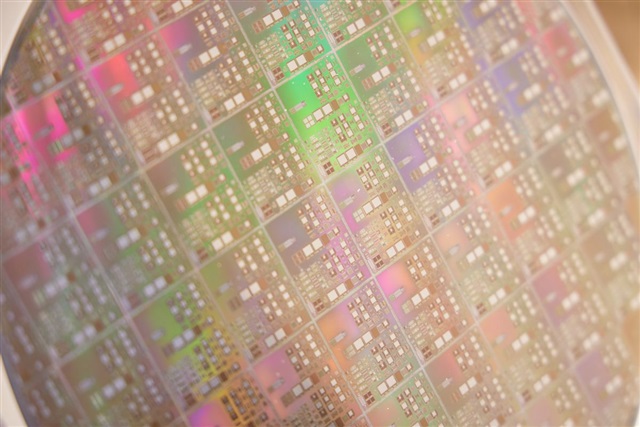

The semiconductor industry is constantly evolving, with a steady push towards smaller, higher-performing process nodes. One of the most significant recent trends is the acceleration of the automotive and networking sectors towards the 2-nanometer (nm) process node. This strategic move, which in many cases means leaping directly from much less advanced nodes, underscores how urgently these sectors need greater computing capability and energy efficiency.

The transition to 2nm promises smaller transistors, which translates into higher component density on a single chip, improved performance, and reduced power consumption. For automotive, this means enabling advanced driver-assistance systems (ADAS), autonomous driving functionalities, and increasingly sophisticated infotainment systems. In networking, 2nm chips are essential to support the exponential increase in data traffic, 5G networks, and next-generation data center infrastructures, where speed and efficiency are fundamental parameters.

The Impact of AI Demand on Global Manufacturing Capacity

Parallel to this innovative push, the industry is facing a growing challenge: the insatiable demand for advanced silicon from the artificial intelligence sector. The proliferation of Large Language Models (LLMs) and other AI applications has generated unprecedented demand for GPUs and specialized accelerators, which require the most cutting-edge process nodes to maximize training and inference performance.

This pressure results in globally limited manufacturing capacity at major foundries, such as TSMC and Samsung Foundry. The priority given to the production of high-performance AI chips is inevitably tightening the availability of advanced nodes for other sectors. This can lead to longer lead times and potentially higher costs for chips destined for automotive, networking, and other applications that require state-of-the-art technology.

Implications for On-Premise Deployment and the Supply Chain

For companies evaluating the deployment of AI workloads, particularly LLMs, in self-hosted or on-premise environments, semiconductor supply chain dynamics become critically important. The availability and cost of advanced silicon directly influence the Total Cost of Ownership (TCO) of AI infrastructures. The competition for 2nm and 3nm nodes is not just about the latest-generation GPUs, but also about processors, network chips, and other essential components for building a robust local stack.

Strategic planning is therefore fundamental. Hardware decisions, from choosing GPUs with sufficient VRAM to configuring the internal network to ensure high throughput, must take into account current and future market availability. For those evaluating on-premise deployment, there are complex trade-offs between adopting the latest technologies and ensuring a stable and predictable supply chain, often with an eye on data sovereignty and compliance.

Future Outlook and Mitigation Strategies

The trend towards ever-smaller process nodes is unstoppable, but the challenges related to manufacturing capacity and the supply chain will require innovative mitigation strategies. Companies may need to consider a mix of solutions, balancing the use of cutting-edge hardware with more mature but more readily available options, or exploring hybrid architectures that combine on-premise resources with cloud capacity to manage demand peaks.

Diversifying suppliers and investing in research and development to get more out of existing hardware will become increasingly relevant. Quantization techniques, for example, reduce VRAM requirements and make inference practical on less powerful hardware. The ability to navigate this complex scenario, characterized by rapid innovation and supply constraints, will be a key factor in the success of enterprise AI strategies in the coming years.
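To make the VRAM point concrete, here is a rough back-of-the-envelope sketch of how weight precision affects memory. The function name and the 7-billion-parameter model size are hypothetical, chosen only for illustration. The estimate covers the weights alone and ignores activations, the KV cache, and the small overhead of quantization metadata, so real requirements will be higher.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GiB.

    Assumption: every parameter is stored at the same bit width and
    there is no per-block metadata (scales, zero points).
    """
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for bits in (16, 8, 4):
        print(f"{bits}-bit weights: ~{weight_memory_gib(params, bits):.1f} GiB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts weight memory to a quarter, which is the kind of reduction that can bring a model within reach of older or more readily available GPUs.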
