AI Chip Demand Puts the Supply Chain Under Pressure

The artificial intelligence sector is going through an unprecedented phase of expansion, driven by the rapid adoption of Large Language Models (LLMs) and other generative AI applications. This growth has triggered massive demand for specialized hardware, particularly AI accelerators such as GPUs, which are essential for both training and inference on these complex models. The race to innovate and deploy AI solutions is, however, exposing weak points in the global supply chain.

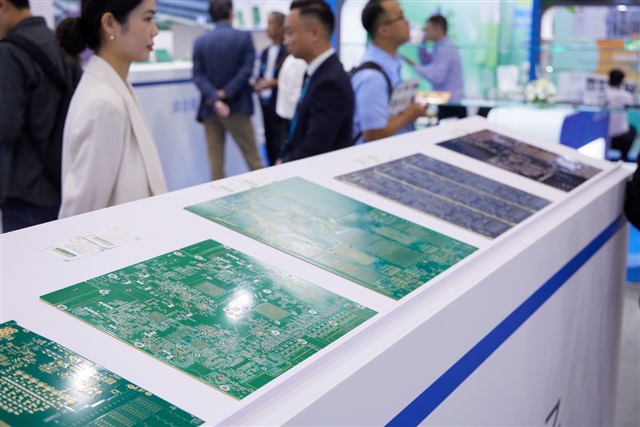

One of the first warning signs of this strain is the report that ABF (Ajinomoto Build-up Film) substrates, critical components for advanced chip packaging, are currently sold out at the main suppliers. Companies such as Unimicron, Kinsus, and Nan Ya PCB, key players in the production of these materials, are facing demand that far exceeds their production capacity, which points to a potential bottleneck for the entire AI chip industry.

The Crucial Role of ABF Substrates in AI Hardware

ABF substrates are composite materials essential for building high-density packages, and they are used in the most advanced processors, including those designed for AI workloads. These substrates act as the interface between the chip die and the motherboard, carrying high-speed electrical interconnections and helping with heat dissipation. Their ability to support a high circuit density and to handle growing power requirements is fundamental to the performance of modern GPUs and AI-dedicated ASICs.

Without ABF substrates of adequate quality and in sufficient quantity, production of high-performance AI chips slows down. This directly affects manufacturers' ability to meet demand for high-specification accelerators, such as those with large amounts of VRAM and high throughput, which are indispensable for running complex LLMs efficiently. Advanced packaging, of which ABF substrates are a key component, is what makes it possible to integrate tens or even hundreds of billions of transistors in a single package while preserving signal integrity and thermal management.

Implications for On-Premise Deployment and the Supply Chain

The ABF substrate shortage has direct repercussions for organizations that are planning or expanding on-premise AI deployments. CTOs, DevOps leads, and infrastructure architects face longer lead times when acquiring AI hardware, which delays projects and raises the Total Cost of Ownership (TCO). Dependence on a global supply chain with choke points can also undermine the ability to maintain data sovereignty and to guarantee air-gapped environments, both of which are crucial in many sectors.
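To make the link between lead times and TCO concrete, here is a minimal sketch of how a delay and a scarcity price premium might be folded into a simple cost estimate. The cost model, the `ProcurementScenario` fields, and every figure below are hypothetical assumptions chosen for illustration; none of them come from the article or from any vendor.

```python
from dataclasses import dataclass


@dataclass
class ProcurementScenario:
    """Hypothetical inputs; none of these figures come from the article."""
    hardware_cost: float         # list price of the accelerators
    price_premium: float         # fractional price increase due to scarcity (0.10 = 10%)
    delay_months: float          # extra lead time beyond the original plan
    interim_monthly_cost: float  # stopgap capacity (e.g. rented compute) per month of delay
    monthly_opex: float          # power, cooling, staff once the hardware is running
    service_months: int          # planned service life after delivery


def total_cost_of_ownership(s: ProcurementScenario) -> float:
    """Sum purchase, stopgap, and operating costs for one scenario."""
    purchase = s.hardware_cost * (1 + s.price_premium)
    stopgap = s.delay_months * s.interim_monthly_cost
    operations = s.service_months * s.monthly_opex
    return purchase + stopgap + operations


# Assumed scenarios: same hardware, with and without supply constraints.
baseline = ProcurementScenario(1_000_000, 0.0, 0, 40_000, 10_000, 36)
constrained = ProcurementScenario(1_000_000, 0.10, 6, 40_000, 10_000, 36)

baseline_tco = total_cost_of_ownership(baseline)
constrained_tco = total_cost_of_ownership(constrained)

print(f"baseline TCO:    {baseline_tco:,.0f}")
print(f"constrained TCO: {constrained_tco:,.0f}")
print(f"increase:        {constrained_tco / baseline_tco - 1:.1%}")
```

A model this simple ignores depreciation, financing, and the opportunity cost of delayed projects, so it is better suited to comparing scenarios against each other than to producing an absolute budget figure.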

For anyone evaluating on-premise deployments, supply chain resilience becomes a critical factor. Companies may need to consider diversifying their suppliers or exploring alternative hardware, even at the price of trade-offs in performance or cost. Long-term strategic planning that accounts for these market dynamics is essential to mitigate risk and keep local AI pipelines running. AI-RADAR offers analytical frameworks at /llm-onpremise to evaluate these trade-offs and support informed decisions.

Future Outlook and Mitigation Strategies

The current situation highlights the complexity and interdependence of the AI hardware ecosystem. In the short term, companies will have to navigate a market with limited availability and potentially higher prices. In the long term, significant investment is likely, both to expand ABF substrate production capacity and to research alternative materials or new packaging techniques.

Chip makers may also explore vertical integration or strategic partnerships to secure their supply of critical components. For companies implementing AI solutions, understanding these market dynamics is a matter not only of cost but also of agility and capacity to innovate. The ability to adapt to a volatile supply chain will be a determining factor for success in the AI landscape, especially for those betting on self-hosted infrastructure and granular control over their own AI workloads.
