The AI Server Push on Physical Infrastructure

Demand for dedicated artificial intelligence servers is surging, and the effect is visible in the hardware supply chain. According to DIGITIMES, this growing demand is driving significant revenue growth for companies that produce "rail kits," the mounting systems that hold servers in racks. This seemingly niche indicator points to a broader, more strategic trend in the current technology landscape.

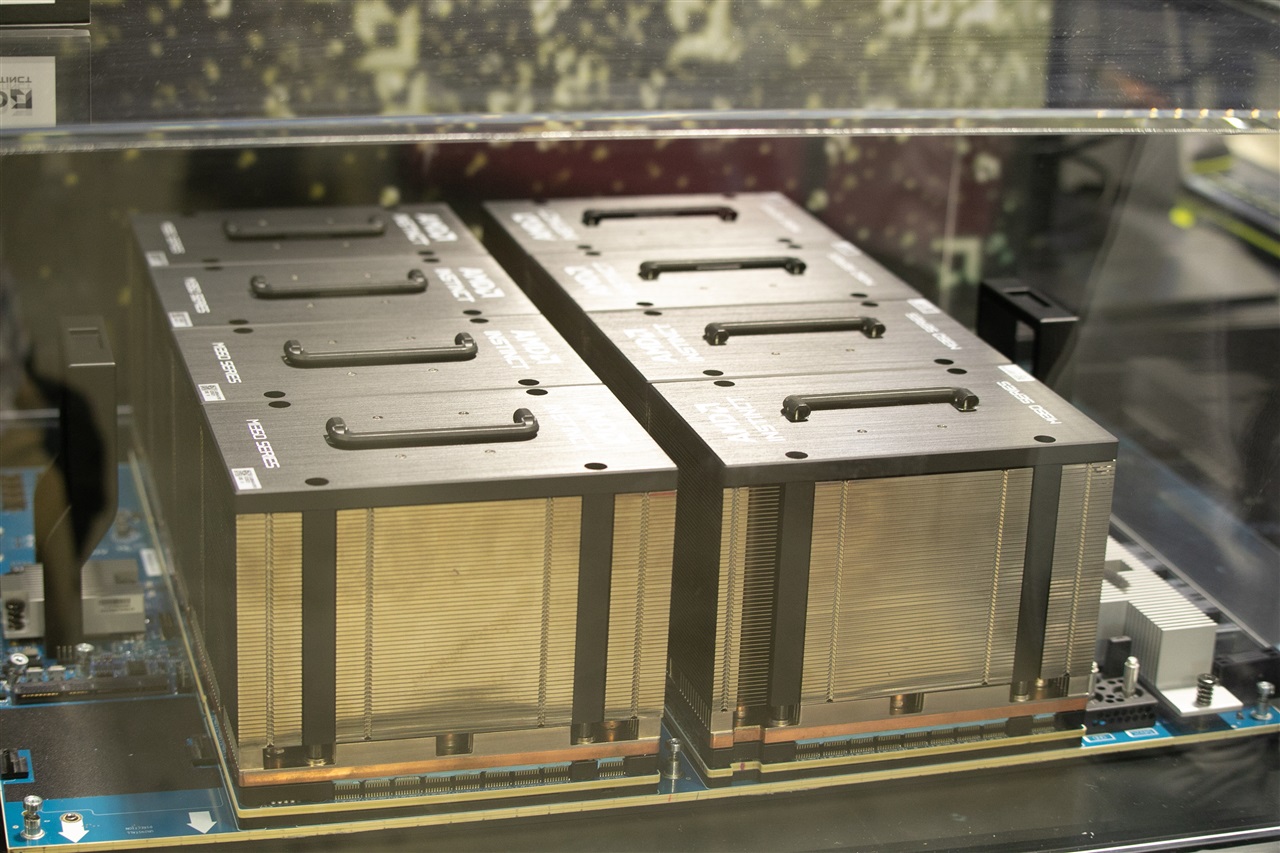

The widespread adoption of Large Language Models (LLMs) and other generative AI applications is pushing organizations to strengthen their computational capabilities. It is no longer just about processing data, but about running complex models that require large amounts of VRAM and processing power, often delivered by data-center GPUs such as NVIDIA's A100 or H100. The need to physically host these high-performance machines is therefore redirecting investment toward tangible hardware infrastructure.
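To give a rough sense of scale, the sketch below estimates how much VRAM a model's weights occupy and how many GPUs that implies. It is a back-of-the-envelope illustration, not a sizing tool: the function names are hypothetical, the 80 GB default matches the larger A100 and H100 memory configurations, and the 1.2 overhead factor for activations and caches is an assumption that varies widely by workload.

```python
import math

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gb(params_billion: float, dtype: str) -> float:
    """Memory for the weights alone, in decimal GB.

    One billion parameters at one byte each is one GB, so the result is
    simply (billions of parameters) x (bytes per parameter).
    """
    return params_billion * BYTES_PER_PARAM[dtype]


def min_gpus(params_billion: float, dtype: str,
             gpu_vram_gb: float = 80.0, overhead: float = 1.2) -> int:
    """Minimum GPU count to hold the weights plus an assumed overhead.

    `overhead` is a hypothetical multiplier for activations, caches and
    runtime buffers; real requirements depend on batch size and context.
    """
    needed_gb = weights_gb(params_billion, dtype) * overhead
    return math.ceil(needed_gb / gpu_vram_gb)


if __name__ == "__main__":
    for size, dtype in [(7, "fp16"), (70, "fp16"), (70, "int8")]:
        print(f"{size}B {dtype}: {weights_gb(size, dtype):.0f} GB weights, "
              f"at least {min_gpus(size, dtype)} x 80 GB GPU(s)")
```

Under these assumptions, a 70-billion-parameter model in 16-bit precision does not fit on a single 80 GB card, which is one reason such workloads translate into multi-GPU servers and, ultimately, more rack hardware.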

The Crucial Role of Physical Components

Rail kits are essential components for installing and managing servers in data centers. They allow server units to be mounted securely and in an orderly way in standard racks, which simplifies maintenance, cooling, and space optimization. Rising demand for them is not a minor detail but a clear signal that companies are actively expanding their computing capacity by acquiring and deploying new physical servers.

This trend suggests that, despite the ubiquity of cloud computing, a significant portion of AI investments is focusing on self-hosted or on-premise solutions. The reasons behind this choice are manifold and often interconnected, ranging from data sovereignty to the need for more granular control over the hardware and software environment. The ability to directly manage infrastructure becomes a critical factor for particularly demanding AI workloads.

On-Premise vs. Cloud: Drivers for LLM Deployment Choices

The decision to build on-premise AI infrastructure, rather than rely exclusively on cloud services, is driven by several strategic factors. Data sovereignty is often a primary concern, especially in regulated sectors such as finance or healthcare, where rules dictate where sensitive information is stored and how it is protected. An air-gapped environment or a private data center gives organizations direct control over both.

Furthermore, the Total Cost of Ownership (TCO) can play a decisive role. Although the initial investment (CapEx) for on-premise hardware is high, long-term operational costs for intensive AI workloads can be lower compared to cloud-based OpEx models, especially when it comes to large-scale training or inference. The ability to optimize GPU utilization, cooling management, and energy efficiency contributes to making on-premise an economically advantageous choice for certain applications. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between different options.
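The CapEx versus OpEx trade-off can be made concrete with a simple break-even calculation. The sketch below is a minimal illustration, assuming a flat cloud hourly rate and constant monthly on-premise running costs; every figure in it is hypothetical and chosen only to show the arithmetic, and the names (`OnPremCost`, `break_even_month`) are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OnPremCost:
    capex: float         # upfront hardware purchase (hypothetical figure)
    monthly_opex: float  # power, cooling, staff, maintenance per month


def on_prem_total(cost: OnPremCost, months: int) -> float:
    """Cumulative on-premise spend after `months` months."""
    return cost.capex + cost.monthly_opex * months


def cloud_total(hourly_rate: float, hours_per_month: float, months: int) -> float:
    """Cumulative cloud spend for the same usage, billed per hour."""
    return hourly_rate * hours_per_month * months


def break_even_month(cost: OnPremCost, hourly_rate: float,
                     hours_per_month: float,
                     horizon_months: int = 120) -> Optional[int]:
    """First month in which on-premise is no more expensive than cloud.

    Returns None if that never happens within the horizon.
    """
    for month in range(1, horizon_months + 1):
        if on_prem_total(cost, month) <= cloud_total(
                hourly_rate, hours_per_month, month):
            return month
    return None


if __name__ == "__main__":
    server = OnPremCost(capex=300_000, monthly_opex=5_000)  # hypothetical
    # Heavy use: 600 GPU-server hours per month at a hypothetical $30/hour.
    print(break_even_month(server, hourly_rate=30.0, hours_per_month=600))
    # Light use: 100 hours per month; cloud stays cheaper over the horizon.
    print(break_even_month(server, hourly_rate=30.0, hours_per_month=100))
```

With these illustrative inputs, the heavily used server pays for itself in month 24, while the lightly used one never does. That sensitivity to utilization is why on-premise tends to make economic sense only for sustained, intensive workloads.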

Future Outlook and Strategic Considerations

The current growth in the AI server component market underscores a phase of maturation in enterprise-level AI adoption. Organizations are no longer just experimenting; they are building robust, dedicated infrastructures to support their LLM development and deployment pipelines. This requires in-depth strategic planning, taking into account not only hardware specifications, such as VRAM capacity or GPU throughput, but also the resilience, scalability, and security of the entire infrastructure.

For CTOs, DevOps leads, and infrastructure architects, understanding these dynamics is fundamental. The choice between a self-hosted approach and a cloud-based solution is not trivial and depends on a careful evaluation of specific workload requirements, budget constraints, and compliance needs. The "rail kit" market serves as a barometer indicating how the industry is responding to these challenges, increasingly focusing on concrete and controllable infrastructure solutions.
