AI as the engine of the memory market

Memory suppliers reached a significant milestone, posting record revenue in the first quarter of 2026. According to DIGITIMES, the result is largely attributable to relentless demand from the artificial intelligence segment. Notably, the growth defied the seasonal slowdowns that often characterize the semiconductor market, which points to a robust and durable underlying trend.

This dynamic underscores that AI is no longer an emerging niche but an established economic engine, capable of profoundly influencing global supply chains. Rising demand for high-performance memory reflects the expansion and maturation of AI applications, which need increasingly sophisticated hardware to support complex workloads, from training to inference of Large Language Models (LLMs).

The memory requirements of Large Language Models

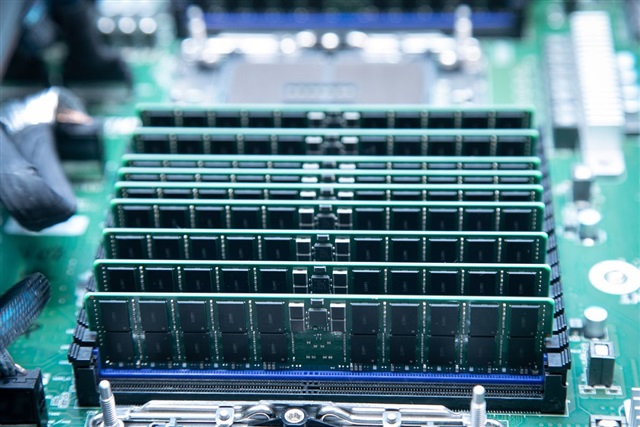

Large Language Models in particular are known for their heavy memory appetite. Both during training, when massive datasets are processed, and during inference, when the model generates responses, the amount of VRAM (Video Random Access Memory) available on the GPUs is a critical factor. Ever larger models, with extended context windows and complex architectures, require growing amounts of high-bandwidth memory, such as High Bandwidth Memory (HBM) or the most recent generations of GDDR, to run efficiently and with low latency.

The choice of memory directly affects the performance and scalability of AI infrastructure. For example, whether a GPU can host an LLM of a given size, or handle a large batch size, depends directly on its available VRAM. This makes memory not just a component but a strategic bottleneck for companies that aim to develop or deploy advanced AI solutions, especially where data sovereignty and control over the infrastructure are priorities.
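The dependence on VRAM described above can be made concrete with a back-of-the-envelope estimate. The sketch below is only an approximation under stated assumptions: a decoder-only transformer, 2 bytes per value (FP16/BF16) for both weights and key/value cache, and a flat 10% overhead for activations and runtime buffers. The `ModelSpec` fields, the function names, and the example figures are hypothetical and do not describe any specific model or GPU.

```python
from dataclasses import dataclass

GIB = 1024 ** 3  # bytes in one gibibyte


@dataclass
class ModelSpec:
    """Hypothetical decoder-only transformer; every field is illustrative."""
    n_params: int    # total parameter count
    n_layers: int    # number of transformer layers
    n_kv_heads: int  # key/value heads per layer
    head_dim: int    # dimension of each attention head


def weights_bytes(spec: ModelSpec, bytes_per_param: int = 2) -> int:
    """Memory for the weights alone (2 bytes per parameter assumes FP16/BF16)."""
    return spec.n_params * bytes_per_param


def kv_cache_bytes(spec: ModelSpec, context_len: int, batch_size: int,
                   bytes_per_value: int = 2) -> int:
    """Key/value cache: one K and one V vector per layer, per token, per sequence."""
    per_token = 2 * spec.n_layers * spec.n_kv_heads * spec.head_dim * bytes_per_value
    return per_token * context_len * batch_size


def fits_in_vram(spec: ModelSpec, vram_gib: float, context_len: int,
                 batch_size: int, overhead_fraction: float = 0.10) -> bool:
    """Rough check: weights + KV cache, padded by an assumed overhead fraction."""
    needed = weights_bytes(spec) + kv_cache_bytes(spec, context_len, batch_size)
    return needed * (1 + overhead_fraction) <= vram_gib * GIB


if __name__ == "__main__":
    # Made-up 7-billion-parameter model and a made-up 24 GiB card.
    spec = ModelSpec(n_params=7_000_000_000, n_layers=32, n_kv_heads=32, head_dim=128)
    for batch in (1, 8):
        total_gib = (weights_bytes(spec) + kv_cache_bytes(spec, 4096, batch)) / GIB
        print(f"batch={batch}: ~{total_gib:.1f} GiB before overhead, "
              f"fits in 24 GiB: {fits_in_vram(spec, 24, 4096, batch)}")
```

With these made-up numbers the cache costs 0.5 MiB per token, so a 4,096-token context adds 2 GiB per sequence. The hypothetical model fits on the 24 GiB card at batch size 1 but not at batch size 8, which illustrates why batch size and context length, not just parameter count, drive memory demand.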

Implications for on-premise deployments and TCO

For organizations evaluating an on-premise or self-hosted deployment for their AI workloads, strong memory demand has direct implications. Higher supplier revenue could translate into a more stable supply chain in the long term, but also into potential swings in price and availability in the short and medium term. This directly affects the Total Cost of Ownership (TCO) of AI infrastructure and shapes CapEx and OpEx decisions.
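To illustrate how a memory price swing can propagate into TCO, here is a deliberately simplified sketch. It assumes TCO is just upfront CapEx plus a constant annual OpEx over the planning horizon, with no discounting, and that only a fixed share of CapEx is memory-related. The function names, the 30% memory share, and all monetary figures are hypothetical placeholders, not data from the article.

```python
def total_cost_of_ownership(capex: float, annual_opex: float, years: int) -> float:
    """Simplified TCO: upfront CapEx plus constant yearly OpEx, no discounting."""
    return capex + annual_opex * years


def capex_with_memory_shift(base_capex: float, memory_share: float,
                            price_change: float) -> float:
    """Scale only the memory-related share of CapEx.

    memory_share: fraction of CapEx tied to memory (assumed, e.g. 0.3).
    price_change: relative memory price change (0.2 means +20%).
    """
    return base_capex * (1 + memory_share * price_change)


if __name__ == "__main__":
    base_capex = 100_000.0   # hypothetical server purchase cost
    annual_opex = 20_000.0   # hypothetical power, cooling, maintenance
    years = 3

    baseline = total_cost_of_ownership(base_capex, annual_opex, years)
    shifted_capex = capex_with_memory_shift(base_capex, memory_share=0.3, price_change=0.2)
    shifted = total_cost_of_ownership(shifted_capex, annual_opex, years)

    print(f"Baseline TCO: {baseline:,.0f}")
    print(f"TCO with +20% memory prices: {shifted:,.0f}")
    print(f"Relative increase: {(shifted / baseline - 1):.1%}")
```

A real evaluation would add depreciation, financing, utilization, and energy prices; the point here is only that a price change on one component scales with that component's share of the total.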

The need to guarantee data sovereignty and regulatory compliance pushes many companies toward on-premise or air-gapped solutions, where control over hardware and data is greatest. In these scenarios, procuring GPUs with sufficient VRAM and high-performance memory modules becomes a strategic priority, and weighing the trade-offs between performance, cost, and availability is essential. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks at /llm-onpremise for assessing these trade-offs in a structured way, taking into account factors such as memory density per server and energy efficiency.

A look at the future of the AI market

The growth trajectory of the AI-driven memory market suggests that this trend is not short-lived. Continued innovation in Large Language Models and their adoption across a widening range of sectors are expected to sustain demand for advanced hardware components. This scenario requires companies to plan their AI infrastructure strategically for the long term, considering not only compute capacity but also, and above all, memory requirements.

Access to high-quality memory in sufficient quantity will be a differentiating factor for organizations that intend to keep a competitive edge in the age of artificial intelligence. The memory market is therefore not only an indicator of the health of the tech sector but also a crucial barometer of how AI capabilities evolve and expand worldwide.
