AI Demand Fuels Taiwan's Semiconductor Supply Chain

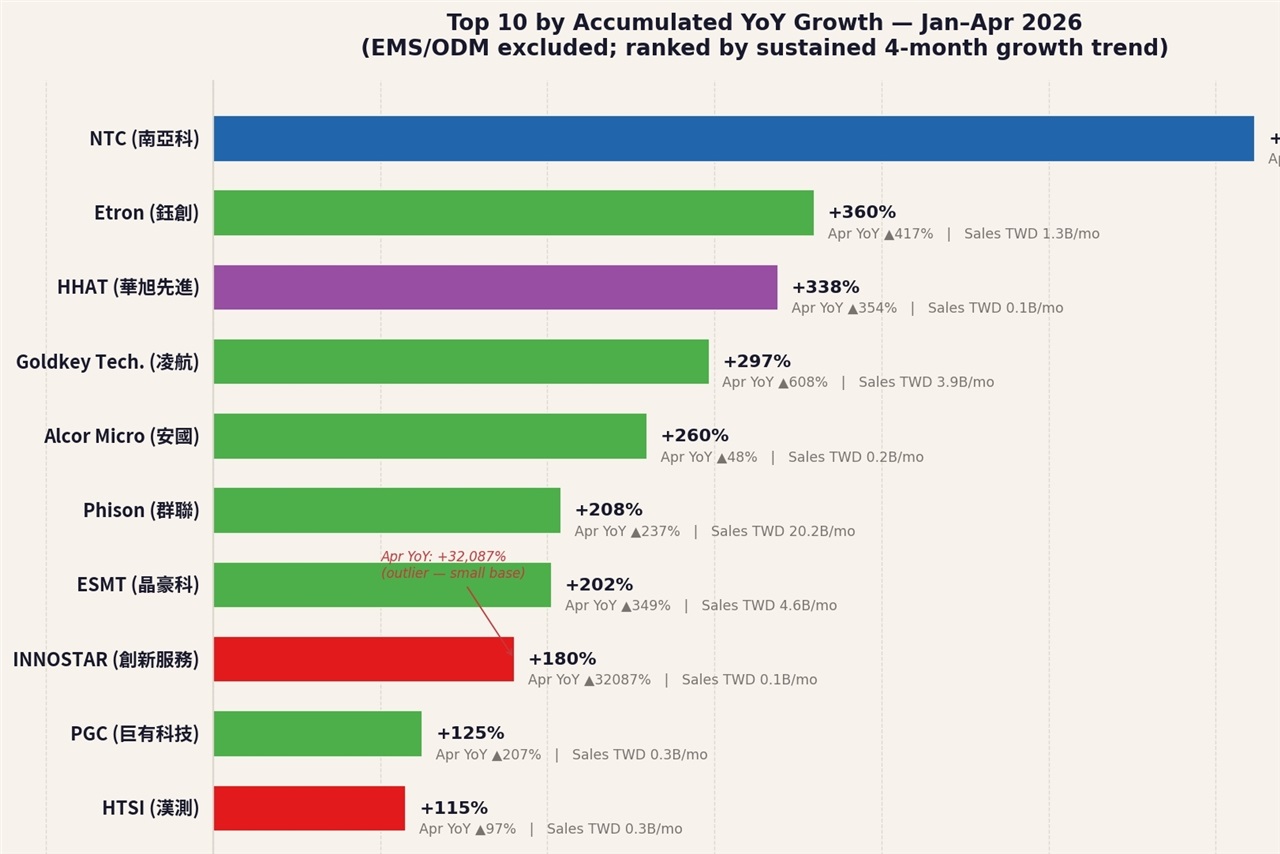

Taiwan's semiconductor supply chain grew in April, with broadly positive results reflecting clear demand from the artificial intelligence sector. The trend is visible across the entire ecosystem, from foundries to component manufacturers, and signals accelerating global adoption and development of AI solutions.

This visibility into AI demand is a useful indicator for companies planning infrastructure investments. Processing growing volumes of data and running complex Large Language Models (LLMs) requires increasingly powerful and specialized hardware, which lays the groundwork for a significant investment cycle in the near term.

The Crucial Role of AI Hardware and Its Implications

Rising AI demand translates directly into a greater need for high-performance silicon, particularly Graphics Processing Units (GPUs) and accelerators dedicated to model inference and training. Specifications such as VRAM capacity and memory throughput become differentiating factors for the performance of AI systems. Organizations developing and deploying LLMs and other AI workloads must weigh these specifications carefully to ensure their infrastructure can support their computing needs.

Hardware choice directly influences a system's ability to handle model complexity, batch size, and response latency. For example, the availability of GPUs with ample VRAM is essential for loading large models or managing extended context windows, which are critical elements for the effectiveness of LLMs in enterprise contexts.
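To make the link between VRAM, model size, and context window concrete, the following is a minimal sketch of a back-of-the-envelope memory estimate. It assumes a standard transformer decoder in which memory is dominated by the model weights plus the key-value (KV) cache, and it ignores activations and framework overhead. The function names and the example model shape (7 billion parameters, 32 layers, 32 KV heads, head dimension 128) are illustrative assumptions, not figures from any vendor or from this article.

```python
GIB = 2**30  # bytes per gibibyte


def weights_bytes(n_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the model weights."""
    return n_params * bytes_per_param


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, batch_size: int,
                   bytes_per_value: int) -> int:
    """Memory for the KV cache: one key and one value tensor per layer."""
    return (2 * n_layers * n_kv_heads * head_dim
            * context_len * batch_size * bytes_per_value)


def estimate_vram_gib(n_params: float, n_layers: int, n_kv_heads: int,
                      head_dim: int, context_len: int, batch_size: int,
                      bytes_per_param: int = 2,
                      bytes_per_value: int = 2) -> float:
    """Rough lower bound on VRAM in GiB (weights + KV cache only)."""
    total = (weights_bytes(n_params, bytes_per_param)
             + kv_cache_bytes(n_layers, n_kv_heads, head_dim,
                              context_len, batch_size, bytes_per_value))
    return total / GIB


if __name__ == "__main__":
    # Hypothetical 7B-parameter model in 16-bit precision.
    for ctx in (4096, 32768):
        need = estimate_vram_gib(7e9, 32, 32, 128, ctx, batch_size=1)
        print(f"context {ctx:>6}: about {need:.1f} GiB")
```

Under these assumptions, the KV cache grows linearly with both context length and batch size, which is why extended context windows can push a model that fits comfortably at short contexts beyond the capacity of a single GPU.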

Implications for On-Premise Deployment and TCO

For CTOs, DevOps leads, and infrastructure architects evaluating deployment strategies, the strong AI demand in the semiconductor market has direct implications. The availability and cost of specialized hardware are key factors in deciding between self-hosted solutions and cloud services. A robust semiconductor market can offer greater supply stability but also price pressures, influencing the Total Cost of Ownership (TCO) of an on-premise AI infrastructure.

On-premise deployment offers significant advantages in terms of data sovereignty, compliance, and the ability to operate in air-gapped environments, but it requires a higher initial investment (CapEx) and direct hardware management. The ability to acquire and maintain the necessary AI hardware thus becomes a strategic element. Evaluating on-premise deployments involves complex trade-offs among performance, cost, and control, which AI-RADAR explores with analytical frameworks on /llm-onpremise.
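The trade-off between upfront CapEx and recurring cloud spend can be illustrated with a simple break-even calculation. The sketch below is a deliberately simplified model: it assumes a fixed hardware purchase price, a flat monthly operating cost for the on-premise system (power, cooling, staff), and a flat hourly cloud rate for equivalent capacity. All names and figures are hypothetical placeholders, not market data, and the model ignores depreciation, financing, and hardware refresh cycles.

```python
from typing import Optional


def cloud_monthly_cost(hourly_rate: float, hours_per_month: float) -> float:
    """Recurring cloud cost for the given usage."""
    return hourly_rate * hours_per_month


def break_even_months(capex: float, onprem_monthly_opex: float,
                      cloud_hourly_rate: float,
                      hours_per_month: float) -> Optional[float]:
    """Months until cumulative on-premise cost drops below cloud cost.

    Returns None when cloud is not more expensive per month, in which
    case the upfront investment is never recovered under this model.
    """
    monthly_saving = (cloud_monthly_cost(cloud_hourly_rate, hours_per_month)
                      - onprem_monthly_opex)
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


if __name__ == "__main__":
    # Hypothetical inputs: 120,000 hardware purchase, 2,000/month to run it,
    # versus a cloud instance at 10/hour used 700 hours per month.
    months = break_even_months(120_000, 2_000, 10.0, 700)
    print(f"break-even after {months:.1f} months")
```

The result is sensitive to utilization: at low `hours_per_month` the monthly saving shrinks or turns negative, which is one reason sustained, predictable workloads tend to favor self-hosted hardware in this kind of estimate.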

Future Outlook and Market Challenges

The clear visibility of AI demand in the Taiwanese semiconductor sector suggests that the growth trend is set to continue. However, the global supply chain remains subject to complex dynamics, including geopolitical challenges and the need for continuous innovation to meet the evolving requirements of artificial intelligence.

Companies will need to continue to closely monitor semiconductor market trends to effectively plan their infrastructure strategies. The ability to rapidly adapt to changes in hardware availability and specifications will be a critical factor in maintaining a competitive edge in the AI era.
