AI Demand Pressures the Technology Supply Chain

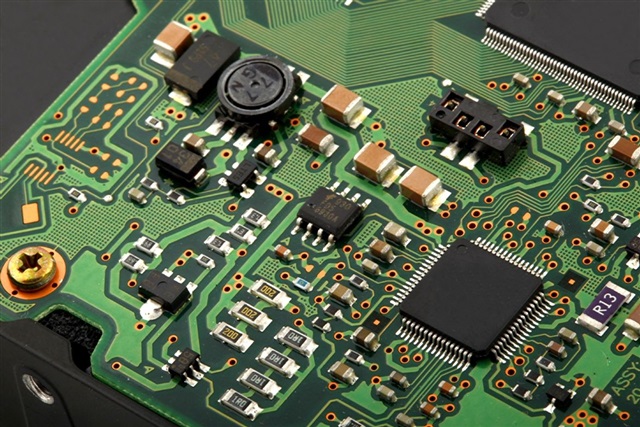

The rapid expansion of artificial intelligence, particularly Large Language Models (LLMs), is generating unprecedented demand for specialized hardware components and services. This surge is putting pressure on the entire technology supply chain, raising production costs and making pricing harder for suppliers to manage. Companies in the sector must now balance steadily growing demand against rising operational and procurement costs.

This creates a complex environment for technology decision-makers. The availability of advanced silicon, High Bandwidth Memory (HBM), and latest-generation GPUs, all essential for AI model training and inference, has become a critical factor. Current market dynamics reflect a clear tension between accelerated AI innovation and the supply chain's capacity to keep pace without driving up final costs.

Impact on Key AI Components

The rise in costs is most evident in the segments crucial for AI development and deployment. GPUs are at the heart of this dynamic: their manufacturing complexity, combined with massive demand for LLM training and inference workloads, has driven up prices and lengthened delivery times. High-capacity VRAM (e.g., 80GB per GPU) and high-speed interconnects (like NVLink) have become both bottlenecks and significant cost drivers.

Beyond the chips themselves, advanced packaging processes and global logistics also contribute to this increase. The scarcity of specific raw materials and the complexity of global supply chains add further layers of cost and uncertainty. As a result, planning investments in AI infrastructure requires in-depth analysis of total cost of ownership (TCO) and of medium-to-long-term market projections.

Implications for On-Premise Deployment

For organizations considering on-premise deployment of AI solutions, rising costs in the technology supply chain have direct implications. A self-hosted approach offers advantages in data sovereignty, control, and compliance (especially for air-gapped environments), but the upfront hardware investment (CapEx) can be significantly higher and more volatile. TCO planning for an on-premise AI infrastructure requires accurate estimates not only of acquisition costs but also of power, cooling, and maintenance costs over time.
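The cost categories named above (acquisition, power, cooling, maintenance) can be combined into a rough TCO estimate. The following is a minimal sketch, not a definitive model: the class and field names are hypothetical, cooling is approximated through a PUE (power usage effectiveness) multiplier, maintenance is assumed to be a fixed fraction of CapEx per year, and every figure in the usage note is illustrative rather than taken from market data.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class OnPremCluster:
    """Hypothetical inputs for a rough on-premise TCO estimate."""
    gpu_count: int
    gpu_unit_price: float           # acquisition cost per GPU
    other_capex: float              # servers, networking, racks
    gpu_power_kw: float             # average draw per GPU, in kW
    pue: float                      # power usage effectiveness (assumed proxy for cooling)
    electricity_price_kwh: float    # price per kWh
    annual_maintenance_rate: float  # assumed fraction of CapEx spent per year


def capex(c: OnPremCluster) -> float:
    """Upfront acquisition cost."""
    return c.gpu_count * c.gpu_unit_price + c.other_capex


def annual_opex(c: OnPremCluster) -> float:
    """Yearly energy (including cooling overhead via PUE) plus maintenance."""
    energy_kwh = c.gpu_count * c.gpu_power_kw * c.pue * HOURS_PER_YEAR
    energy_cost = energy_kwh * c.electricity_price_kwh
    maintenance_cost = capex(c) * c.annual_maintenance_rate
    return energy_cost + maintenance_cost


def tco(c: OnPremCluster, years: int) -> float:
    """Total cost of ownership over the given number of years."""
    return capex(c) + years * annual_opex(c)


if __name__ == "__main__":
    # Illustrative numbers only.
    cluster = OnPremCluster(
        gpu_count=8,
        gpu_unit_price=30_000.0,
        other_capex=60_000.0,
        gpu_power_kw=0.7,
        pue=1.5,
        electricity_price_kwh=0.15,
        annual_maintenance_rate=0.05,
    )
    print(f"CapEx: {capex(cluster):,.2f}")
    print(f"Annual OpEx: {annual_opex(cluster):,.2f}")
    print(f"3-year TCO: {tco(cluster, 3):,.2f}")
```

Because GPU prices are the volatile input, rerunning the estimate with a range of `gpu_unit_price` values gives a quick sense of how sensitive the total is to supply chain conditions.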

The choice between an on-premise infrastructure and cloud-based solutions thus becomes an even more complex strategic decision. While the cloud can offer flexibility and an OpEx model, data control and hardware customization for specific workloads remain strengths of self-hosting. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between initial and operational costs and the long-term benefits in terms of control and security.
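One simple way to frame the CapEx versus OpEx trade-off is a break-even horizon: the number of months after which cumulative on-premise cost falls to or below cumulative cloud cost. The sketch below assumes flat monthly costs on both sides and ignores hardware depreciation, resale value, and price changes; the function name and parameters are hypothetical.

```python
import math
from typing import Optional


def break_even_months(
    capex: float,
    onprem_monthly_opex: float,
    cloud_monthly_cost: float,
) -> Optional[int]:
    """First whole month at which cumulative on-premise cost is no
    higher than cumulative cloud cost, or None if that never happens.

    Assumes constant monthly costs (a simplification).
    """
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        # On-premise running costs match or exceed the cloud bill,
        # so the upfront investment is never recovered.
        return None
    return math.ceil(capex / monthly_saving)


if __name__ == "__main__":
    # Illustrative numbers only.
    print(break_even_months(300_000.0, 2_500.0, 15_000.0))
```

A break-even horizon longer than the expected useful life of the hardware would point toward the cloud on cost alone, though data control and compliance requirements may still favor self-hosting.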

Outlook and Future Strategies

Facing these challenges, companies are exploring several strategies to mitigate the impact of rising costs. Viable paths include making better use of existing hardware, adopting quantization techniques to reduce VRAM requirements, and exploring more efficient model architectures. Diversifying suppliers and building more resilient supply chain relationships can also help stabilize costs and ensure component availability.
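To illustrate why quantization matters for hardware planning, the sketch below estimates the memory needed for model weights at different bit widths and the resulting number of GPUs. It is a back-of-the-envelope calculation under stated assumptions: the 80GB default mirrors the per-GPU figure mentioned earlier, the 1.2 overhead factor for KV cache and activations is an assumed placeholder, and the function names are hypothetical. Real requirements depend on context length, batch size, and the serving stack.

```python
import math


def weight_memory_gb(num_params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = num_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def gpus_needed(
    num_params_billion: float,
    bits_per_weight: int,
    vram_gb: float = 80.0,   # per-GPU VRAM, matching the 80GB example
    overhead: float = 1.2,   # assumed allowance for KV cache and activations
) -> int:
    """Minimum GPU count whose combined VRAM fits weights plus overhead."""
    required_gb = weight_memory_gb(num_params_billion, bits_per_weight) * overhead
    return math.ceil(required_gb / vram_gb)


if __name__ == "__main__":
    # A hypothetical 70-billion-parameter model at three precisions.
    for bits in (16, 8, 4):
        mem = weight_memory_gb(70, bits)
        print(f"{bits}-bit: {mem:.0f} GB weights, {gpus_needed(70, bits)} GPU(s)")
```

Under these assumptions, dropping from 16-bit to 4-bit weights cuts weight memory by a factor of four, which can translate directly into fewer GPUs to purchase.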

The AI market is constantly evolving, and the dynamics of its supply chain evolve with it. The ability to adapt quickly to these changes while keeping costs and technology choices sustainable will be crucial for companies aiming to fully leverage Large Language Models and other artificial intelligence applications. Close monitoring of market trends and proactive infrastructure planning will be key to navigating this landscape.
