Nvidia's Warning on DeepSeek's Hardware Choice

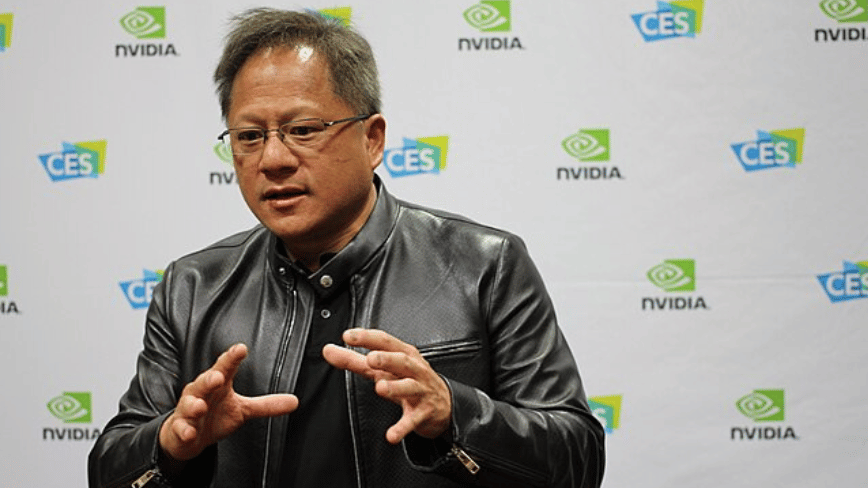

Jensen Huang, CEO of Nvidia, recently raised an important issue regarding the dynamics of the global artificial intelligence market. During an appearance on the Dwarkesh Podcast, Huang expressed clear concern over the Chinese lab DeepSeek's decision to optimize its Large Language Models (LLMs) for Huawei's Ascend chips, rather than for American-made hardware. This choice, according to the Nvidia CEO, would represent a "horrible outcome" for the United States, underscoring the profound strategic implications that AI infrastructure decisions entail.

DeepSeek, an emerging player in the artificial intelligence landscape, is preparing to release its V4 foundation model. The news that this model will be based on Huawei's Ascend 950PR processor marks a significant turning point. This migration from Nvidia's traditionally dominant hardware to alternative solutions highlights a growing diversification in the sector and raises questions about the future architecture of LLM deployments globally.

Technical and Strategic Implications of Silicon Choice

Optimizing an LLM like DeepSeek's V4 for a specific hardware architecture, such as Huawei's Ascend 950PR processor, is not trivial. It requires a considerable investment in engineering and software development to ensure the model fully leverages the capabilities of the underlying silicon. This includes tuning for available on-device memory (VRAM), compute units, memory bandwidth, and the specific AI acceleration features offered by the chip. Effective optimization directly influences throughput and latency and, ultimately, the Total Cost of Ownership (TCO) of a large-scale deployment.
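To make the link between throughput and TCO concrete, the following is a minimal sketch of a cost-per-million-tokens estimate. The `Deployment` fields, the linear amortization model, and every figure in the usage example are illustrative assumptions, not measurements of any Nvidia or Huawei product.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class Deployment:
    """Hypothetical description of an inference cluster (all fields are assumptions)."""
    name: str
    capex_usd: float          # upfront hardware cost
    lifetime_years: float     # period over which the hardware is amortized
    opex_usd_per_year: float  # power, cooling, staff, support contracts
    tokens_per_second: float  # sustained aggregate throughput of the cluster
    utilization: float        # fraction of the year spent serving traffic (0 to 1)


def cost_per_million_tokens(d: Deployment) -> float:
    """Amortized yearly cost divided by yearly token output, scaled to 1M tokens."""
    yearly_cost = d.capex_usd / d.lifetime_years + d.opex_usd_per_year
    tokens_per_year = d.tokens_per_second * 3600 * HOURS_PER_YEAR * d.utilization
    return yearly_cost / tokens_per_year * 1_000_000


if __name__ == "__main__":
    # Placeholder numbers chosen only to show the calculation.
    candidates = [
        Deployment("stack-a", 300_000, 3, 50_000, 1_000, 0.5),
        Deployment("stack-b", 200_000, 3, 70_000, 800, 0.5),
    ]
    for d in candidates:
        print(f"{d.name}: ${cost_per_million_tokens(d):.2f} per 1M tokens")
```

Under this simple model, cost per token is inversely proportional to sustained throughput, which is why software optimization for a given chip can matter as much as the purchase price of the hardware.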

For companies evaluating LLM deployments, hardware selection is a critical factor. Different architectures present distinct trade-offs in terms of performance, energy efficiency, and compatibility with the existing software ecosystem. While Nvidia's hardware has historically dominated the market for LLM training and inference, the emergence of alternatives like Huawei's Ascend chips introduces new variables. These alternatives may be considered for reasons of cost, availability, or, as in this case, for strategies of technological independence and data sovereignty, especially in contexts where compliance and air-gapped environments are priorities.

Geopolitical Context and Technological Sovereignty

Jensen Huang's warning is not limited to a matter of commercial competition; it is part of a broader geopolitical context. A country's ability to develop and control its own AI infrastructure, from silicon to foundation models, is increasingly seen as a pillar of technological sovereignty. Reliance on external suppliers for critical components can expose a country to supply chain disruptions, trade restrictions, and data security concerns. DeepSeek's choice to rely on Huawei can be interpreted as a step towards greater technological autonomy for China in the AI sector.

This dynamic underscores why technology decision-makers should weigh not only technical specifications and TCO, but also the long-term implications of the supply chain and geopolitics. Diversifying suppliers and developing internal capabilities for LLM deployment become key strategies to mitigate risks and ensure operational resilience, especially for sensitive or strategic workloads that require maximum control over data and infrastructure.

Perspectives for On-Premise Deployments

The situation highlighted by Nvidia's CEO offers significant insights for organizations evaluating on-premise or hybrid LLM deployments. The ability to optimize models for specific, even non-mainstream, hardware can offer advantages in terms of customized performance and control over long-term operational costs. However, this entails the need to invest in internal expertise for the optimization and management of less common technology stacks.

For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between different hardware architectures, initial (CapEx) and operational (OpEx) costs, and the implications for data sovereignty and compliance. The choice of an AI infrastructure is a strategic decision that goes beyond mere performance, encompassing aspects of control, security, and technological independence, as demonstrated by DeepSeek's move and market reactions.
