The Real Fears of IT: Beyond the "Bork"

In today's technological landscape, the true "fears" of an IT professional lie not in paranormal phenomena but in the complexities and hidden pitfalls of deploying and managing critical systems. Where an unstable operating system was once the main source of anxiety, the focus has shifted to the challenges posed by artificial intelligence, particularly Large Language Models (LLMs). These systems promise far-reaching change, but they also bring infrastructural and operational requirements that can turn into real nightmares if not managed with due care.

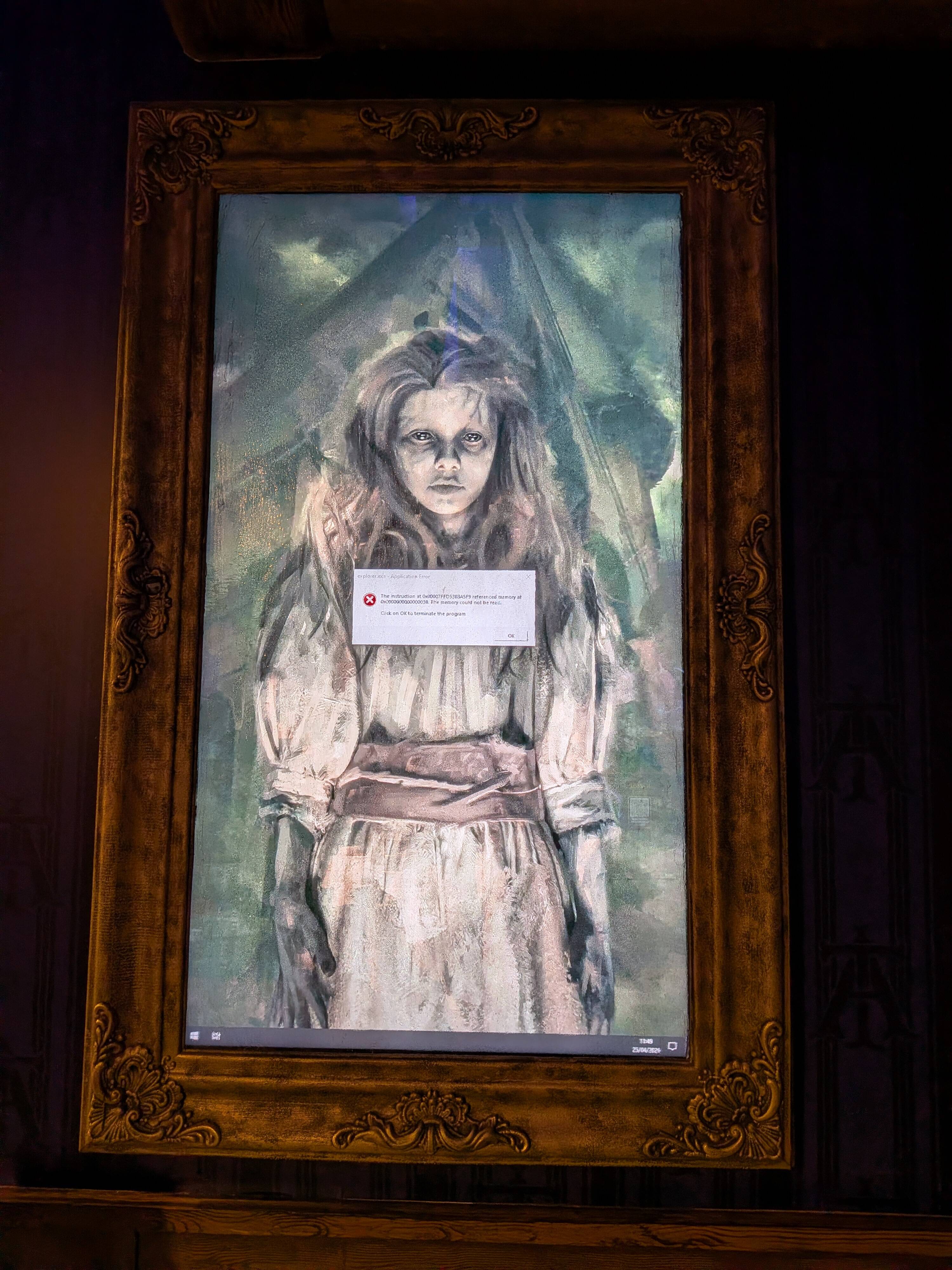

The metaphor of the "bork" (that unexpected sound or error that crashes a system) takes on a deeper meaning when talking about AI workloads. It is not just a software crash but an entire chain of potential failures: from VRAM shortages on a GPU, to network throughput bottlenecks, to compatibility issues between frameworks. Any weak link can compromise the entire inference or training pipeline, generating not only frustration but also significant costs and operational disruptions.

The Challenges of On-Premise Deployment for LLMs

Deploying LLMs in self-hosted or on-premise environments offers advantages in terms of control, security, and data sovereignty, but also introduces significant complexities. Hardware selection is crucial: GPU VRAM, for example, determines both the size of the models that can be run and the length of the context window they can serve. An incorrect evaluation can lead to investments in undersized or oversized silicon, directly impacting the TCO (Total Cost of Ownership).
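To make the link between VRAM, model size, and context window concrete, here is a rough back-of-the-envelope sketch. It assumes a standard decoder-only transformer whose memory is dominated by the weights plus the key/value cache; the `ModelShape` values, the 10% overhead margin, and all function names are illustrative assumptions, not figures from any specific model or vendor.

```python
from dataclasses import dataclass

GIB = 2**30  # bytes per gibibyte


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical model description; all values are illustrative."""
    n_params: int     # total parameter count
    n_layers: int     # number of transformer layers
    n_kv_heads: int   # key/value heads per layer
    head_dim: int     # dimension of each attention head


def weight_bytes(shape: ModelShape, bytes_per_param: float) -> float:
    """Memory for the weights alone (2 bytes/param for fp16, 0.5 for 4-bit)."""
    return shape.n_params * bytes_per_param


def kv_cache_bytes(shape: ModelShape, context_len: int,
                   batch_size: int = 1, bytes_per_elem: int = 2) -> int:
    """KV cache size: one key and one value vector per layer, head, and token."""
    return (2 * shape.n_layers * shape.n_kv_heads * shape.head_dim
            * context_len * batch_size * bytes_per_elem)


def fits_in_vram(shape: ModelShape, vram_gib: float, context_len: int,
                 bytes_per_param: float = 2, overhead_fraction: float = 0.1) -> bool:
    """Rough check; the overhead margin for activations and runtime is an assumption."""
    needed = weight_bytes(shape, bytes_per_param) + kv_cache_bytes(shape, context_len)
    return needed * (1 + overhead_fraction) <= vram_gib * GIB


if __name__ == "__main__":
    # Illustrative shape loosely resembling a 7B-parameter decoder.
    shape = ModelShape(n_params=7_000_000_000, n_layers=32, n_kv_heads=32, head_dim=128)
    print(f"weights (fp16): {weight_bytes(shape, 2) / GIB:.1f} GiB")
    print(f"KV cache @ 4096 tokens: {kv_cache_bytes(shape, 4096) / GIB:.1f} GiB")
    for vram in (16, 24):
        print(f"fits in {vram} GiB: {fits_in_vram(shape, vram, 4096)}")
```

With these illustrative numbers, the fp16 weights take about 13 GiB and a 4,096-token KV cache adds 2 GiB, so the estimate fits a 24 GiB card but not a 16 GiB one. Real deployments should be measured rather than estimated, since serving frameworks allocate memory differently.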

Beyond hardware, managing the software stack is equally challenging. Optimizing performance requires mastering techniques like quantization to reduce the memory footprint of models, or implementing efficient serving frameworks to maximize throughput and minimize latency. Configuring air-gapped environments for stringent security requirements adds another layer of complexity, requiring meticulous planning for system updates and maintenance without compromising isolation.
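As an illustration of how quantization shrinks the memory footprint, the sketch below applies a plain per-tensor symmetric (absmax) int8 scheme to a small list of weights. This is a deliberately simple stand-in: production stacks typically use more elaborate schemes (per-channel or group-wise scales, 4-bit formats), and the function names here are hypothetical.

```python
from array import array


def quantize_int8(weights):
    """Symmetric absmax quantization: map floats to int8 using a single scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = array(
        "b",  # signed 8-bit integers, 1 byte each
        (max(-127, min(127, round(w / scale))) for w in weights),
    )
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    fp32 = array("f", weights)  # 4 bytes per weight
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print(f"fp32 size: {len(fp32.tobytes())} bytes, int8 size: {len(q.tobytes())} bytes")
    print(f"max round-trip error: {max(abs(a - b) for a, b in zip(weights, restored)):.5f}")
```

The int8 copy is a quarter the size of the fp32 one, at the cost of a rounding error of at most half the scale per weight. Whether that accuracy loss is acceptable depends on the model and the task, so it should be validated on representative workloads.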

Data Sovereignty and Security Implications

One of the main drivers for choosing an on-premise deployment is the need to maintain full control over data. Sectors such as finance, healthcare, and public administration are subject to strict regulations (such as the GDPR) that impose specific requirements on data residency and protection. In this context, entrusting sensitive data to an external cloud provider can raise legitimate "fears" about compliance and security.

Managing a self-hosted AI infrastructure means taking full responsibility for security. This includes not only the physical protection of servers and network security but also managing software vulnerabilities and protecting against targeted attacks on the models themselves. A system "borked" due to a security breach can have devastating consequences, far beyond simple downtime, affecting reputation and user trust. The ability to conduct internal audits and maintain a completely isolated environment is a key advantage but requires dedicated skills and resources.

Mitigating Risks and Planning for the Future

To transform "fears" into opportunities, it is essential to adopt a proactive and fact-based approach. Strategic planning must include a thorough analysis of workload requirements, a comparative evaluation of hardware and software options, and a clear understanding of the trade-offs between performance, cost, and operational complexity. There is no one-size-fits-all solution; the choice between different GPUs, frameworks, or deployment strategies must be guided by the specific needs of the organization.

AI-RADAR serves as a resource for decision-makers navigating these complexities, offering analytical frameworks on /llm-onpremise to evaluate the trade-offs and implications of each choice. Understanding concrete hardware specifications, such as the VRAM needed for a given model, or expected performance metrics (tokens/sec, latency), is essential for building a resilient and high-performing infrastructure. Only through rigorous planning and deep technical knowledge can the challenges of on-premise AI deployment be addressed, transforming potential "fears" into confidence and operational control.
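The performance metrics mentioned above (tokens/sec, latency) can be derived from simple per-request measurements. The following is a minimal sketch, assuming each request has been timed end to end and its output tokens counted; `RequestTiming`, `summarize`, and the sample values are hypothetical and not tied to any particular serving framework.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestTiming:
    """One measured inference request (hypothetical record layout)."""
    latency_s: float     # end-to-end latency in seconds
    output_tokens: int   # tokens generated for this request


def percentile(values, pct):
    """Nearest-rank percentile: smallest value covering pct percent of samples."""
    if not values:
        raise ValueError("percentile() needs at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(timings, wall_clock_s):
    """Aggregate throughput over the whole run plus median and tail latency."""
    latencies = [t.latency_s for t in timings]
    total_tokens = sum(t.output_tokens for t in timings)
    return {
        "tokens_per_sec": total_tokens / wall_clock_s,
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
    }


if __name__ == "__main__":
    # Illustrative measurements, not real benchmark data.
    run = [
        RequestTiming(1.0, 100),
        RequestTiming(2.0, 200),
        RequestTiming(3.0, 300),
        RequestTiming(4.0, 400),
    ]
    print(summarize(run, wall_clock_s=5.0))
```

Reporting a tail percentile alongside the median matters because a system that is fast on average can still be unusable if a small share of requests stall.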
