Taiwan Semiconductor Materials: Competitive Scenarios and Impact on On-Premise AI

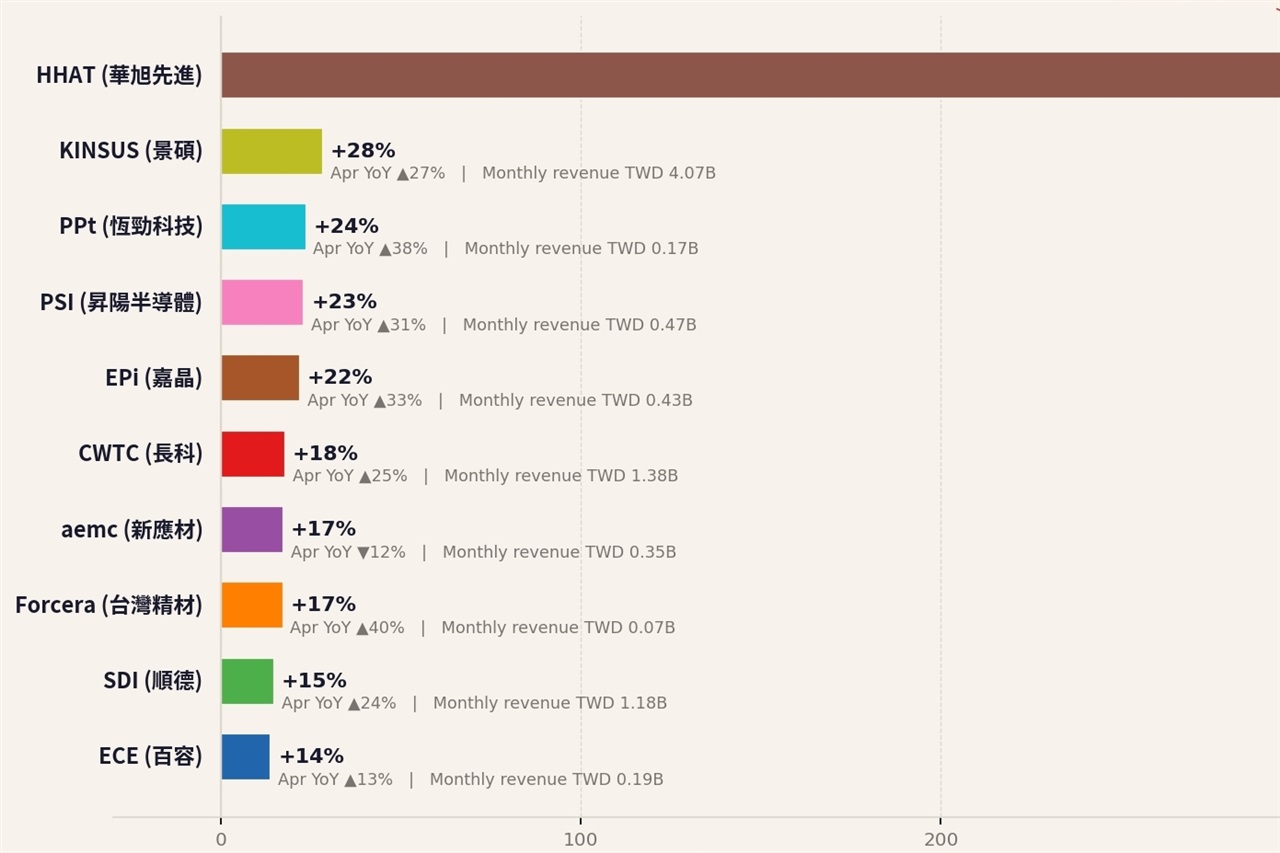

An analysis published by Digitimes forecasts increasing polarization in Taiwan's semiconductor materials sector by April 2026 and characterizes the situation as "two races." The forecast points to possible fragmentation, or at least sharper competition, in a sector on which the entire global technology industry depends. Organizations planning or running on-premise Large Language Model (LLM) deployments should follow these market dynamics, because they shape the cost and availability of the hardware those deployments require.

The availability and cost of semiconductor materials directly influence the production of advanced chips, from CPUs to GPUs, which form the backbone of AI infrastructure. If key players diverge in strategy or technology, the effects could propagate through the supply chain and, in turn, into investment decisions for AI hardware.

The Semiconductor Materials Context and the "Two Races"

Semiconductor materials are the foundation of every electronic component, from silicon wafers to the specialty chemicals and gases used in chip fabrication. Taiwan is a global epicenter for this industry and hosts some of its largest producers and suppliers. The phrase "two races" admits several readings. One is technological differentiation: some players invest in materials for the most advanced process nodes, while others focus on mature, high-volume nodes.

Alternatively, it might reflect geopolitical competition or specialization in distinct market segments, such as materials for high-performance AI versus those for consumer electronics. Regardless of the specific interpretation, such dynamics introduce complexity and potential constraints in the supply chain, making strategic planning even more critical for those dependent on these components.

Implications for On-Premise LLM Deployments

For CTOs, DevOps leads, and infrastructure architects, the dynamics of the semiconductor materials market translate directly into practical considerations for on-premise LLM deployments. The availability of GPUs with large amounts of VRAM, which are essential for inference and fine-tuning of large models, is closely tied to the production capacity and stability of the materials supply chain. Higher material costs or outright shortages could raise the Total Cost of Ownership (TCO) of acquiring and maintaining self-hosted AI infrastructure.
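To make the TCO point concrete, here is a minimal sketch of an undiscounted TCO model for a single self-hosted GPU server, showing how a hardware price increase propagates to total cost. The `GpuServer` fields, the function names, and every figure are hypothetical placeholders chosen for illustration; they are not data from the Digitimes analysis, and the model ignores cooling, floor space, staffing, and discounting.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GpuServer:
    """Hypothetical self-hosted GPU server; every figure is an illustrative assumption."""
    purchase_price: float           # upfront hardware cost (CapEx)
    power_kw: float                 # average power draw, in kilowatts
    electricity_per_kwh: float      # energy price per kWh
    annual_maintenance_rate: float  # yearly support cost as a fraction of purchase price
    lifetime_years: int             # planned service life


def total_cost_of_ownership(server: GpuServer) -> float:
    """Undiscounted TCO: purchase price + energy + maintenance over the service life."""
    hours = server.lifetime_years * 365 * 24
    energy = server.power_kw * hours * server.electricity_per_kwh
    maintenance = (server.purchase_price * server.annual_maintenance_rate
                   * server.lifetime_years)
    return server.purchase_price + energy + maintenance


def tco_after_price_increase(server: GpuServer, increase: float) -> float:
    """TCO if the hardware price rises by the fraction `increase` (0.15 means +15%)."""
    shocked = replace(server, purchase_price=server.purchase_price * (1 + increase))
    return total_cost_of_ownership(shocked)


if __name__ == "__main__":
    # Hypothetical baseline: price, power, energy rate, and maintenance are placeholders.
    baseline = GpuServer(purchase_price=250_000, power_kw=6.0,
                         electricity_per_kwh=0.20, annual_maintenance_rate=0.10,
                         lifetime_years=4)
    base_tco = total_cost_of_ownership(baseline)
    for increase in (0.0, 0.10, 0.20, 0.30):
        tco = tco_after_price_increase(baseline, increase)
        print(f"+{increase:.0%} hardware price -> TCO {tco:,.0f} "
              f"({tco / base_tco - 1:+.1%} vs baseline)")
```

Because maintenance is modeled as a fraction of the purchase price, a price increase also raises maintenance cost, while energy cost is unaffected; the relative TCO increase therefore lands below the relative hardware price increase but above the purchase-price delta alone.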

Where data sovereignty and regulatory compliance (such as GDPR) push companies toward on-premise or air-gapped solutions, reliance on a potentially unstable or polarized supply chain is a risk. The choice between bare-metal deployment and containers on local infrastructure becomes more strategic as a result, since it requires weighing flexibility, cost, and supply chain resilience against one another.

Future Outlook and Mitigation Strategies

The scenario outlined by Digitimes for 2026 underscores the importance of a proactive strategy for managing AI infrastructure. Companies will need to monitor the semiconductor materials market closely and assess its impact on hardware prices and delivery times. Mitigation could include diversifying suppliers, exploring alternative hardware architectures, or investing in software optimization to get more out of existing resources.

Organizations evaluating on-premise deployments can use analytical frameworks to weigh capital expenditure (CapEx) against operating expenditure (OpEx), alongside supply chain resilience and performance requirements. Their ability to anticipate and adapt to these market dynamics will be a key factor in the long-term sustainability and effectiveness of their AI strategies.
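One simple way to frame the CapEx-versus-OpEx trade-off is a break-even calculation: how many months of avoided cloud rental it takes to recover an upfront hardware purchase. This sketch is a deliberately simplified model, not one of the frameworks mentioned above; the function name `breakeven_months` and all figures are hypothetical, and it ignores depreciation, discounting, and utilization.

```python
import math
from typing import Optional


def breakeven_months(capex: float, onprem_monthly_opex: float,
                     cloud_monthly_cost: float) -> Optional[int]:
    """First month in which cumulative on-prem cost (capex + opex) no longer exceeds
    cumulative cloud rental cost. Returns None if on-prem never breaks even."""
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        # Running on-prem costs at least as much per month as renting: no break-even.
        return None
    # Smallest integer m with capex + m * opex <= m * cloud, i.e. m >= capex / saving.
    return math.ceil(capex / monthly_saving)


if __name__ == "__main__":
    # Hypothetical figures: server price, monthly power/maintenance, equivalent cloud rental.
    capex, opex, cloud = 250_000, 3_000, 12_000
    for increase in (0.0, 0.10, 0.20, 0.30):
        months = breakeven_months(capex * (1 + increase), opex, cloud)
        print(f"+{increase:.0%} hardware price -> break-even after {months} months")
```

Comparing the resulting break-even point with the planned service life indicates whether the purchase pays off before the hardware is retired, and rerunning it under different price assumptions shows how sensitive that answer is to supply chain conditions.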