Meta Terminates Sama Contract: Privacy and Sensitive Data from Smart Glasses

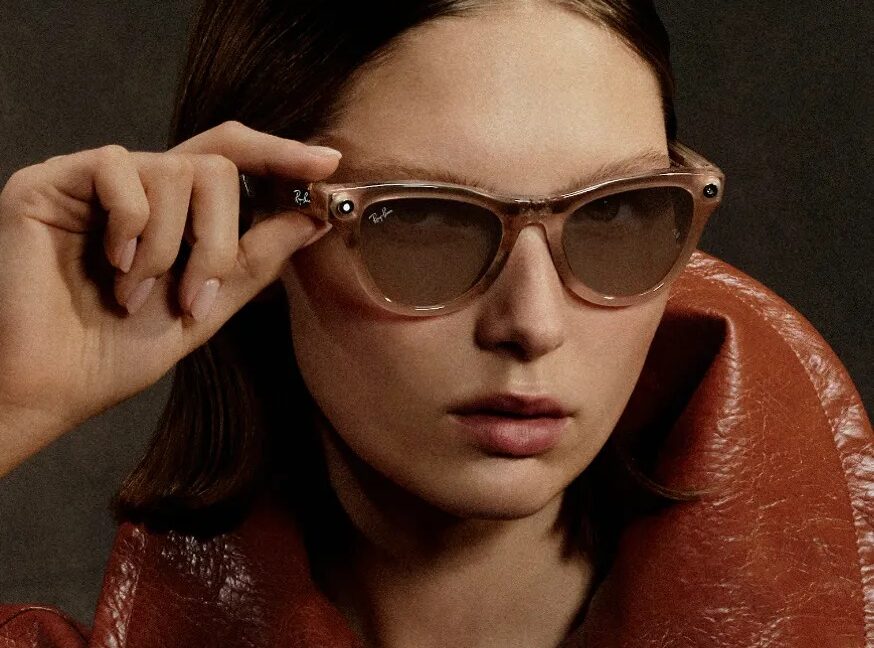

Meta has ended its contractual relationship with Sama, a Kenya-based company specializing in data annotation services. The decision comes approximately two months after reports from numerous Sama workers who claimed to have viewed private and sensitive footage, including explicit content, recorded by Ray-Ban Meta smart glasses. These incidents, initially reported by Swedish newspapers and a Kenyan freelance journalist, have raised serious concerns regarding privacy management and the security of personal data within the context of artificial intelligence system development.

The incident highlights the inherent complexities and risks in outsourcing critical processes such as data annotation, a fundamental step for training and fine-tuning AI algorithms. Sama, which provided Meta with video, image, and speech annotation services for Ray-Ban Meta's AI systems, stated that the contract termination affected 1,108 workers. This episode not only has significant employment consequences but also illuminates the ethical and operational challenges companies face when managing vast volumes of user-generated data.

The Importance of Data Annotation and Privacy Risks

Data annotation is a fundamental pillar for developing robust and high-performing artificial intelligence models. Through this process, raw data – be it images, videos, text, or audio – is labeled and categorized, making it understandable and usable by machine learning algorithms. When it comes to wearable devices like smart glasses, which capture moments of daily life, the nature of the collected data can be extremely personal and sensitive.
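To make the labeling step concrete, here is a minimal sketch in Python of what an annotation record might look like, and how sensitive items could be routed away from a general review queue. Every name in it (`AnnotationRecord`, `SENSITIVE_LABELS`, `annotate`, `route`, the queue names) is hypothetical and chosen for illustration. It does not describe Meta's or Sama's actual tooling, which the reports do not detail.

```python
from dataclasses import dataclass, field
from typing import Iterable, List

# Hypothetical label set; a real taxonomy would be defined by policy and legal teams.
SENSITIVE_LABELS = {"explicit", "nudity", "medical", "financial_document"}


@dataclass
class AnnotationRecord:
    """One labeled item of raw data (assumed schema, for illustration only)."""
    item_id: str
    media_type: str              # e.g. "image", "video", "audio", "text"
    labels: List[str] = field(default_factory=list)
    sensitive: bool = False


def annotate(item_id: str, media_type: str, labels: Iterable[str]) -> AnnotationRecord:
    """Normalize labels and flag the record if any label is in the sensitive set."""
    cleaned = sorted({label.strip().lower() for label in labels if label.strip()})
    is_sensitive = any(label in SENSITIVE_LABELS for label in cleaned)
    return AnnotationRecord(item_id, media_type, cleaned, is_sensitive)


def route(record: AnnotationRecord) -> str:
    """Send sensitive records to a restricted queue instead of the general pool."""
    return "restricted_review" if record.sensitive else "general_queue"


if __name__ == "__main__":
    record = annotate("clip-001", "video", ["Kitchen", "person ", "explicit"])
    print(record.labels, record.sensitive, route(record))
```

In this sketch the sensitivity flag is derived from labels that a human or an upstream classifier has already assigned, which means someone or something still sees the content first. Reducing that exposure would require filtering before annotation, and that is a separate design decision.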

Outsourcing these activities to third parties, often in different jurisdictions, introduces additional layers of complexity in terms of regulatory compliance, data sovereignty, and control over security. For organizations dealing with highly sensitive data, the choice of an annotation partner or the decision to fully internalize the process becomes crucial. The implications of unauthorized exposure or misuse of data can range from reputational damage to severe legal penalties, such as those stipulated by GDPR.

Implications for Data Governance and User Trust

The episode involving Meta and Sama underscores the urgent need for companies to strengthen their data governance policies and implement rigorous controls throughout the entire AI development pipeline. User trust is a valuable and fragile asset, and incidents like this can quickly erode it, impacting the adoption of new technologies and services. For CTOs, DevOps leads, and infrastructure architects, the issue is not just technological but also strategic and legal.

Evaluating the trade-offs between cost, scalability, and control is essential. While outsourcing can offer advantages in terms of efficiency and reduced operational costs (OpEx), it can also lead to a loss of direct control over data and processes, increasing security and compliance risks. This scenario prompts many companies to consider self-hosted alternatives or on-premise deployments for their most sensitive AI workloads, where data sovereignty and the ability to operate in air-gapped environments become absolute priorities.

Future Perspectives and Strategic AI Decisions

The AI industry is constantly evolving, and with it, the challenges related to data management. Events like the one involving Meta serve as a warning for the entire sector, highlighting the importance of a holistic approach to security and privacy from the earliest stages of designing an AI product or service. Infrastructure decisions, whether cloud, hybrid, or on-premise, must be guided not only by performance and TCO considerations but also, and above all, by the ability to ensure data protection and compliance.

For those evaluating on-premise deployments for their LLM workloads, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between control, security, and costs. The ability to keep data within one's own infrastructural boundaries, to apply granular access policies, and to constantly monitor every phase of the data lifecycle can represent a significant competitive advantage and a guarantee of compliance in an increasingly stringent regulatory landscape. The choice of how and where to process data for AI is, now more than ever, a strategic decision that defines an organization's resilience and reliability.
