The AI Chip Race and Nvidia's Stance

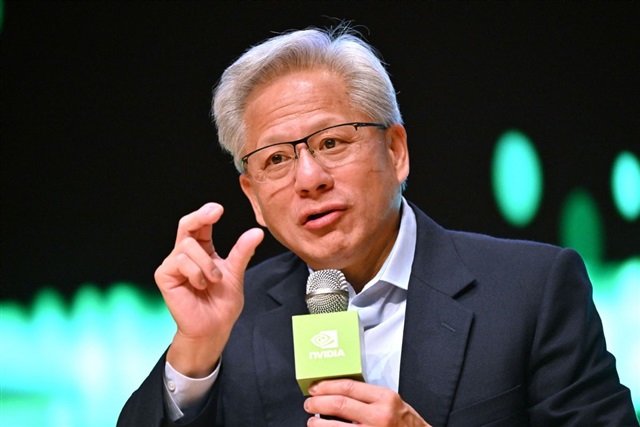

The artificial intelligence landscape is evolving rapidly, driven by growing demand for computing power. At the heart of this transformation is an "AI chip race," in which numerous companies compete to supply the most efficient, highest-performing hardware. Against this backdrop, Nvidia's CEO recently asserted that Google's Tensor Processing Units (TPUs) do not threaten his company's dominant position.

The remark offers insight into Nvidia's strategy and how it views the competition. Nvidia has solidified its leadership in GPUs, which have become a cornerstone for training and inference of large language models (LLMs) and other AI workloads. Google, meanwhile, has developed its own custom accelerators, the TPUs, optimized for its infrastructure and machine learning frameworks. The CEO's statement underscores Nvidia's confidence in its architecture and software ecosystem.

GPU vs. TPU: Architectures Compared

The competition between GPUs and TPUs reflects different approaches to AI acceleration. Nvidia's GPUs, such as the A100 and H100, are massively parallel processors designed for a wide range of computational workloads, including graphics and scientific computing as well as AI. Their success is also tied to CUDA, Nvidia's mature parallel computing platform and programming model, which lets developers fully exploit GPU hardware for training and inference of complex models.
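To make "massively parallel" concrete: in CUDA's programming model, a kernel runs once per thread, and each thread usually derives the array element it owns from its block index and thread index. The Python sketch below only emulates that indexing sequentially (on a real GPU the threads run concurrently); the function name `launch_vector_add` and the block size are illustrative assumptions, not part of any Nvidia API.

```python
import math

def launch_vector_add(a, b, threads_per_block=256):
    """Emulate a 1-D CUDA grid launch for element-wise vector addition."""
    n = len(a)
    out = [0.0] * n
    # Round up so the grid covers all n elements, as a CUDA host program would.
    blocks = math.ceil(n / threads_per_block)
    for block_idx in range(blocks):                  # plays the role of blockIdx.x
        for thread_idx in range(threads_per_block):  # plays the role of threadIdx.x
            i = block_idx * threads_per_block + thread_idx
            if i < n:  # bounds guard: threads in the last block may have no element
                out[i] = a[i] + b[i]
    return out, blocks

result, blocks = launch_vector_add([1.0, 2.0, 3.0], [10.0, 20.0, 30.0],
                                   threads_per_block=2)
```

Here three elements with two threads per block need two blocks, and one thread in the second block sits idle. The same one-thread-per-element pattern is what lets GPUs spread work across thousands of cores.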

Google's TPUs, on the other hand, are application-specific integrated circuits (ASICs) designed specifically to accelerate machine learning workloads in Google's data centers. They are optimized for linear algebra, in particular the matrix multiplications that are central to training and running neural networks. This specialization lets TPUs achieve high efficiency on suitable workloads, particularly those built with frameworks such as TensorFlow and JAX, both developed at Google. Their architecture is designed to scale within Google's cloud infrastructure, serving both Google's internal services and Google Cloud Platform customers.
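Why matrix multiplication matters so much: a dense (fully connected) layer's forward pass multiplies a batch of activations by a weight matrix, so its cost grows with the product of the dimensions. The plain-Python sketch below shows the operation and the common estimate of 2·m·k·n floating-point operations (one multiply and one add per term); the layer sizes are illustrative assumptions. Accelerators such as TPUs perform this operation in dedicated matrix hardware rather than with loops like these.

```python
def matmul(x, w):
    """Naive matrix multiply: x is (m x k), w is (k x n), result is (m x n)."""
    m, k, n = len(x), len(w), len(w[0])
    assert all(len(row) == k for row in x), "inner dimensions must match"
    return [[sum(x[i][p] * w[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]

def dense_forward_flops(batch, d_in, d_out):
    """Approximate FLOPs of a dense layer forward pass: 2 * batch * d_in * d_out."""
    return 2 * batch * d_in * d_out

x = [[1.0, 2.0]]              # batch of 1 with 2 input features
w = [[0.5, -1.0, 0.0],
     [0.25, 1.0, 2.0]]        # maps 2 inputs to 3 outputs
y = matmul(x, w)              # [[1.0, 1.0, 4.0]]

# Hypothetical layer: batch 32, 4096 -> 4096 features, about 1.07 GFLOPs.
flops = dense_forward_flops(batch=32, d_in=4096, d_out=4096)
```

A model stacks many such layers, and training roughly triples the work per layer (forward pass plus gradients), which is why hardware built around fast matrix multiplication pays off.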

Implications for On-Premises Deployments

For enterprises evaluating on-premises deployments of LLMs and other AI applications, hardware selection is a critical decision that goes beyond peak performance metrics. Total cost of ownership (TCO), compatibility with existing infrastructure, available VRAM, throughput, and ease of integration with local software stacks all weigh heavily. Nvidia's GPUs benefit from a mature ecosystem and a broad developer base, which makes them a versatile choice for self-hosted and air-gapped environments.
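VRAM is often the first hard constraint when sizing self-hosted LLM inference. A rough first-pass estimate adds the memory for the model weights to the key/value (KV) cache, which grows with context length and batch size. The sketch below uses that approximation; the function name, the 10% overhead allowance, and the example model shape are hypothetical assumptions, and real frameworks will report different figures.

```python
def estimate_inference_vram_gb(params_billion, bytes_per_param, n_layers,
                               n_kv_heads, head_dim, context_len, batch,
                               kv_bytes=2, overhead_frac=0.10):
    """Rough VRAM estimate in decimal GB for LLM inference.

    Counts model weights plus the KV cache, then adds a flat overhead
    fraction (an assumption) for activations, buffers, and fragmentation.
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    # One K and one V vector per layer, per KV head, per token, per sequence.
    kv_cache_bytes = (2 * n_layers * n_kv_heads * head_dim
                      * context_len * batch * kv_bytes)
    total_bytes = (weight_bytes + kv_cache_bytes) * (1 + overhead_frac)
    return total_bytes / 1e9

# Hypothetical 7B-parameter model in 16-bit precision with a 4,096-token context.
need_gb = estimate_inference_vram_gb(
    params_billion=7, bytes_per_param=2, n_layers=32,
    n_kv_heads=32, head_dim=128, context_len=4096, batch=1)
fits_24gb_card = need_gb <= 24  # hypothetical single-GPU memory budget
```

With these assumptions the estimate is about 17.8 GB. Raising the batch to 2 doubles the KV-cache term and brings it to about 20.1 GB, which is why context length and concurrency matter as much as parameter count when comparing cards.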

Conversely, adopting TPUs outside the Google ecosystem is more difficult, given their proprietary nature and deep integration with Google's cloud infrastructure. This makes GPUs a more accessible and flexible option for organizations that prioritize data sovereignty and full control over their technology stack. Being able to run fine-tuning, inference, and other AI workloads on hardware the organization owns, without relying on external cloud services, is an increasingly common requirement in regulated sectors and in organizations with stringent compliance needs.

Future Outlook and Decision Trade-offs

The "AI chip race" is set to intensify as new players and architectures emerge. The Nvidia CEO's statement reflects the company's confidence in its position, but the market continues to shift. For CTOs, DevOps leads, and infrastructure architects, choosing hardware for AI workloads is never simple. It requires weighing upfront capital expenditure (CapEx) against ongoing operational expenditure (OpEx), along with energy efficiency, scalability, software support, and security requirements.
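The CapEx/OpEx trade-off can be framed as a simple comparison: owned hardware costs its purchase price plus energy and operations over its service life, while rented capacity costs only the hours actually used. The sketch below assumes owned servers draw a constant average power around the clock and uses PUE (facility energy divided by IT energy) to account for cooling; every function name and figure is a hypothetical placeholder, not a vendor price or a complete TCO model.

```python
HOURS_PER_YEAR = 365 * 24

def on_prem_tco(capex, years, avg_power_kw, price_per_kwh, pue, annual_ops_cost):
    """CapEx plus energy and operations OpEx, assuming round-the-clock operation."""
    hours = years * HOURS_PER_YEAR
    # PUE scales IT power up to total facility power (cooling, distribution).
    energy_cost = avg_power_kw * pue * hours * price_per_kwh
    return capex + energy_cost + annual_ops_cost * years

def cloud_cost(hourly_rate, years, utilization):
    """Pay-as-you-go cost: only the fraction of hours actually used is billed."""
    return hourly_rate * years * HOURS_PER_YEAR * utilization

def breakeven_utilization(tco, hourly_rate, years):
    """Utilization above which renting costs more than owning."""
    return tco / (hourly_rate * years * HOURS_PER_YEAR)

# All figures below are hypothetical placeholders.
owned = on_prem_tco(capex=250_000, years=3, avg_power_kw=6.0,
                    price_per_kwh=0.20, pue=1.4, annual_ops_cost=20_000)
rented = cloud_cost(hourly_rate=20.0, years=3, utilization=0.8)
threshold = breakeven_utilization(owned, hourly_rate=20.0, years=3)
```

With these placeholder inputs, owning costs about 354,150 over three years versus about 420,480 for renting at 80% utilization, and the break-even utilization is roughly 67%. Below that level of sustained use, renting would come out ahead under this model.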

The choice between general-purpose GPUs and specialized accelerators such as TPUs ultimately depends on the organization's workload requirements, budget, and deployment strategy. AI-RADAR offers analytical frameworks on /llm-onpremise to help evaluate these trade-offs, providing neutral guidance for decisions that prioritize data sovereignty, control, and TCO in self-hosted environments. Choosing the most suitable hardware is crucial for optimizing performance and cost over the long term.
