Nvidia's Support for AI in National Security

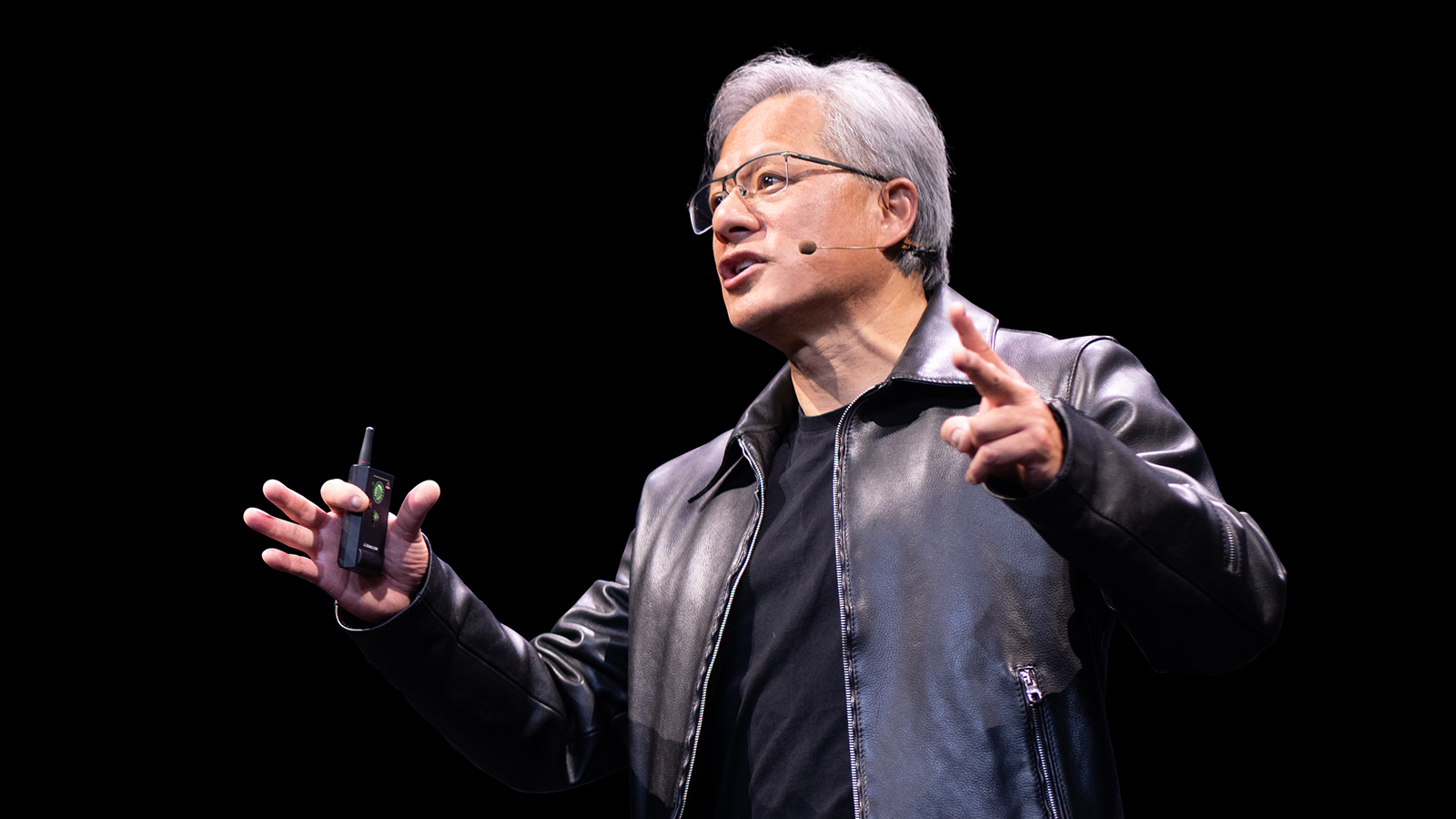

Jensen Huang, CEO of Nvidia, recently reiterated his support for the United States' use of artificial intelligence for national security purposes. His stance underscores the growing strategic relevance of AI, particularly Large Language Models (LLMs), in critical sectors. These sectors demand advanced computational capabilities and also rigorous control over data and the underlying infrastructure. In the same remarks, Huang expressed respect for an unspecified entity while disagreeing with some of its positions. This points to a broader debate on the methods and limits of applying AI in sensitive contexts.

For government organizations and critical infrastructure operators, adopting AI solutions poses unique challenges. The need to ensure data sovereignty, regulatory compliance, and operational security often drives them toward on-premise or air-gapped deployment architectures. These choices are complex to implement. In return, they give the organization direct control over models, training data, and inference pipelines, which is fundamental when national security is at stake.

Technical Implications for Critical Deployments

Implementing AI systems for national security requires demanding hardware and infrastructure. Nvidia GPUs such as the A100 or the more recent H100 offer large VRAM capacity and high throughput. They often sit at the core of these architectures, serving both compute-intensive training and low-latency inference. In self-hosted environments, two decisions become crucial for optimizing performance and energy efficiency: choosing among silicon configurations, and deciding how to split a model across GPUs, for example with tensor parallelism or pipeline parallelism.
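To make the VRAM and tensor-parallelism trade-off more concrete, the following Python sketch estimates how much memory the model weights alone occupy on each GPU when they are split evenly with tensor parallelism. It also finds the smallest power-of-two parallel degree that fits. The function names, the 80% headroom factor, the single-node cap of 8 GPUs, and the 70-billion-parameter example are illustrative assumptions. A real deployment also needs memory for the KV cache, activations, and framework overhead, which this estimate deliberately ignores.

```python
# Bytes needed to store one parameter in common formats (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb_per_gpu(params_billion: float, dtype: str, tp_degree: int) -> float:
    """Approximate GB of weights held by each GPU when the weights are split
    evenly across `tp_degree` GPUs (an idealized tensor-parallel layout)."""
    total_gb = params_billion * BYTES_PER_PARAM[dtype]  # 1e9 params x bytes / 1e9
    return total_gb / tp_degree


def min_tp_degree(params_billion: float, dtype: str, gpu_vram_gb: float,
                  headroom: float = 0.8, max_tp: int = 8) -> int:
    """Smallest power-of-two tensor-parallel degree whose per-GPU weight share
    fits in `headroom` x VRAM, leaving the rest for KV cache and activations.
    Raises if more than `max_tp` GPUs (a hypothetical single-node cap) are needed."""
    tp = 1
    while weight_gb_per_gpu(params_billion, dtype, tp) > gpu_vram_gb * headroom:
        tp *= 2
        if tp > max_tp:
            raise ValueError("model weights do not fit on a single node")
    return tp


# Hypothetical 70B-parameter model on GPUs with 80 GB of VRAM each.
for dtype in ("fp16", "int8", "int4"):
    tp = min_tp_degree(70, dtype, gpu_vram_gb=80)
    print(f"{dtype}: tp={tp}, {weight_gb_per_gpu(70, dtype, tp):.1f} GB of weights per GPU")
```

With these assumptions, the sketch suggests four GPUs at FP16, two at INT8, and one at INT4 for the hypothetical 70B model. This also shows how quantization can shift the hardware requirement.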

An on-premise deployment requires a thorough evaluation of the Total Cost of Ownership (TCO). TCO includes the initial capital expenditure (CapEx) for hardware and network infrastructure, and also the operating costs for power, cooling, and maintenance. The ability to run AI workloads on bare metal or in orchestrated containers (e.g., with Kubernetes) is essential for keeping the necessary flexibility and scalability while ensuring that sensitive data never leaves the organization's control perimeter. Model quantization, for example, can reduce VRAM requirements and improve throughput. However, its impact on model accuracy must be evaluated carefully, a critical trade-off in security applications.
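The precision side of the quantization trade-off can be illustrated with a minimal Python sketch of symmetric per-tensor INT8 quantization. This is one common scheme among several; per-channel and group-wise variants are also widely used. The weight values and function names are hypothetical.

Storing each weight in one byte instead of two halves weight memory relative to FP16. The cost is that every value is rounded to a multiple of a single shared scale. As a result, one outlier weight can inflate that scale and coarsen the representation of all the other weights.

```python
import random


def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: one scale maps
    [-max|w|, +max|w|] onto the integer range [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Map int8 codes back to approximate floating-point weights."""
    return [v * scale for v in q]


def mean_abs_error(original, restored):
    """Average absolute difference between original and restored weights."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# Hypothetical layer: 10,000 weights drawn from N(0, 0.02^2).
random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(10_000)]

q, scale = quantize_int8(weights)
err = mean_abs_error(weights, dequantize_int8(q, scale))

# Same layer plus one large outlier: the shared scale grows, so every
# ordinary weight is represented more coarsely (outlier excluded from the error).
q_out, scale_out = quantize_int8(weights + [1.0])
err_out = mean_abs_error(weights, dequantize_int8(q_out, scale_out)[:-1])

print(f"no outlier:   scale={scale:.6f}  mean abs error={err:.6f}")
print(f"with outlier: scale={scale_out:.6f}  mean abs error={err_out:.6f}")
```

The gap between the two printed errors shows why quantization's effect on accuracy has to be measured on the actual model and task rather than assumed.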

Strategic Context and Decision-Making Trade-offs

Jensen Huang's position comes against a global backdrop in which the AI race is seen as decisive for technological leadership and security. The debate on the ethical implications, governance, and control of AI is more intense than ever. For entities operating in highly sensitive sectors, choosing between cloud-based AI solutions and investment in on-premise infrastructure is a strategic decision. The cloud offers scalability and avoids large upfront capital costs. Self-hosted solutions, by contrast, guarantee full data sovereignty and the ability to operate in air-gapped environments, which is essential for preventing unauthorized access or information leaks.
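The CapEx-versus-OpEx reasoning behind this decision can be sketched as a cumulative cost comparison in Python. Every figure in the example is a hypothetical placeholder:

- hardware cost
- IT power draw
- PUE, used as a proxy for cooling overhead
- electricity price
- maintenance
- a flat annual cloud bill

The model also leaves out staffing, depreciation, financing, and hardware refresh cycles. It therefore illustrates the shape of the trade-off, not a real TCO.

```python
HOURS_PER_YEAR = 24 * 365


def onprem_cumulative_cost(capex, it_power_kw, pue, price_per_kwh,
                           annual_maintenance, years):
    """Cumulative on-premise cost at the end of each year: upfront CapEx plus
    energy (IT load x PUE, so cooling overhead is included) and maintenance."""
    annual_energy = it_power_kw * pue * HOURS_PER_YEAR * price_per_kwh
    costs, total = [], capex
    for _ in range(years):
        total += annual_energy + annual_maintenance
        costs.append(total)
    return costs


def cloud_cumulative_cost(annual_spend, years):
    """Cumulative cloud cost, assuming a flat annual bill and no upfront cost."""
    return [annual_spend * (year + 1) for year in range(years)]


def breakeven_year(onprem, cloud):
    """First year (1-based) in which cumulative on-premise cost is at or below
    cumulative cloud cost; None if that never happens within the horizon."""
    for year, (a, b) in enumerate(zip(onprem, cloud), start=1):
        if a <= b:
            return year
    return None


# Hypothetical placeholder inputs (currency units are arbitrary).
YEARS = 5
onprem = onprem_cumulative_cost(capex=400_000, it_power_kw=10, pue=1.4,
                                price_per_kwh=0.15, annual_maintenance=40_000,
                                years=YEARS)
cloud = cloud_cumulative_cost(annual_spend=200_000, years=YEARS)

for year, (a, b) in enumerate(zip(onprem, cloud), start=1):
    print(f"year {year}: on-prem {a:,.0f}  cloud {b:,.0f}")
print("breakeven year:", breakeven_year(onprem, cloud))
```

With these placeholder inputs, the on-premise option costs more in the first two years because of its upfront CapEx. It becomes cumulatively cheaper in year 3. Different inputs can move the breakeven point considerably or remove it entirely.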

These trade-offs are not only technical but also economic and strategic. Many national security actors need to fine-tune proprietary LLMs on sensitive data while keeping complete control over the development and deployment pipeline. For them, this ability is both a competitive advantage and an operational necessity. The choice of deployment framework and orchestration tools must balance performance, security, and ease of management.

Future Outlook for AI and Sovereignty

The evolution of artificial intelligence will continue to present new challenges and opportunities for national security. The ability to develop, deploy, and manage LLMs securely and under full organizational control will be a fundamental pillar of resilience and innovation. Statements from industry leaders like Huang highlight the complexity of this scenario, in which technological innovation must be reconciled with demands for control and sovereignty.

For CTOs, DevOps leads, and infrastructure architects evaluating these alternatives, AI-RADAR offers analytical frameworks on /llm-onpremise to understand the trade-offs between self-hosted and cloud solutions. The final decision will depend on a careful analysis of specific requirements in terms of security, compliance, performance, and TCO, with an increasing emphasis on the ability to maintain full control over critical digital assets.
