The Evolution of OpenAI Codex on the Desktop

OpenAI has announced the release of a new version of its Codex desktop application, bringing with it a wide range of features and modifications. These updates are designed to extend Codex's capabilities, moving from specific developer tools to support for more general "knowledge work," a clear indication of OpenAI's desire to make artificial intelligence more pervasive in users' daily activities.

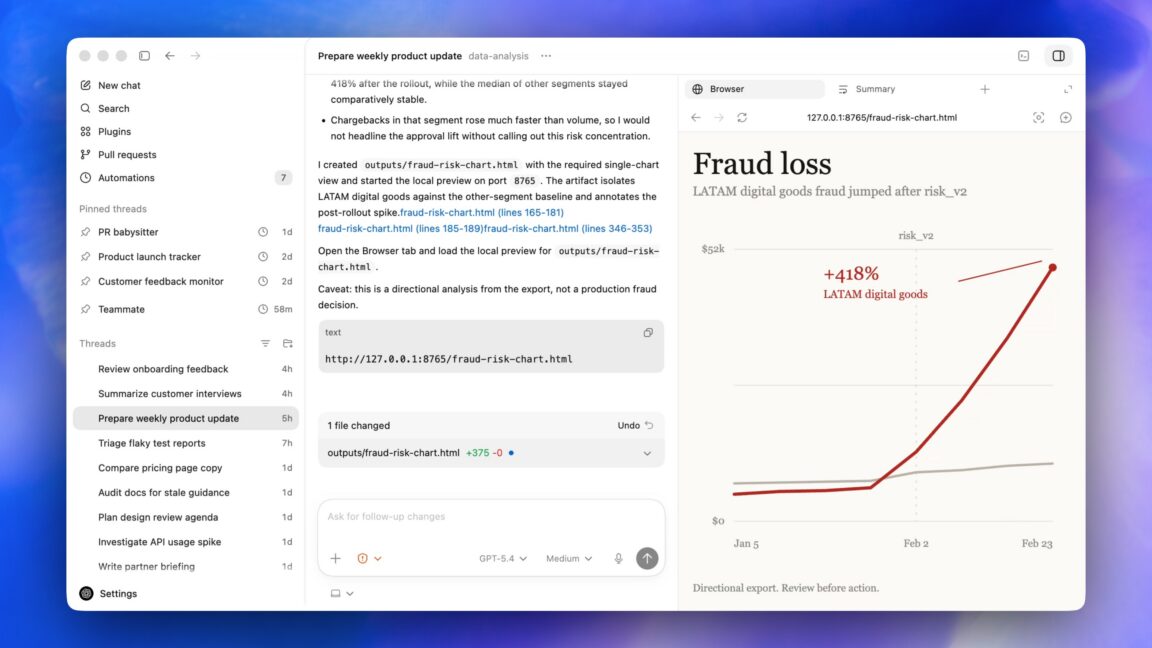

Among the new features, the most notable for users is the ability to perform tasks on the PC in the background. OpenAI has stated that this feature is designed to run without interfering with whatever the user is doing on their desktop, promising a fluid and non-intrusive experience. Beyond the productivity gain, it is also a step in OpenAI's strategy for building its "super app," a central application that integrates various AI functionalities into a single ecosystem.

Background Processing: Technical and Operational Implications

The ability of an AI application to operate in the background on a local PC raises significant technical questions, particularly regarding resource management. Running Large Language Models (LLMs) locally often requires substantial computing power, frequently involving the GPU and a considerable amount of VRAM. Ensuring that such processes do not degrade the performance of the operating system or of other applications in use is an engineering challenge that demands optimization of scheduling, model quantization, and memory management.
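To make the role of quantization concrete, here is a rough sketch of how the memory needed for model weights shrinks as precision drops. The function name `weight_memory_gib` and the 7-billion-parameter model are illustrative assumptions, not details of Codex. The estimate covers weights only and ignores activations, the KV cache, and runtime overhead.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GiB.

    Ignores activations, KV cache, and runtime overhead, so real
    usage will be higher than this figure.
    """
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 2**30


# Hypothetical 7-billion-parameter model at common precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gib(7e9, bits):.1f} GiB")
```

Under these assumptions, halving the bits per weight halves the memory the weights occupy, which is why quantization is a common lever when a model must share a machine with other applications.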

This functionality aligns with the growing trend towards distributed AI and "edge" processing, where AI workloads are moved closer to the data source or the end user. Local execution reduces the latency associated with cloud API calls and can improve application responsiveness. For businesses, this means being able to leverage the computing power available on user devices, potentially reducing reliance on external cloud infrastructure for specific inference operations.

Data Sovereignty and TCO for the Enterprise

For organizations operating in regulated sectors or with stringent privacy requirements, the ability to process data locally via a desktop application like Codex offers significant advantages in terms of data sovereignty. Keeping data within the corporate perimeter, or even on the individual user's device, can simplify compliance with regulations like GDPR and reduce the risks associated with transferring sensitive information to external cloud services. This aspect is crucial for companies evaluating air-gapped or self-hosted deployment strategies.

From a Total Cost of Ownership (TCO) perspective, AI solutions that run on local hardware follow a different cost model from cloud services. They may require an upfront investment in more powerful user devices, but local execution can reduce the recurring costs of cloud inference, which typically scale with usage. For those evaluating on-premises deployment, the trade-offs between CapEx and OpEx are complex; AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate them in detail, considering factors such as desired throughput and acceptable latency.
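The CapEx versus OpEx trade-off can be illustrated with a simple break-even calculation. This is a minimal sketch: the function `break_even_months` and all figures are hypothetical placeholders rather than real pricing, and it deliberately ignores depreciation, energy costs, and support overhead.

```python
from typing import Optional


def break_even_months(
    hardware_upgrade_cost: float,
    monthly_cloud_cost: float,
    monthly_local_cost: float,
) -> Optional[float]:
    """Months until a one-off hardware spend is recovered by lower running costs.

    Returns None when local execution is not cheaper per month, since
    the upfront investment is then never recovered.
    """
    monthly_saving = monthly_cloud_cost - monthly_local_cost
    if monthly_saving <= 0:
        return None
    return hardware_upgrade_cost / monthly_saving


# Hypothetical per-user figures, for illustration only.
months = break_even_months(
    hardware_upgrade_cost=600.0,  # one-off device upgrade (CapEx)
    monthly_cloud_cost=40.0,      # usage-based cloud inference (OpEx)
    monthly_local_cost=10.0,      # extra power and maintenance (OpEx)
)
print(months)
```

A short break-even period favors the local model, while a result of `None` or a period longer than the device's expected lifetime favors staying in the cloud.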

Towards the "Super App" and the Future of Local AI

OpenAI's vision of a "super app" based on Codex, powered by background processing capabilities, suggests a future where AI will be deeply integrated into operating systems and daily workflows. This integration could transform how users interact with their computers, delegating complex tasks to AI without visible interruptions or perceptible slowdowns.

This development reflects a broader trend in the tech industry towards balancing centralized cloud computing power with the benefits of distributed processing. While the cloud remains essential for large-scale model training and intensive workloads, desktop applications with local AI capabilities, such as the new version of Codex, highlight the control, privacy, and efficiency that on-device processing can offer for specific operational and strategic needs.
