Introduction

OpenAI has recently expanded ChatGPT's capabilities with a new feature dedicated to personal finance. The feature lets subscribers connect their financial data directly to the chatbot and query their banking and investment information in conversation.

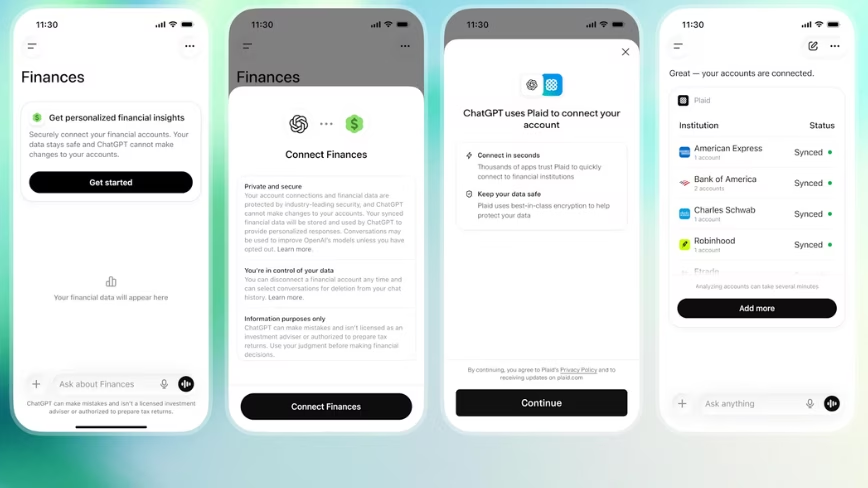

The feature, launched on May 15, is currently available as a preview for ChatGPT Pro users in the United States, on the web and on iOS. The stated goal is to make it more convenient to access and analyze personal financial data through a conversational interface.

Technical Details and Functionality

Specifically, the new experience enables users to link a wide range of financial accounts, including bank accounts, credit cards, investment portfolios, and loan accounts. Once the connection is established, subscribers can ask ChatGPT questions based on their real financial data, receiving personalized answers and analyses.

This integration represents a significant step towards using LLMs for tasks that require processing highly sensitive information. The source does not specify the technical details of the integration, but it plausibly relies on third-party APIs for accessing banking data, with an emphasis on security and encrypted communication.

Implications for Data Sovereignty and Security

The introduction of a feature that handles personal financial data immediately raises crucial questions regarding data sovereignty and security. For companies and individuals operating in regulated sectors or requiring strict control over their information, the idea of entrusting such sensitive data to a third-party cloud service can pose a significant challenge.

Deployment decisions for LLMs that process sensitive data often gravitate towards self-hosted or air-gapped solutions precisely to maintain complete control over infrastructure and data. Evaluating the TCO in these scenarios includes not only hardware and software costs but also those related to regulatory compliance and risk management. For those considering on-premise deployment, AI-RADAR offers analytical frameworks on /llm-onpremise to assess trade-offs between control, security, and operational costs.
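The cost components named above can be put side by side in a simple model. The following is a minimal sketch, assuming a flat annual cost per component and no discounting; the class name, field names, and all figures are hypothetical placeholders rather than estimates from the source.

```python
from dataclasses import dataclass


@dataclass
class DeploymentCosts:
    """Annual cost components for one deployment option (hypothetical units)."""
    hardware: float       # amortized servers, accelerators, networking
    software: float       # licenses, support contracts, hosted-service fees
    compliance: float     # audits, certifications, legal review
    expected_risk: float  # probability-weighted cost of incidents

    def annual_total(self) -> float:
        return self.hardware + self.software + self.compliance + self.expected_risk


def tco(costs: DeploymentCosts, years: int) -> float:
    """Total cost of ownership over a planning horizon, ignoring discounting."""
    if years <= 0:
        raise ValueError("years must be positive")
    return costs.annual_total() * years


# Placeholder figures chosen only to exercise the code; they are not estimates.
on_premise = DeploymentCosts(hardware=100.0, software=20.0, compliance=30.0, expected_risk=10.0)
cloud = DeploymentCosts(hardware=0.0, software=90.0, compliance=40.0, expected_risk=25.0)

print(tco(on_premise, years=3))  # 480.0
print(tco(cloud, years=3))       # 465.0
```

Listing compliance and risk as explicit fields keeps them from being overlooked next to hardware and software. In practice each component would need its own estimate, and the comparison can go either way depending on those inputs.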

Future Prospects and Trade-offs

While the convenience of such deep integration is undeniable for the end user, the trade-off between accessibility and data security remains a focal point. Organizations considering LLMs for similar applications must carefully weigh the risks of managing financial data in environments they do not fully control.

The choice between a cloud-based approach and an on-premise deployment becomes even more critical when dealing with personal and financial information. The ability to keep data within one's own infrastructural boundaries, while ensuring compliance with local and international regulations, is a determining factor for many technology decision-makers.
