AI and Code Generation: OpenAI's Stance

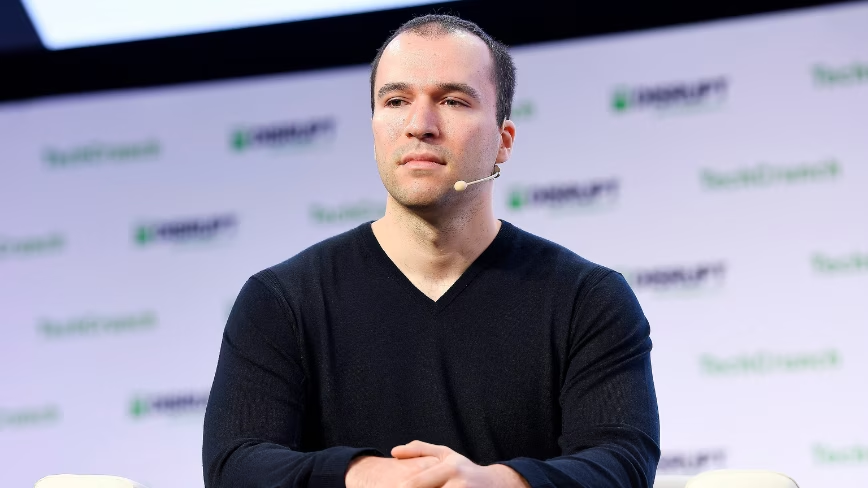

Greg Brockman, President of OpenAI, recently drew the tech industry's attention with a striking claim: artificial intelligence now writes approximately 80% of the company's code. He made the statement at Sequoia's AI Ascent 2026 conference, a key event for leaders and innovators in AI.

The assertion, while remarkable, fits an observed pattern in which leaders of AI research labs cite high, self-reinforcing productivity numbers. The source emphasizes, however, that the evidence on the actual productivity of AI-driven coding remains far more contested than the headline figure suggests, and that deeper analysis is needed.

The Context of AI Productivity Claims

The debate over AI's ability to generate code efficiently and reliably is not new. Tools like GitHub Copilot, based on Large Language Models (LLMs), have already shown that they can accelerate development by helping programmers write code snippets, fix errors, and generate tests. However, the quality of the generated code, its maintainability, and the need for human oversight remain critical points.

Claims that such a high percentage of code is AI-generated raise important questions about how the figure is measured and evaluated. AI-generated code often requires careful review, debugging, and integration, and these steps can consume a significant portion of developers' time and offset part of the productivity gain. This makes the true benefit of these technologies hard to quantify, particularly in complex and mission-critical development environments.
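To make the measurement point concrete, here is a minimal sketch of how review overhead can erode a headline figure. The function name, the simple cost model, and every number in it are illustrative assumptions, not data from OpenAI or from the source.

```python
def net_hours_saved(baseline_hours, ai_share, generation_speedup, review_overhead):
    """Estimate hours saved versus writing everything by hand.

    All parameters are hypothetical inputs for illustration:
      baseline_hours     - hours needed to write the code manually
      ai_share           - fraction of the code produced by AI (0.0 to 1.0)
      generation_speedup - how many times faster AI-assisted authoring is
      review_overhead    - review/debug/integration time for AI code,
                           as a fraction of its manual authoring time
    """
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    if generation_speedup <= 0:
        raise ValueError("generation_speedup must be positive")

    # Work that is still written by hand.
    human_hours = baseline_hours * (1.0 - ai_share)

    # Manual-equivalent effort for the AI-written portion.
    ai_portion = baseline_hours * ai_share

    # AI portion costs authoring time plus review, debugging, and integration.
    ai_hours = ai_portion / generation_speedup + ai_portion * review_overhead

    return baseline_hours - (human_hours + ai_hours)


if __name__ == "__main__":
    # Same 80% share, two assumed review overheads.
    for overhead in (0.5, 0.9):
        saved = net_hours_saved(100, 0.8, 10, overhead)
        print(f"review overhead {overhead:.0%}: about {saved:.1f} hours saved per 100")
```

Under these assumed inputs, the same 80% share yields a saving of roughly a third of the baseline at 50% review overhead and essentially none at 90%, which is why the share of AI-written code alone says little about productivity.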

Implications for Deployment Strategies and TCO

For companies evaluating the adoption of LLMs for code generation, the implications extend beyond mere developer productivity. The choice between cloud solutions and self-hosted or on-premise deployment becomes crucial. If AI generates a significant portion of proprietary code, data sovereignty and regulatory compliance become paramount. Organizations might prefer to maintain complete control over their data and models, opting for bare metal or air-gapped infrastructures to ensure maximum security and confidentiality.

Total Cost of Ownership (TCO) analysis is another determining factor. While AI can reduce development time, it introduces new costs related to inference infrastructure, energy, model management, and fine-tuning. The need for high-performance GPUs with adequate VRAM to run complex LLMs locally, or the management of robust deployment pipelines, requires significant investment. For those evaluating on-premise deployment, there are trade-offs that AI-RADAR analyzes through specific frameworks, available at /llm-onpremise, to balance performance, security, and costs.
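The cost categories above can be sketched as a simple break-even comparison. This is a toy model under stated assumptions: the class names, the cost breakdown, and all figures are hypothetical placeholders, not real prices and not the AI-RADAR frameworks mentioned above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OnPremCosts:
    hardware: float           # upfront spend on GPU servers (hypothetical)
    annual_energy: float      # power and cooling per year
    annual_operations: float  # staff, model management, fine-tuning per year

    def total(self, years: int) -> float:
        return self.hardware + years * (self.annual_energy + self.annual_operations)


@dataclass
class CloudCosts:
    annual_usage: float       # metered inference spend per year (hypothetical)
    annual_operations: float  # integration and pipeline upkeep per year

    def total(self, years: int) -> float:
        return years * (self.annual_usage + self.annual_operations)


def break_even_year(on_prem: OnPremCosts, cloud: CloudCosts, horizon: int = 10) -> Optional[int]:
    """Return the first year in which cumulative on-premise cost is no higher
    than cumulative cloud cost, or None if that never happens within the horizon."""
    for year in range(1, horizon + 1):
        if on_prem.total(year) <= cloud.total(year):
            return year
    return None


if __name__ == "__main__":
    # Placeholder figures chosen only to show the shape of the calculation.
    on_prem = OnPremCosts(hardware=300_000, annual_energy=20_000, annual_operations=80_000)
    cloud = CloudCosts(annual_usage=180_000, annual_operations=20_000)
    print("break-even year:", break_even_year(on_prem, cloud))
```

A real analysis would also need to account for factors this sketch leaves out, such as hardware refresh cycles, utilization, and the compliance value of keeping proprietary code in-house.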

Future Prospects and Critical Evaluation

The vision of a future where AI writes most of the code is fascinating and promising, but it requires a pragmatic evaluation. As LLMs continue to improve, their effective integration into enterprise development workflows will depend on the ability to address challenges such as managing complexity, ensuring code security, and optimizing hardware resources. Transparency in productivity metrics and a clear understanding of technical and economic trade-offs will be essential for strategic decisions.

Companies will need to consider not only the speed of code generation but also its quality, ease of maintenance, and impact on development culture. AI as an enhancement tool for developers, rather than a complete replacement, appears to be the most realistic and sustainable perspective in the short to medium term, especially for organizations operating with stringent data control and security requirements.
