OpenAI launches GPT-Rosalind for biological research

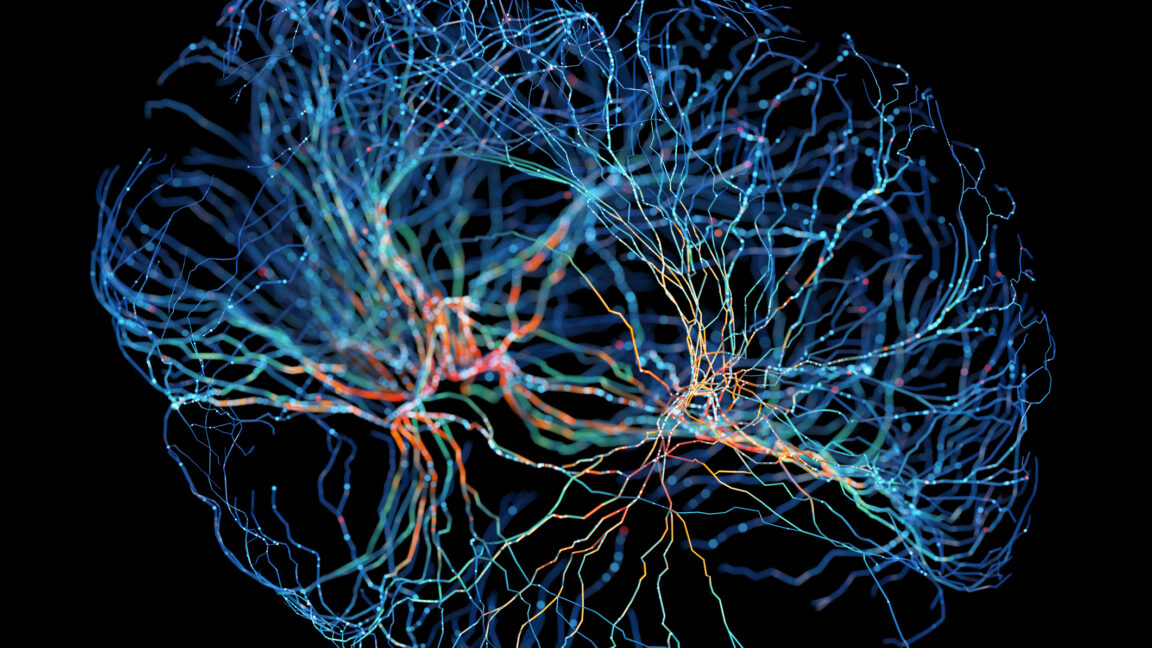

OpenAI has recently introduced GPT-Rosalind, a new Large Language Model (LLM) designed specifically around common workflows in biology. Named after the renowned scientist Rosalind Franklin, the model departs from the more general-purpose approach that other technology companies have taken with their scientific models, opting instead for targeted specialization.

The move marks a shift in how LLMs are applied, from broad-spectrum solutions to vertical tools that can address sector-specific needs with greater precision. OpenAI's decision to focus on biology reflects a growing awareness of the potential of LLMs to support complex disciplines, where the volume of data and the specificity of the language are significant obstacles for researchers.

Technical details and model capabilities

During a press briefing, Yunyun Wang, Life Sciences Product Lead at OpenAI, explained that GPT-Rosalind was designed to address two main obstacles that biology researchers face daily. The first is handling massive datasets, generated by decades of genome sequencing and protein biochemistry, whose sheer size can exceed what a single researcher is able to analyze. The second is the highly specialized nature of biology's subfields, each with its own techniques and jargon, which makes it hard for a geneticist, for example, to make sense of the vast neurobiology literature.

To overcome these challenges, OpenAI trained the LLM on 50 of the most common biological workflows and taught it how to access the major public databases of biological information. This targeted training enables the system to suggest likely biological pathways and to prioritize potential drug targets. Wang stressed that the goal is to "connect genotype to phenotype through known pathways and regulatory mechanisms, infer likely structural or functional properties of proteins, and really leverage this mechanistic understanding".

Context and implications for deployment

The emergence of specialized LLMs such as GPT-Rosalind highlights a key trend in the artificial intelligence landscape: the need for models that are not only powerful but also deeply contextualized. While general-purpose models offer versatility, applications in critical fields such as biology and medicine require a nuanced understanding and a level of precision that only domain-specific training can provide. This approach can make research and development more efficient, reducing the time and cost associated with manually analyzing complex data.

For organizations that work with sensitive data, such as biological or medical data, the choice of how to deploy these LLMs becomes crucial. The need to maintain data sovereignty, ensure regulatory compliance (for example with the GDPR), and operate in air-gapped or self-hosted environments is pushing many organizations to evaluate on-premise or hybrid solutions. AI-RADAR, for example, offers analytical frameworks at /llm-onpremise to help assess the trade-offs between cost, control, and performance in local deployment scenarios, a fundamental consideration when working with models that process such sensitive information.

Future prospects for vertical LLMs

The direction OpenAI has taken with GPT-Rosalind points to a future in which LLMs will be not only general-intelligence tools but also highly qualified experts in specific domains. This verticalization could accelerate scientific discoveries and innovation in fields that traditionally require years of intensive research. An LLM's ability to navigate and synthesize information from very large datasets and highly specialized literature can democratize access to knowledge and empower researchers, letting them concentrate on hypotheses and experiments rather than on the mere aggregation of data.

However, developing and maintaining these specialized models poses significant challenges, including the compute requirements for fine-tuning and the continuous updating of knowledge bases. Choosing a vertical LLM is therefore a matter not only of capability but also of infrastructure strategy and of managing the Total Cost of Ownership (TCO) for companies that intend to integrate such models into their workflows. The success of initiatives like GPT-Rosalind will depend on their ability to integrate effectively into existing research ecosystems and to deliver tangible value that justifies the investment in resources and infrastructure.
