The Race for AI Infrastructure Slows: 40% of US Data Centers at Risk of Delay

Silicon Valley is pouring hundreds of billions of dollars into building ever-larger artificial intelligence data centers, massive facilities that require as much electricity as hundreds of thousands of US homes. However, this buildout is running into significant construction and power supply problems, exacerbated by growing local resistance. A recent analysis indicates that nearly 40% of US data center projects scheduled for completion in 2026 may not be finished this year as planned.

These delays not only complicate companies' ability to scale their AI operations but also raise questions about the sustainability and long-term planning of the infrastructure required to power the next generation of Large Language Models (LLMs) and other AI applications. For organizations evaluating self-hosted LLM deployment, the availability and delivery times of physical infrastructure become critical factors in assessing the Total Cost of Ownership (TCO) and adoption strategy.

Analysis Details and Impact on Tech Giants

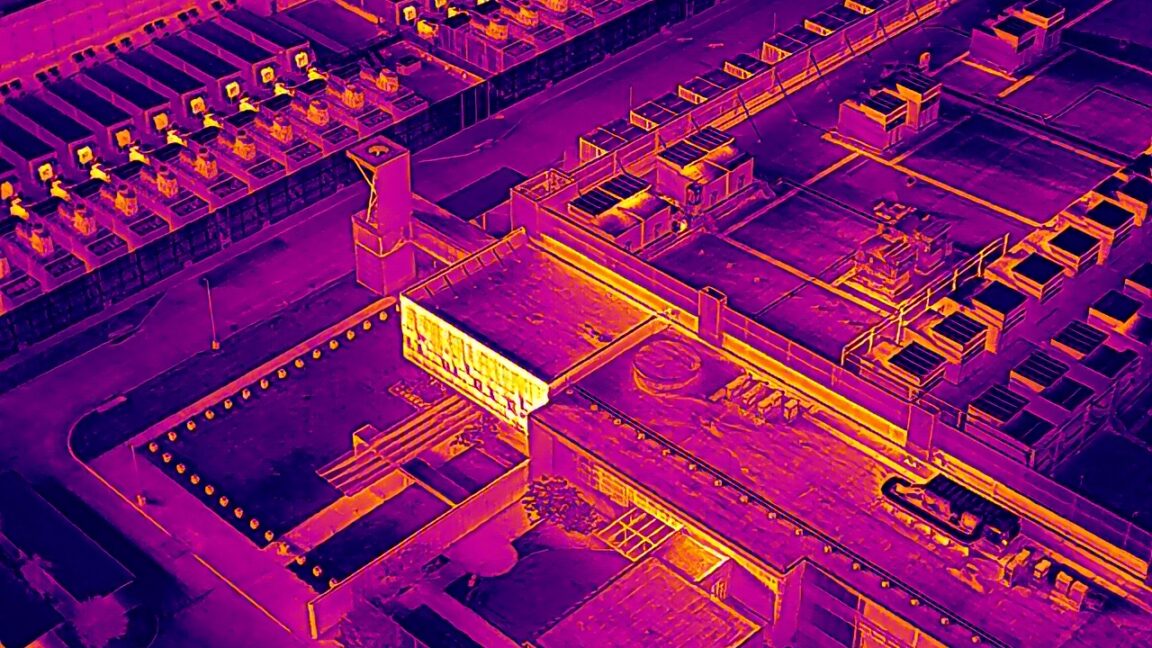

The analysis, conducted by the Financial Times, used satellite imagery provided by geospatial data analytics company SynMax to track progress in land clearing and foundation laying for each data center project. This data was then cross-referenced with public statements and permit documents compiled by the industry research group IIR Energy. The comparison showed that major projects from tech companies such as Microsoft, Oracle, and OpenAI are likely to miss their completion dates by more than three months.

Because these estimates rest on observed construction progress rather than on announcements alone, they offer a clear view of the difficulties the industry is facing. The implications are significant: these data centers are intended to host the computing resources for training and inference of increasingly complex AI models, so delays directly affect the market's ability to meet the growing demand for computational power.

Causes of Slowdowns: Labor, Power, and Bureaucracy

Interviews with over a dozen industry executives, reported by the Financial Times, highlighted the primary causes of these delays. Among them are chronic shortages of skilled labor, particularly electricians and pipe fitters, who are crucial for the complex installation of systems within data centers. This is compounded by issues in power supply and the availability of specific equipment, as well as lengthy processes for obtaining necessary permits.

These constraints are not merely logistical or financial; they also reflect a broader challenge in the supply chain and in infrastructure planning. The demand for power for AI data centers is unprecedented, and both the limited capacity of existing electrical grids to support such a load and local community resistance to new infrastructure represent significant hurdles. For companies considering self-hosted AI infrastructure, these factors translate into longer deployment times and potentially higher costs.

Future Outlook and Implications for LLM Deployment

The delays in AI data center construction underscore a growing tension between the tech sector's expansion ambitions and operational realities on the ground. The availability of adequate infrastructure is a fundamental prerequisite for the large-scale adoption of artificial intelligence, particularly for workloads requiring high computational power and low latency, such as LLM inference.

These slowdowns could have a cascading impact on the entire AI ecosystem, affecting the development roadmap of new models and enterprises' ability to implement AI-based solutions. For those evaluating on-premises deployment, it is crucial to account for these infrastructure constraints in strategic planning, as the availability of hardware resources and deployment speed can no longer be taken for granted. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate the trade-offs between different deployment strategies, taking into account factors such as TCO, data sovereignty, and performance requirements.
