Rockwell Automation: AI challenges for autonomous manufacturing in Taiwan

Introduction

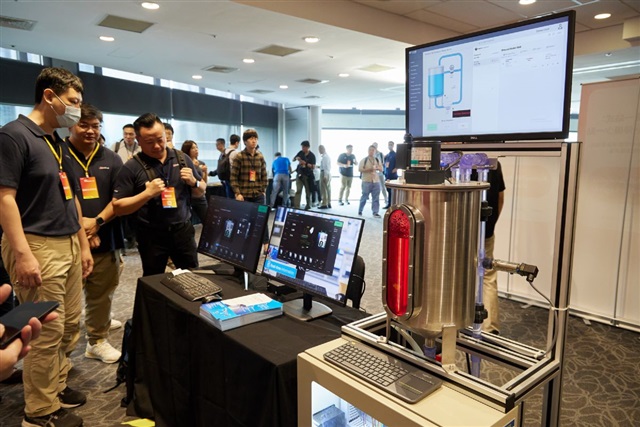

Rockwell Automation, a major player in industrial automation, recently highlighted the main challenges that artificial intelligence poses for the manufacturing sector. At the same time, the company presented a three-phase strategy designed specifically to support Taiwan's transition to an autonomous manufacturing model. The initiative underscores the growing importance of AI in optimizing production processes and the need for a structured approach to overcoming technical and operational obstacles.

The push toward autonomous automation marks a significant evolution for the industry, promising greater efficiency, fewer errors, and real-time adaptability. However, integrating complex AI systems into existing production environments requires meticulous planning and the ability to address issues ranging from data management to cybersecurity.

AI Challenges in the Industrial Context

The "key challenges" identified by Rockwell Automation, although not specified in detail, probably reflect the complexities inherent in adopting AI in industrial settings. These often include integration with legacy infrastructure, the need to process enormous volumes of data generated in real time by sensors and machinery, and the guarantee of reliability and safety in critical operating environments. Latency, for example, is a determining factor for real-time control applications, which often makes it preferable to process data as close to the source as possible.

This scenario confronts decision-makers with significant architectural choices, particularly regarding how AI solutions are deployed. The decision between a cloud-based approach and an on-premise or edge deployment is crucial. On-premise solutions offer advantages in data sovereignty, lower latency, and direct control over the infrastructure, all of which matter in sectors such as manufacturing that handle sensitive data and require immediate responses.

Strategies for Autonomous Manufacturing

The three-phase strategy proposed by Rockwell Automation for Taiwan suggests a methodical path to adopting AI in autonomous manufacturing. Typically, such strategies include phases such as data collection and organization, the development and fine-tuning of AI models (often Large Language Models or more specialized computer vision models), and finally the deployment and continuous optimization of the solutions. For companies operating in industrial settings, hardware selection plays a fundamental role.

The VRAM available on dedicated GPUs, throughput capacity, and the management of compute power are critical for running inference on complex models directly on the factory floor. Evaluating TCO (Total Cost of Ownership) becomes essential, taking into account not only the initial CapEx for hardware but also the operating expenses for energy, cooling, and maintenance.
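As a rough illustration of the TCO reasoning above, the following Python sketch adds the initial CapEx to the yearly operating expenses for energy, cooling, and maintenance. The class and function names, the treatment of cooling as a proportional overhead on energy use, and every numeric value are assumptions made for this example, not figures from Rockwell Automation. The model is undiscounted and ignores staffing, depreciation, and hardware refresh.

```python
from dataclasses import dataclass


@dataclass
class OnPremNode:
    """Hypothetical cost inputs for one inference server (illustrative only)."""
    capex: float                 # upfront hardware cost
    avg_power_kw: float          # average electrical draw of the server
    energy_price_per_kwh: float  # electricity tariff
    cooling_overhead: float      # extra energy for cooling, as a fraction of IT energy
    maintenance_per_year: float  # support contracts, spare parts


def annual_energy_cost(node: OnPremNode, hours_per_year: float = 8760.0) -> float:
    """Yearly energy cost, with cooling modeled as a proportional overhead."""
    it_energy_kwh = node.avg_power_kw * hours_per_year
    return it_energy_kwh * (1.0 + node.cooling_overhead) * node.energy_price_per_kwh


def total_cost_of_ownership(node: OnPremNode, years: int) -> float:
    """CapEx plus undiscounted OpEx (energy, cooling, maintenance) over the period."""
    opex_per_year = annual_energy_cost(node) + node.maintenance_per_year
    return node.capex + years * opex_per_year


# Made-up inputs; replace them with measured values for a real assessment.
node = OnPremNode(
    capex=50_000.0,
    avg_power_kw=2.0,
    energy_price_per_kwh=0.20,
    cooling_overhead=0.5,
    maintenance_per_year=4_000.0,
)
print(f"3-year TCO: {total_cost_of_ownership(node, years=3):,.2f}")
```

Even in this simplified form, the recurring energy and maintenance terms grow linearly with the time horizon, which is why a comparison based on purchase price alone can be misleading.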

Implications for On-Premise Deployment

For CTOs, DevOps leads, and infrastructure architects evaluating AI/LLM workloads in industrial environments, Rockwell Automation's considerations are particularly relevant. The need to maintain data sovereignty, meet stringent compliance requirements, and operate in air-gapped environments often pushes toward self-hosted, bare-metal solutions. This approach provides maximum control over data and infrastructure, reducing dependence on external vendors and mitigating security risks.

Designing an on-premise AI infrastructure requires careful evaluation of hardware specifications, from choosing the GPUs best suited to inference or training to configuring the network and storage. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks at /llm-onpremise for understanding the trade-offs between different architectures and optimizing TCO, without making specific recommendations but highlighting the constraints and opportunities.
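One part of that hardware evaluation, checking whether a model fits in a GPU's VRAM, can be approximated with simple arithmetic. The sketch below estimates weight memory as parameter count times bytes per parameter. The function names are hypothetical, and the 20% overhead for activations and caches is an assumed placeholder, not a measured figure; real usage depends on batch size, context length, and the inference runtime.

```python
def weights_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory for the model weights alone, in GiB."""
    return num_params * bytes_per_param / 2**30


def fits_in_vram(
    num_params: float,
    bytes_per_param: float,
    vram_gib: float,
    overhead_fraction: float = 0.2,  # assumed headroom, not a measured value
) -> bool:
    """Rough check: weights plus an assumed overhead must fit in available VRAM."""
    needed = weights_gib(num_params, bytes_per_param) * (1.0 + overhead_fraction)
    return needed <= vram_gib


# Illustrative cases: a 7-billion-parameter model at 16-bit and 4-bit precision.
for label, bytes_per_param in [("16-bit", 2.0), ("4-bit", 0.5)]:
    size = weights_gib(7e9, bytes_per_param)
    ok = fits_in_vram(7e9, bytes_per_param, vram_gib=8.0)
    print(f"{label}: weights ~{size:.1f} GiB, fits in 8 GiB: {ok}")
```

A check like this is only a first filter. It shows how quantization changes which GPUs are candidates, but throughput and latency still have to be measured on the target hardware.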
