The Market for Damaged Hardware and Its Implications

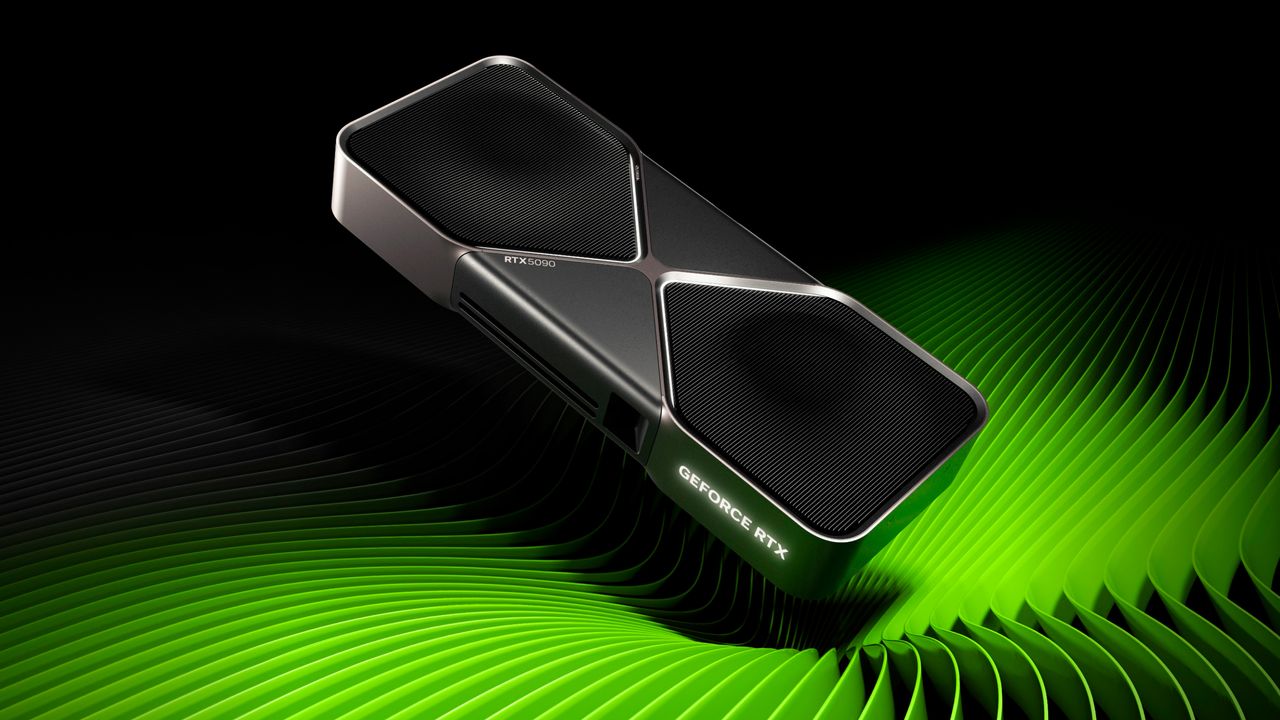

The landscape of artificial intelligence hardware is constantly evolving, driven by growing demand for high-performance components that can support the intensive workloads of large language models (LLMs). In this context, unusual offers on the market can generate significant discussion. Recently, a retailer listed GeForce RTX 5090 Founders Edition graphics cards, described as damaged in transit, at a starting price of approximately $1,760. What makes these units notable is that, despite external or functional damage, all essential components are present on the PCB.

This situation, though specific, offers food for thought for technical decision-makers, such as CTOs and infrastructure architects, who are evaluating on-premise deployment options. Purchasing low-cost hardware, even with defects, can be part of a TCO optimization strategy, but it requires careful evaluation of risks and opportunities. The availability of components on the PCB, for example, could open up scenarios for repair or partial reuse, crucial aspects for those managing physical infrastructures.

Technical Analysis and Reuse Potential

GPUs such as the GeForce RTX 5090 are fundamental components for accelerating LLM inference and fine-tuning workloads. VRAM capacity limits the size of the models that can be loaded, while compute capability determines how quickly tokens are processed. When an opportunity arises to acquire hardware at a significantly reduced cost, even if damaged, it is essential to consider the nature of the damage.
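The relationship between VRAM and model size can be sketched with simple arithmetic. The example below is a rough estimate only: it assumes 32 GB of VRAM (the publicly listed figure for the RTX 5090, not stated in this article), counts only model weights, and applies a hypothetical 20% overhead factor for activations and KV cache. The function names and the overhead value are illustrative assumptions, and real memory use depends on the runtime, context length, and batch size.

```python
# Rough VRAM fit check. All names and the overhead factor are illustrative assumptions.

def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


def fits_in_vram(params_billion: float, bytes_per_param: float,
                 vram_gb: float, overhead: float = 1.2) -> bool:
    """True if weights plus an assumed overhead margin fit in the given VRAM."""
    return weights_gb(params_billion, bytes_per_param) * overhead <= vram_gb


if __name__ == "__main__":
    VRAM_GB = 32.0  # assumed VRAM of one RTX 5090 (public spec, not from the article)
    candidates = [
        ("7B @ FP16", 7, 2.0),
        ("13B @ FP16", 13, 2.0),
        ("70B @ 4-bit", 70, 0.5),
    ]
    for label, params, bytes_pp in candidates:
        size = weights_gb(params, bytes_pp)
        verdict = "fits" if fits_in_vram(params, bytes_pp, VRAM_GB) else "does not fit"
        print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

A check like this only indicates whether a card is worth considering for a given model; a repaired unit with degraded or partially disabled memory would need the `vram_gb` argument adjusted to what the card actually reports.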

The fact that all components are present on the PCB suggests that the damage might be localized or superficial, potentially repairable with the right skills and equipment. For a DevOps team or an infrastructure architect with in-house hardware maintenance capabilities, these cards could represent a source of spare parts or, in some cases, repairable units for development, testing environments, or even less critical workloads. However, a thorough diagnosis is crucial to understand the extent of the damage and the feasibility of functional restoration, avoiding investments in resources that might not yield an adequate return.

TCO and On-Premise Deployment Strategies

Total Cost of Ownership (TCO) analysis is a cornerstone of on-premise deployment decisions. Purchasing new hardware involves high CapEx but comes with warranty coverage and predictable reliability. Acquiring damaged hardware at a lower price, as in the case of the RTX 5090s, introduces a complex variable into the TCO equation: the reduced initial cost must be weighed against the operational costs (OpEx) of repair, testing, and managing any future failures.
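One way to make this trade-off concrete is to compute the expected cost of each card that ends up working. The sketch below uses a deliberately simple model, which is an assumption rather than a method described in the article: every purchased unit incurs a repair and diagnosis cost, a repair succeeds with some probability, and failed units can be resold for a salvage value. Only the $1,760 purchase price comes from the listing; the repair cost, success probability, salvage value, and new-card price are hypothetical inputs.

```python
# Expected-cost sketch for damaged GPUs. The model and all inputs except the
# $1,760 purchase price are hypothetical assumptions.

def cost_per_working_card(purchase: float, repair: float,
                          success_prob: float, salvage: float = 0.0) -> float:
    """Expected spend per working card, crediting salvage from failed repairs."""
    if not 0 < success_prob <= 1:
        raise ValueError("success_prob must be in (0, 1]")
    return (purchase + repair - (1 - success_prob) * salvage) / success_prob


def break_even_success_prob(purchase: float, repair: float,
                            new_price: float, salvage: float = 0.0) -> float:
    """Repair success rate at which the damaged route costs the same as buying new."""
    if new_price <= salvage:
        raise ValueError("new_price must exceed salvage")
    return (purchase + repair - salvage) / (new_price - salvage)


if __name__ == "__main__":
    purchase = 1760.0    # starting price from the listing
    repair = 240.0       # hypothetical parts and labor per unit
    new_price = 2500.0   # hypothetical price of a new card
    for p in (0.5, 0.8, 1.0):
        cost = cost_per_working_card(purchase, repair, p)
        print(f"success rate {p:.0%}: ~${cost:,.0f} per working card")
    threshold = break_even_success_prob(purchase, repair, new_price)
    print(f"break-even success rate vs. new: {threshold:.0%}")
```

The model ignores warranty value, engineer time beyond the repair cost, and the risk of later failures, so the break-even rate it reports is best read as a lower bound on what a team should require before buying.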

For organizations prioritizing data sovereignty and complete control over infrastructure, self-hosted deployments on bare metal are often the preferred choice. In these contexts, hardware management, including maintenance and spare parts procurement, becomes a critical in-house capability. The opportunity to acquire low-cost components, even at higher risk, can be evaluated as part of a broader strategy to build and maintain a resilient and controlled AI infrastructure, especially in air-gapped environments or those with stringent compliance requirements.

Outlook and Trade-offs for Decision-Makers

The availability of damaged GPUs like the RTX 5090s on the market highlights the inherent trade-offs in AI infrastructure management. On one hand, there is the appeal of a significantly lower entry cost, which could allow budget-constrained teams to access high-end hardware. On the other hand, the risks around functionality, warranty, and durability require careful planning and dedicated technical resources. The decision to invest in such components must be supported by a clear understanding of internal repair capabilities and a realistic assessment of the value that the hardware, even if only partially functional, can bring to an LLM development framework or pipeline.

AI-RADAR focuses on analyzing these dynamics, providing analytical frameworks to evaluate the trade-offs between self-hosted and cloud solutions, and between different hardware acquisition strategies. There is no universal solution; the choice depends on specific project requirements, team expertise, and risk tolerance. For those evaluating on-premise deployments, resources and in-depth analyses at /llm-onpremise can help navigate these decisions, with an emphasis on a fact-based approach and TCO analysis.
