Indium Phosphide Semiconductors: New Horizons for AI Power and Bandwidth

The evolution of large language models (LLMs) and other AI applications continues to push existing hardware to its limits, driving demand for more computing power and bandwidth at better energy efficiency. In this context, indium phosphide (InP) compound semiconductors are emerging as a key technology that promises to ease some of the fundamental barriers limiting the performance and power consumption of AI systems. If these materials can handle intensive workloads more efficiently, they could reshape deployment strategies for AI infrastructure, particularly in self-hosted environments.

The Role of InP Semiconductors in AI

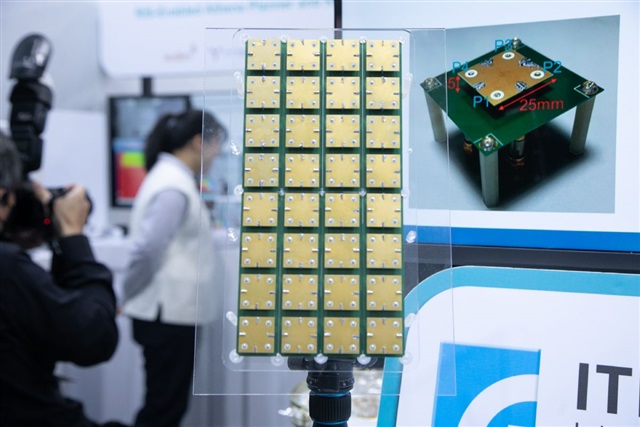

Silicon-based semiconductors are highly mature, but they have inherent limitations in applications that demand extreme speed and low power consumption, such as LLM inference and training. Indium phosphide offers higher electron mobility than silicon and, unlike silicon, has a direct bandgap, so it can emit and detect light efficiently. These characteristics allow InP-based devices to operate at higher frequencies, potentially with lower power dissipation for a given data rate, and make the material well suited to high-speed interconnects and integrated photonic modules. Such integration is crucial for overcoming bandwidth bottlenecks, an increasingly evident limiting factor in the parallel computing architectures that LLMs require. The promise is a new generation of AI accelerators that can process more tokens per second with significantly better energy efficiency.

Implications for On-Premise Deployments

For companies evaluating on-premise AI infrastructure, the introduction of InP semiconductors could have a transformative impact. Greater energy efficiency lowers the operating costs of powering and cooling data centers, which in turn reduces Total Cost of Ownership (TCO). Higher bandwidth is also fundamental for scaling AI workloads, since it allows larger models to be used or more users to be served at lower latency. This is particularly relevant for air-gapped environments or scenarios where data sovereignty is a top priority, because critical AI operations can remain within the organization's own infrastructure without compromising performance. InP-based hardware could therefore offer a significant competitive advantage by balancing performance with economic sustainability.
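The link between energy efficiency and operating cost can be made concrete with a back-of-the-envelope calculation. The sketch below is illustrative only: the function names, the use of PUE (Power Usage Effectiveness) to account for cooling overhead, and every input value are assumptions, not figures from this article or from any InP vendor.

```python
HOURS_PER_YEAR = 8760  # 24 h * 365 days


def annual_energy_cost(it_power_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost of an IT load, scaled by PUE to include cooling overhead."""
    if it_power_kw < 0 or price_per_kwh < 0:
        raise ValueError("power and price must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_power_kw * pue * HOURS_PER_YEAR * price_per_kwh


def annual_savings(baseline_kw: float, power_reduction: float,
                   pue: float, price_per_kwh: float) -> float:
    """Yearly savings if the same workload draws `power_reduction` (0..1) less IT power."""
    if not 0.0 <= power_reduction <= 1.0:
        raise ValueError("power_reduction must be between 0 and 1")
    improved_kw = baseline_kw * (1.0 - power_reduction)
    return (annual_energy_cost(baseline_kw, pue, price_per_kwh)
            - annual_energy_cost(improved_kw, pue, price_per_kwh))


if __name__ == "__main__":
    # Hypothetical inputs: 100 kW cluster, PUE 1.5, 0.10 per kWh, 20% lower power draw.
    baseline = annual_energy_cost(100.0, 1.5, 0.10)
    saved = annual_savings(100.0, 0.20, 1.5, 0.10)
    print(f"baseline cost per year: {baseline:,.0f}")
    print(f"savings per year:       {saved:,.0f}")
```

Energy is only one component of TCO. Hardware purchase price, which is likely to be higher for InP-based parts given the fabrication costs discussed below, would have to be weighed against these savings.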

Future Prospects and Challenges

Despite its potential, the widespread adoption of InP semiconductors in AI hardware still faces challenges. Mass production of InP chips is more complex and costly than that of silicon, requiring specialized fabrication processes. However, investments in research and development are accelerating, with the goal of making these technologies more accessible and scalable. In the long term, InP integration could not only improve the performance of dedicated accelerators but also pave the way for new computing architectures, such as neuromorphic systems or quantum computers, which could greatly benefit from the unique properties of these materials. For CTOs and infrastructure architects, monitoring the evolution of InP semiconductors will be crucial for planning future upgrades and ensuring that their AI infrastructures remain at the forefront.
